Local AI Playbook

Ollama, Hermes, autonomous agent companies. Cut cloud bills, own your stack, run private models at 30+ tokens per second on the GPU you already own.

3 playbooks · 15 free guides

> free_guides_

How to Fit a 26B LLM on a 16GB GPU

Q4_K_M is not the floor. Importance-matrix quantization, IQ3_M, and per-tensor tricks let you run models that 'cannot fit' on your GPU at usable quality.

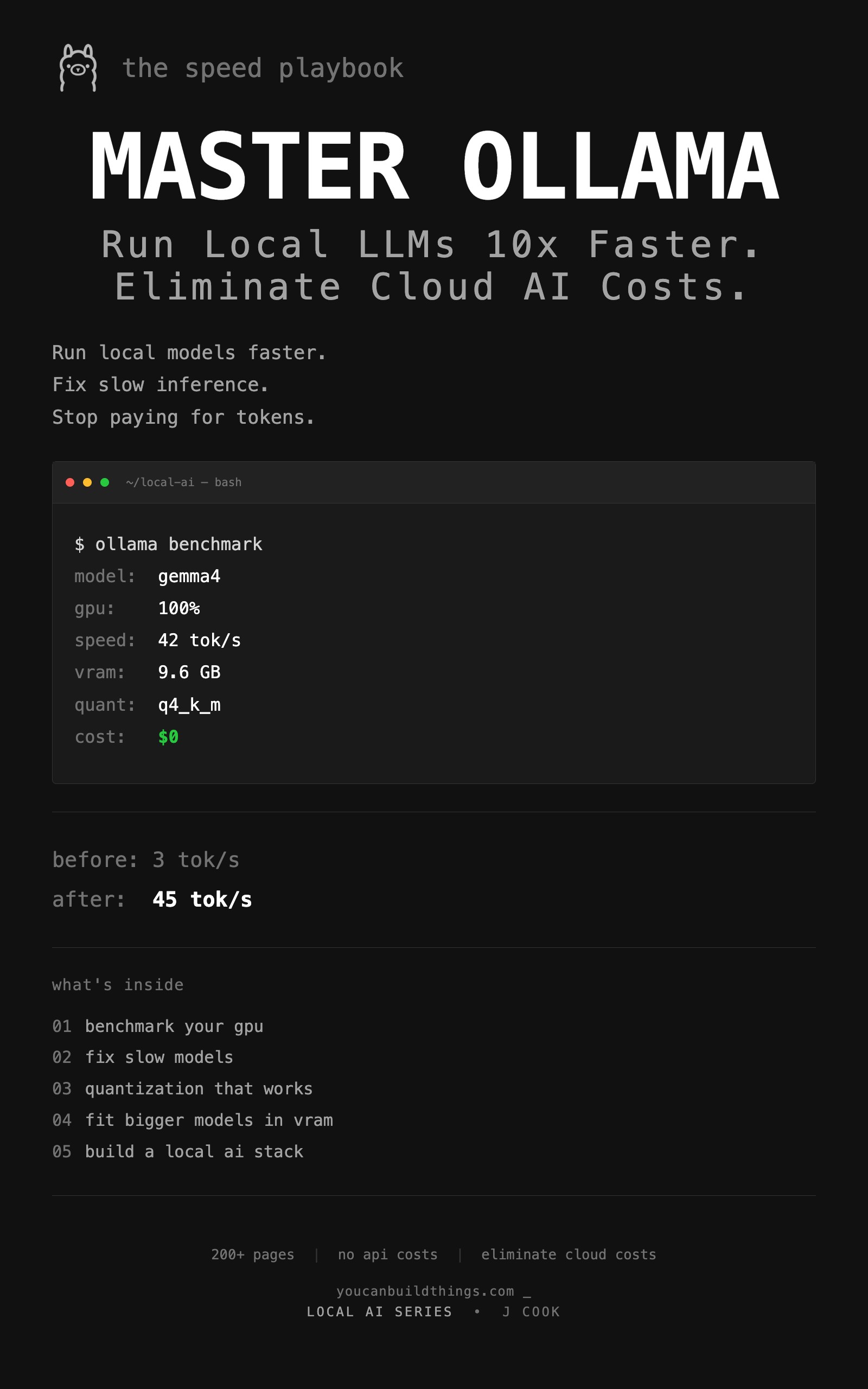

from: Master Ollama - The Speed Playbook

How Much VRAM Do You Need for a Local LLM?

The exact formula for predicting VRAM use of any local LLM, plus the KV cache table you need before you waste 20 minutes downloading a model that crashes.

from: Master Ollama - The Speed Playbook

Ollama Modelfile: 3 Templates That Beat the Defaults

Default Ollama settings produce mediocre output. These 3 ready-to-copy Modelfiles for chat, code, and analysis fix that in 2 minutes, with the reasoning behind each one spelled out.

from: Master Ollama - The Speed Playbook

Ollama vs llama.cpp: A Head-to-Head Speed Test

All three engines use llama.cpp. Here is the head-to-head test that debunks the 'double your speed' Reddit claim and tells you which one to actually run.

from: Master Ollama - The Speed Playbook

Why Is Ollama So Slow? A 6-Step Diagnostic

Is your Ollama stuck at 3 tok/s? This priority-ordered diagnostic finds the bottleneck in 5 minutes, with a specific fix and a tok/s test for each step.

from: Master Ollama - The Speed Playbook

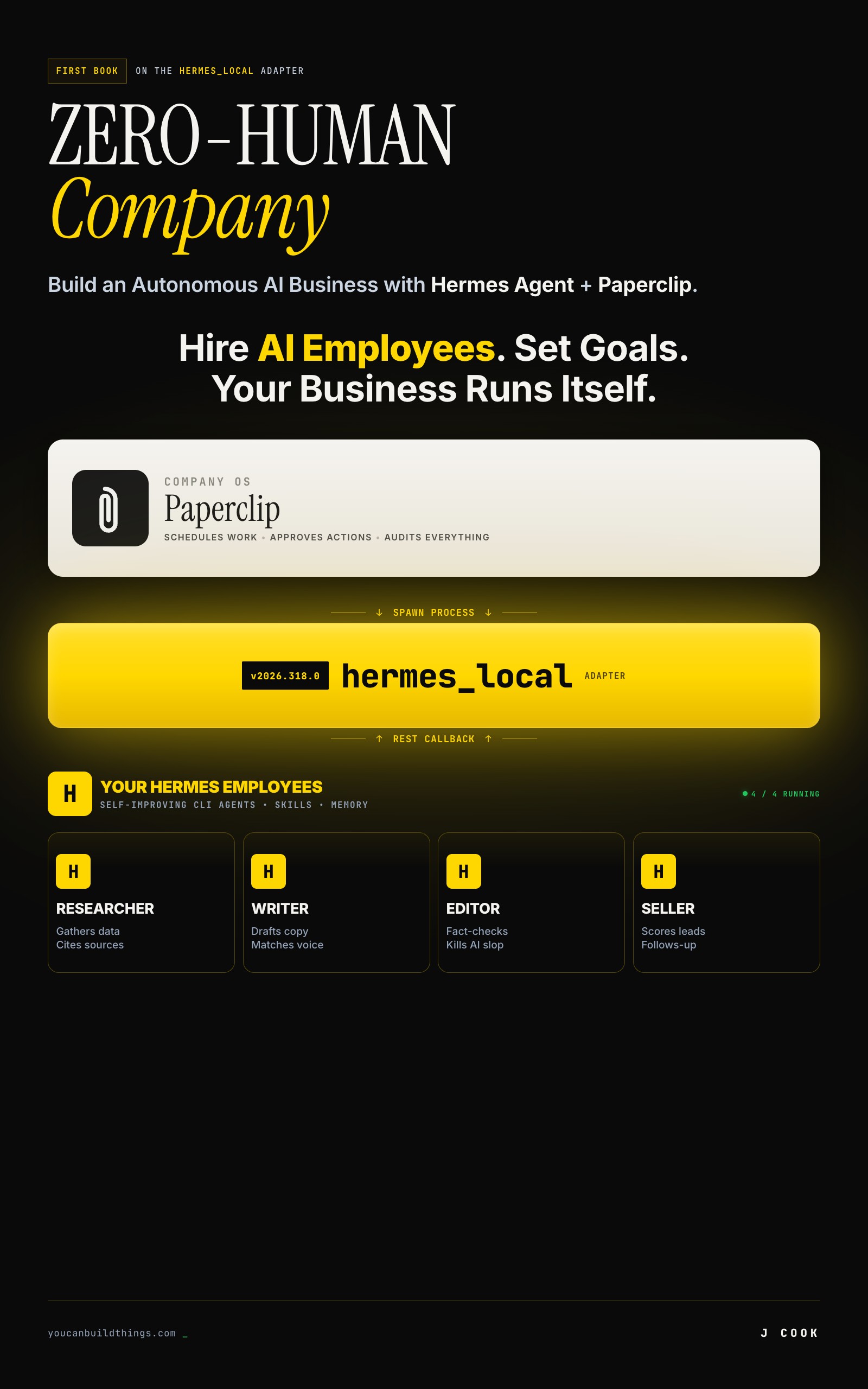

How Much Does a Hermes + Paperclip Company Cost to Run?

Real Hermes + Paperclip company costs by tier. Verified API pricing, a copy-paste cost calculator, and the $28.50/month 6-agent production setup.

from: Zero-Human Companies

8 Hermes + Paperclip Failures (and How to Fix Each One)

Why Hermes and Paperclip agents break in production. 8 failure modes from real GitHub issues with copy-paste fixes and a diagnostic function.

from: Zero-Human Companies

Build an AI Research Agency on Hermes + Paperclip

5-agent research agency on Paperclip with Hermes workers. Full JSON configs, $0.09/report cost math, and the ramp to $6K/month.

from: Zero-Human Companies

How to Set Up Hermes Agent Skills That Compound Over Time

Build a self-improving AI agent with Hermes Agent skills. Three mechanisms, real SKILL.md files, and the commands that make your agent smarter weekly.

from: Zero-Human Companies

Connect Hermes Agent to Paperclip with hermes_local

Step-by-step setup for the Paperclip hermes_local adapter. Full config schema, heartbeat loop, environment variables, and the persistSession bug workaround.

from: Zero-Human Companies

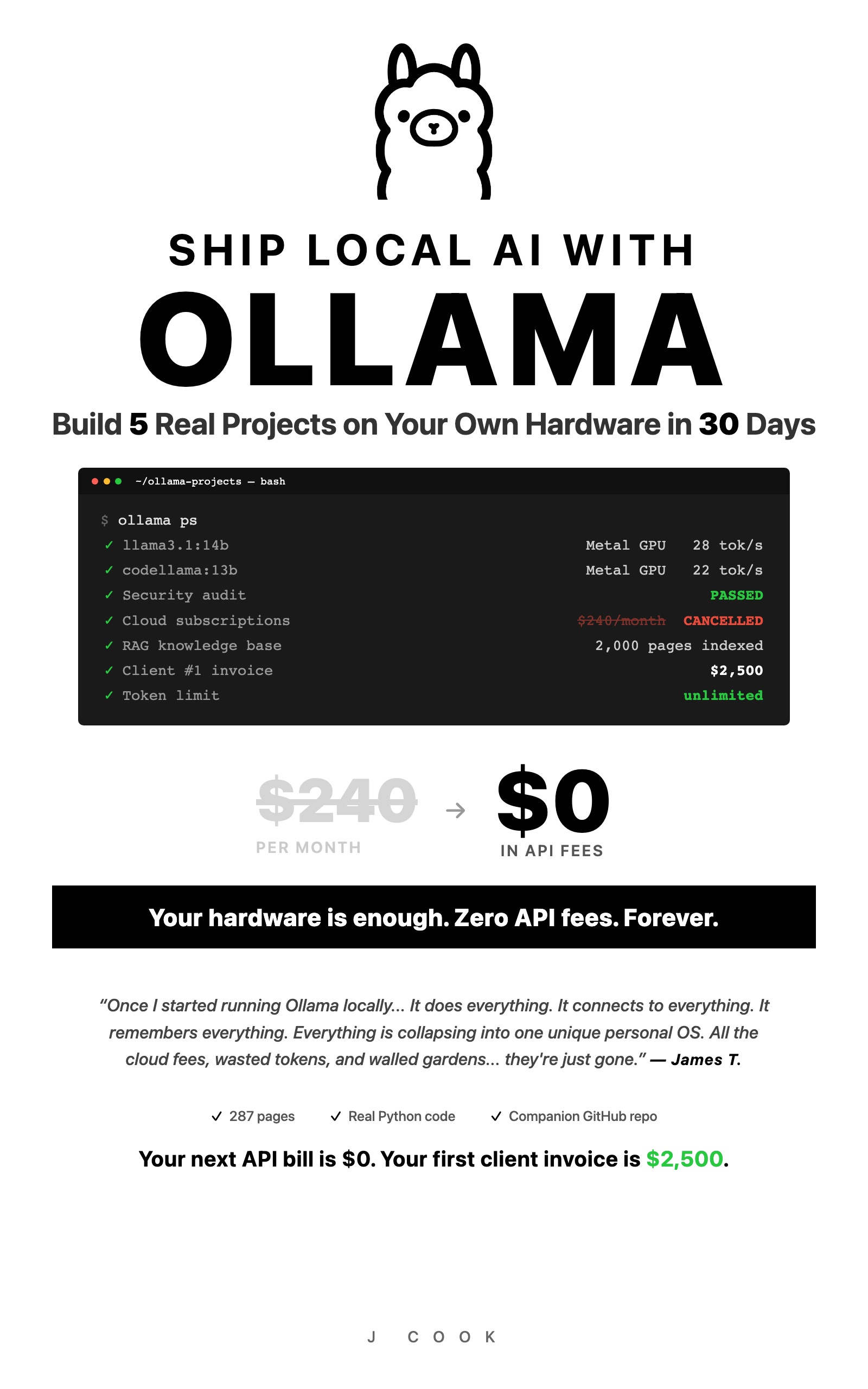

How to Replace GitHub Copilot with Ollama in VS Code

Set up a free AI coding assistant in VS Code using Ollama and Cline. Seven real workflows tested with honest quality scores compared to GitHub Copilot.

from: Ship Local AI with Ollama

How to Route Queries to Multiple Ollama Models

Build a Python router that sends coding questions to CodeLlama and general queries to Llama 3. Keyword-based and AI-powered routing strategies compared.

from: Ship Local AI with Ollama

How to Secure Your Ollama Installation

Run this 30-second audit script to catch the exact misconfiguration that exposed thousands of Ollama instances to the public internet. Copy-paste fixes included.

from: Ship Local AI with Ollama

Ollama vs ChatGPT: Honest Benchmarks from 100 Prompts

Benchmark scores comparing local Ollama models against GPT-4o across coding, writing, reasoning, and more. Data from 100 test prompts on a consumer laptop.

from: Ship Local AI with Ollama