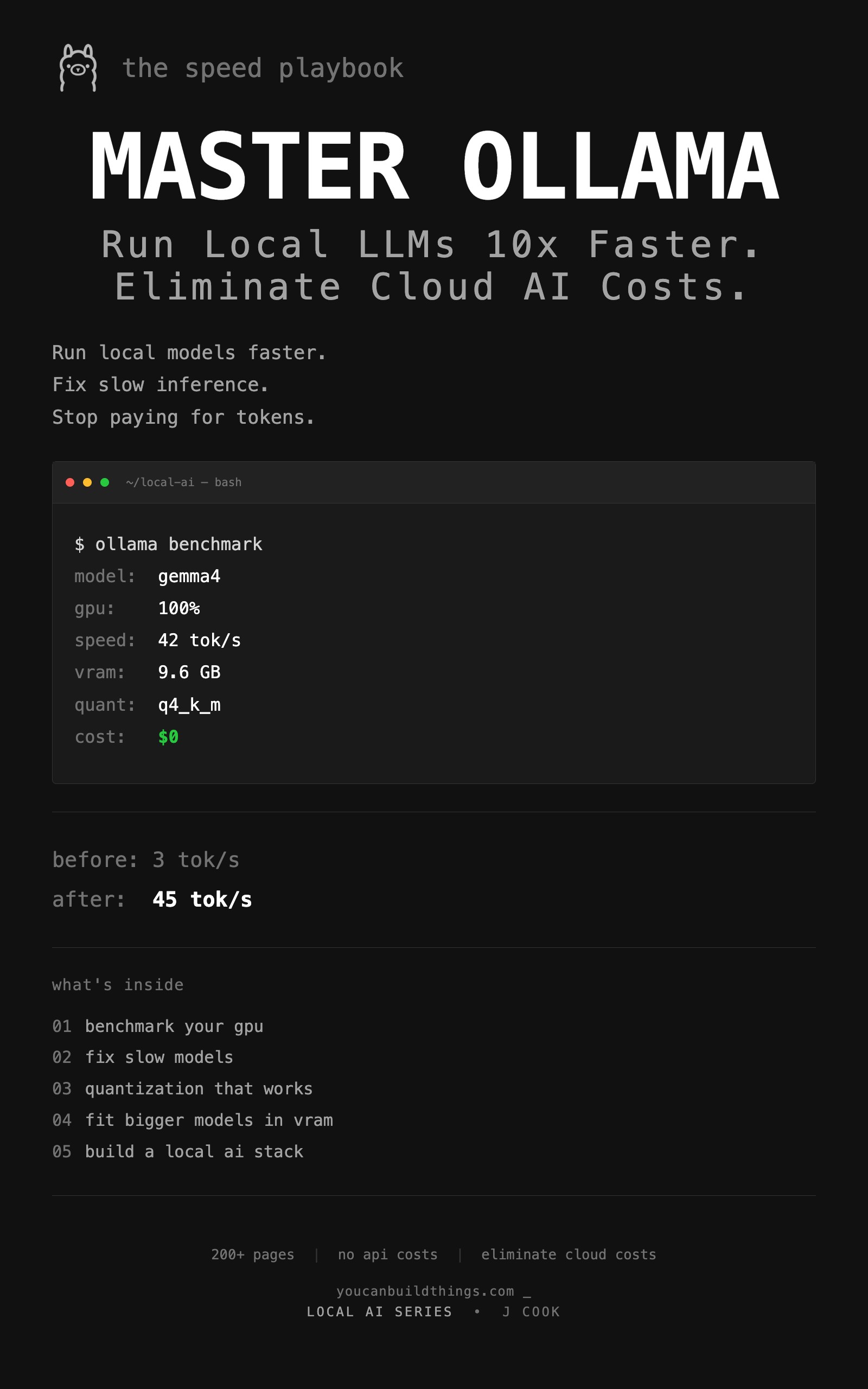

Master Ollama - The Speed Playbook

Run Local LLMs 10x Faster and Eliminate Cloud AI Costs This Weekend

You installed Ollama. Got 3 tok/s. Quit. This is the optimization playbook for people who tried local AI and bounced. Benchmark your GPU, predict VRAM in 30 seconds, fit a 26B model on 16GB, and pass the coffee test. 235 pages of measured fixes.

Stop installing Ollama and watching your GPU fan spin for thirteen minutes. The same hardware, properly configured, runs Gemma 4 26B at 30+ tok/s. The difference is not money. It is configuration.

What You'll Build

The 13-minute response problem and why your hardware is not the issue.

Gemma, Qwen, DeepSeek, Llama, Mistral, Phi — which one fits your GPU.

Real tok/s on your machine, not someone else's Reddit numbers.

Head-to-head benchmarks. The 'double your speed' claim debunked.

Three task-tuned configs that beat default settings.

The formula that ends the guessing game on which models fit.

The 6-bottleneck diagnostic flowchart in priority order.

Phone server, GPU cluster, NUC lab — three real builds.

IQ3_M and importance-matrix tricks to squeeze big models into small VRAM.

The 10-prompt framework you reuse every time a new model drops.

The honest line between local and cloud, with a decision sheet.

Evaluate any new release in 15 minutes with your benchmark suite.

Free Articles from this Book

How to Fit a 26B LLM on a 16GB GPU

Q4_K_M is not the floor. Importance-matrix quantization, IQ3_M, and per-tensor tricks let you run models that 'cannot fit' your GPU with usable quality.

from: Master Ollama - The Speed Playbook

How Much VRAM Do You Need for a Local LLM?

The exact formula for predicting VRAM use of any local LLM, plus the KV cache table you need before you waste 20 minutes downloading a model that crashes.

Ollama Modelfile: 3 Templates That Beat the Defaults

Default Ollama settings produce mediocre output. These 3 ready-to-copy Modelfiles for chat, code, and analysis fix that in 2 minutes, each with its reasoning spelled out.

Ollama vs llama.cpp: A Head-to-Head Speed Test

All three engines use llama.cpp. Here is the head-to-head test that debunks the 'double your speed' Reddit claim and tells you which one to actually run.

Why Is Ollama So Slow? A 6-Step Diagnostic

Is your Ollama stuck at 3 tok/s? This priority-ordered diagnostic finds the bottleneck in 5 minutes, with a specific fix and a tok/s test for each step.
