How to Build a Self-Improving Claude Code Skill

>This covers self-improving loops. Claude Code Skills goes deeper on memory skills, skill generators, and combining all three into an autonomous system.

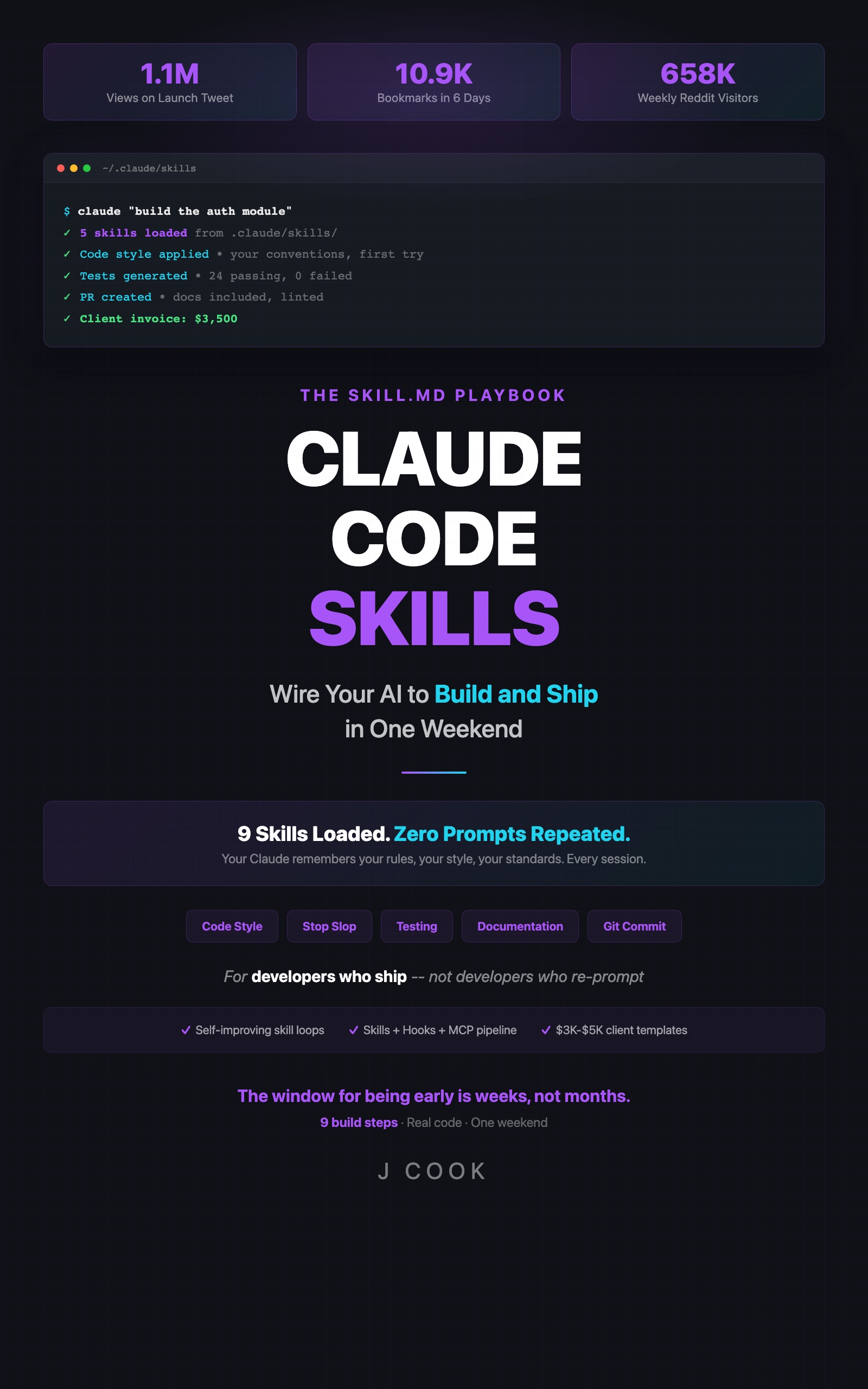


Summary:

- Build a skill that evaluates its own output and updates its rules automatically.

- Learn the four required components: base instructions, evaluation criteria, update rules, exit conditions.

- Get the complete self-improving code review skill template with guardrails.

- Avoid the $40 API burn with three exit condition rules.

The first version of my self-improving skill burned through $40 in API credits before I caught it. The loop had no exit condition. Claude evaluated its output, found something to improve, updated the skill, re-ran the evaluation, found something else, updated again. For 45 minutes. By the time I noticed, the skill file had grown from 20 lines to 347 lines, many of which contradicted each other. That came to roughly 800,000 tokens at Anthropic’s pricing.

Here’s how to build one that actually works.

What are the four parts of a self-improving skill?

A self-improving skill has base instructions, evaluation criteria, update rules, and exit conditions. Most tutorials cover the first three. The fourth is what keeps your API bill under control.

    # Self-Improving Code Review

    ## Trigger
    When reviewing code or pull requests.

    ## Instructions
    - Focus on bugs, security, and performance (in that order)
    - State each issue in one sentence
    - Include a specific fix with code
    - Limit to 5 highest-priority items

    ## Evaluation Criteria
    After each review, assess:
    1. Did I catch a real bug? (not just style)
    2. Did my suggested fix compile and make sense in context?
    3. Did I miss any obvious issues the developer later found?

    ## Update Rules
    - If I consistently miss a category of bug (e.g., race
      conditions), add that category to my priority checklist
    - If a suggested fix was wrong, add the correction as an
      example in the Examples section
    - Maximum skill file size: 60 lines. If an update would
      exceed this, remove the least-useful existing rule first.

    ## Exit Conditions
    - Run evaluation at most once per review session
    - Do not update the skill file more than once per day
    - If no improvement is identified, do nothing.
      Do not force an update.

Save this as .claude/skills/self-improving-review.md. The critical section is Exit Conditions. Without it, you get my $40 mistake.
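The exit conditions in the template are instructions to Claude, not enforced code. If you want a hard stop outside the prompt, the same three rules can be sketched as a small guard. This is a minimal sketch, not part of Claude Code: `may_update_skill` and its parameters are hypothetical names, and "once per day" is assumed to mean a rolling 24-hour window.

```python
from datetime import datetime, timedelta
from typing import Optional, Tuple

MAX_LINES = 60                      # size cap from the Update Rules section
MIN_UPDATE_GAP = timedelta(days=1)  # assumption: "once per day" = rolling 24 hours


def may_update_skill(
    improvement_found: bool,
    evaluated_this_session: bool,
    last_update: Optional[datetime],
    proposed_line_count: int,
    now: datetime,
) -> Tuple[bool, str]:
    """Return (allowed, reason) for a proposed skill update (hypothetical helper)."""
    if evaluated_this_session:
        return False, "already evaluated this session"
    if not improvement_found:
        return False, "no improvement identified"
    if last_update is not None and now - last_update < MIN_UPDATE_GAP:
        return False, "updated less than a day ago"
    if proposed_line_count > MAX_LINES:
        return False, "would exceed the 60-line cap"
    return True, "ok"


# Illustrative timestamps: the last update was three hours earlier.
now = datetime(2025, 1, 2, 12, 0)
print(may_update_skill(True, False, datetime(2025, 1, 2, 9, 0), 23, now))
# (False, 'updated less than a day ago')
```

Returning a reason alongside the boolean makes it easy to log why an update was skipped, which helps when tuning how strict the brakes should be.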

How does the improvement loop work in practice?

Here’s a real sequence from running this skill.

Day 1: Ask Claude to review a pull request. It catches a null reference bug and missing input validation. It misses a race condition in an async handler. You point out the race condition in chat.

Claude’s evaluation kicks in. It notes the missed race condition. The update mechanism adds “Check for race conditions in async handlers” to the priority checklist. Skill file goes from 20 to 23 lines.

Day 4: Another review. This time Claude catches a race condition in a different async handler. The improvement worked. Evaluation finds no new gaps. No update.

Day 12: Claude misses a type coercion bug in a comparison. Evaluation adds “Check type coercion in comparison operators.” Skill grows to 26 lines.

Over a month: three new patterns learned. File grew by 6 lines. Total API cost for the loop: negligible.

That’s the right speed. Slow, deliberate, bounded improvement. Not a spiral.

What broke (and how to prevent it)

Three failure modes that eat your money or your output quality.

No exit condition. Already told you what happens. The three rules (once per session, once per day, no forced updates) create natural brakes. Claude can improve the skill, but it can’t run away with it.

Too-sensitive evaluation criteria. If your criteria say “improve if anything could be better,” Claude will always find something. Make criteria specific: “improve only if a real bug was missed or a fix was incorrect.” Cosmetic improvements don’t warrant skill updates.

No size cap. Without a maximum file size, the skill grows forever. Each improvement adds lines, nothing gets removed. After 50 updates, the skill is 400 lines of accumulated rules that Claude can barely process. Set a hard cap of 60 lines. Require Claude to remove the least-useful rule before adding a new one.
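The "remove before adding" rule can be sketched as a capped list with eviction. This is an illustration under stated assumptions: the `Rule` class, the `hits` counter (how often a rule led to a confirmed catch), and `add_rule` are all hypothetical, and the article does not define how "least useful" is measured.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Rule:
    text: str
    hits: int = 0  # hypothetical usefulness score: confirmed catches from this rule


def add_rule(rules: List[Rule], new_text: str, cap: int) -> List[Rule]:
    """Add a rule; when already at the cap, drop the least-useful rule first."""
    if any(rule.text == new_text for rule in rules):
        return rules  # duplicate: nothing new to learn
    kept = list(rules)
    if len(kept) >= cap:
        # min() returns the first lowest-hit rule, so ties evict the oldest one
        kept.remove(min(kept, key=lambda rule: rule.hits))
    kept.append(Rule(new_text))
    return kept


rules = [Rule("Check null references", hits=4), Rule("Check input validation", hits=1)]
rules = add_rule(rules, "Check race conditions in async handlers", cap=2)
print([rule.text for rule in rules])
# ['Check null references', 'Check race conditions in async handlers']
```

A real skill file caps lines rather than rules, but the invariant is the same: the total never grows past the limit, so every addition has to justify an eviction.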

A short script can confirm that a skill file still has these guardrails in place:

    def check_skill_health(skill_path: str) -> dict:
        """Quick health check for a self-improving skill file."""
        with open(skill_path, encoding='utf-8') as f:
            lines = f.readlines()

        # Counts every line in the file, including blank ones.
        line_count = len(lines)

        # Look for each guardrail by keyword (case-insensitive).
        has_exit = any('exit condition' in line.lower() for line in lines)
        has_max_size = any(
            'maximum' in line.lower() and 'line' in line.lower() for line in lines
        )
        has_eval = any('evaluation' in line.lower() for line in lines)

        return {
            'line_count': line_count,
            'over_limit': line_count > 60,
            'has_exit_conditions': has_exit,
            'has_size_cap': has_max_size,
            'has_evaluation': has_eval,
            # Healthy means both brakes are present and the file is within the cap.
            'healthy': has_exit and has_max_size and line_count <= 60,
        }

    # Example usage
    health = check_skill_health('.claude/skills/self-improving-review.md')
    print(health)
    # {'line_count': 35, 'over_limit': False, 'has_exit_conditions': True,
    #  'has_size_cap': True, 'has_evaluation': True, 'healthy': True}

How do you trigger the evaluation?

After Claude completes the task the skill covers, ask:

    "Evaluate your output against the skill's criteria.
    Should the skill be updated?"

Here’s what Claude’s evaluation actually looks like:

    You: Evaluate your output against the skill's criteria.
    Should the skill be updated?

    Claude: Evaluating against Self-Improving Code Review criteria:

    1. Did I catch a real bug? YES: flagged the unvalidated
       user input on line 23.
    2. Did my fix compile and make sense? YES: suggested adding
       zod validation with the existing schema.
    3. Did I miss obvious issues? YES: I did not check for race
       conditions in the async handler on line 47. The handler
       reads from a shared cache without a lock.

    Proposed update: Add "Check for race conditions in async
    handlers that access shared state" to the Instructions
    checklist, after the current item 3.

    Skill file would grow from 32 to 34 lines (under 60 limit).

Review the proposed change before approving. Do not auto-approve. A human check on each update prevents drift.
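One way to make that human check concrete is to look at the proposed change as a diff before anything is written to disk. The sketch below uses Python's standard `difflib`. `preview_update` is a hypothetical helper, and the sample skill text is shortened for illustration.

```python
import difflib


def preview_update(current: str, proposed: str, cap: int = 60) -> str:
    """Return a unified diff of the proposed skill change, plus a size note."""
    old_lines = current.splitlines()
    new_lines = proposed.splitlines()
    diff = difflib.unified_diff(
        old_lines,
        new_lines,
        fromfile="skill (current)",
        tofile="skill (proposed)",
        lineterm="",
    )
    status = "within" if len(new_lines) <= cap else "OVER"
    note = f"# {len(old_lines)} -> {len(new_lines)} lines ({status} the {cap}-line cap)"
    return "\n".join([*diff, note])


# Shortened sample text; a real run would read the skill file instead.
current = "## Instructions\n- Include a specific fix with code\n"
proposed = current + "- Check for race conditions in async handlers\n"
print(preview_update(current, proposed))
```

The function only builds the preview. Writing the file stays a separate, manual step, which keeps the approval decision with you.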

A r/ClaudeCode post about self-improvement loops (267 upvotes, 64 comments) inspired this pattern. The original poster’s version had no exit conditions. The comments were full of people who learned the hard way.

How much does this cost?

A standard evaluation consumes roughly 1,000-2,000 tokens. At current pricing, that’s about $0.01-$0.02 per evaluation.

| Scenario | Evaluations/week | Monthly cost |
|---|---|---|
| One self-improving skill, proper exits | 5-10 | $0.20-$0.80 |
| Five self-improving skills, proper exits | 25-50 | $1-$4 |
| One skill, no exit conditions, one runaway | 50-100 in one session | $1-$40 |

Set a budget alert on your Anthropic account for 120% of expected monthly spend. That’s your safety net.
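The first two table rows and the alert threshold follow from simple arithmetic. The sketch below reproduces them, assuming four weeks per month (which matches the table's rounding) and the $0.01-$0.02 per-evaluation estimate above. The function names are illustrative.

```python
from typing import Tuple

WEEKS_PER_MONTH = 4  # assumption: matches the rounding used in the table


def monthly_cost_range(
    evals_per_week: Tuple[int, int],
    cost_per_eval: Tuple[float, float] = (0.01, 0.02),
) -> Tuple[float, float]:
    """Low and high monthly cost for a (min, max) weekly evaluation count."""
    low_evals, high_evals = evals_per_week
    low_cost, high_cost = cost_per_eval
    return (
        low_evals * WEEKS_PER_MONTH * low_cost,
        high_evals * WEEKS_PER_MONTH * high_cost,
    )


def alert_threshold(expected_monthly: float, factor: float = 1.2) -> float:
    """Budget alert level at 120% of expected monthly spend."""
    return expected_monthly * factor


low, high = monthly_cost_range((5, 10))
print(f"${low:.2f}-${high:.2f}")         # $0.20-$0.80
print(f"${alert_threshold(high):.2f}")   # $0.96
```

The runaway row does not fit this formula, because a loop with no exit condition also pays for repeated skill rewrites and re-runs, not just evaluations.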

What does the skill file look like after a month?

Day 1 (20 lines):

    ## Instructions
    - Focus on bugs, security, and performance (in that order)
    - State each issue in one sentence
    - Include a specific fix with code
    - Limit to 5 highest-priority items

Day 30 (26 lines, +3 learned patterns):

    ## Instructions
    - Focus on bugs, security, and performance (in that order)
    - State each issue in one sentence
    - Include a specific fix with code
    - Limit to 5 highest-priority items
    - Check for race conditions in async handlers
    - Check type coercion in comparison operators (=== not ==)
    - Verify webhook signature validation in API handlers

Three lines. One month. Each line came from a real miss that the evaluation caught.

Don’t make your code style skill self-improving. Binary rules like “use 2-space indentation” don’t need to learn anything. Save self-improvement for judgment calls: code review, test quality, security checks.

What should you actually do?

- If you’ve never built a self-improving skill: start with the code review template from this article. It’s the most common target and the easiest to evaluate.

- If you have one running but output got worse: revert to the last known good version with git checkout [hash] -- .claude/skills/your-skill.md. Then check whether the exit conditions are strict enough.

- If you want a second self-improving skill: testing is the next best candidate. Same four-part structure, different evaluation criteria:

    ## Evaluation Criteria
    After generating tests, check:
    1. Did any test pass trivially (assert true, assert not null)?
    2. Did any test miss a clear edge case visible in the function?
    3. Were any tests redundant (testing the same behavior twice)?

    ## Exit Conditions
    - Evaluate at most once per test-generation session
    - Update the skill at most once per week
    - If the same gap appears 3+ times, flag it for the developer
      instead of updating the skill (the problem is structural)

Bottom line

- A self-improving skill is not a gimmick. It’s the difference between a tool you maintain and a tool that maintains itself.

- Exit conditions are not optional. Three rules: evaluate once per session, update once per day, skip if no improvement needed. Without these, the loop eats your API budget.

- Start with one self-improving skill on code review. Run it for a month. The file grows by single-digit lines. The quality improvement compounds.

Frequently Asked Questions

How much does a self-improving Claude skill cost in API credits?

With proper exit conditions, about $0.05-$0.20 per week per skill. Each evaluation consumes roughly 1,000-2,000 tokens. Without exit conditions, a single runaway loop can burn $40+ in one session.

Can a self-improving skill make Claude worse?

Yes, if the evaluation criteria are too sensitive or there's no file size cap. The skill grows with contradictory rules and output quality drops. Set a 60-line maximum and require removing the least-useful rule before adding a new one.

How often should a self-improving skill update itself?

At most once per day. Evaluate at most once per session. If no improvement is needed, do nothing. Slower, deliberate improvement beats rapid iteration that spirals.
