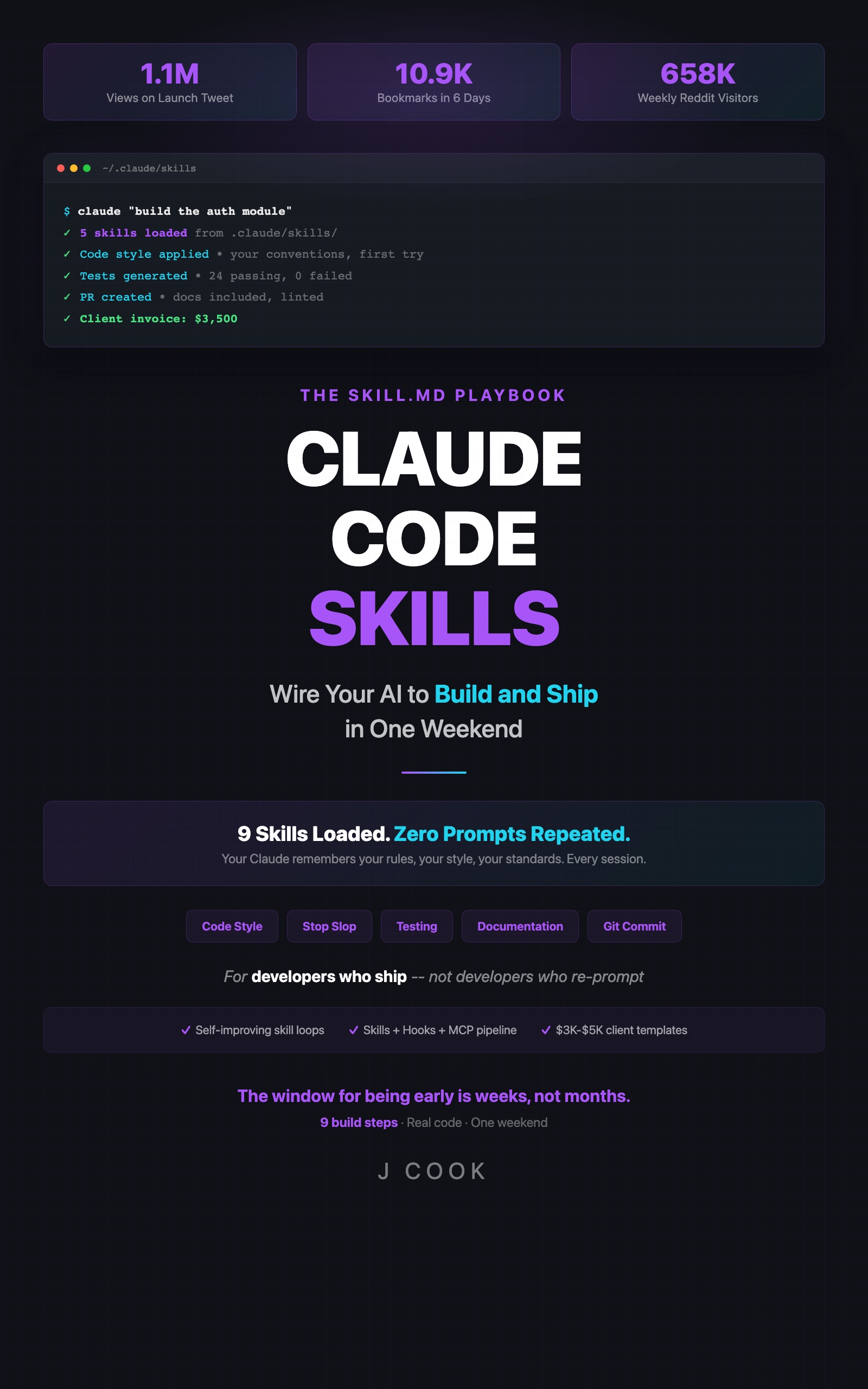

5 Claude Code Skills Every Developer Should Build

>This covers the starter five. Claude Code Skills goes deeper on self-improving loops, architecture for 50+ skills, and turning your library into income.

Claude Code Skills

The SKILL.md Playbook — Wire Your AI to Build and Ship in One Weekend

Summary:

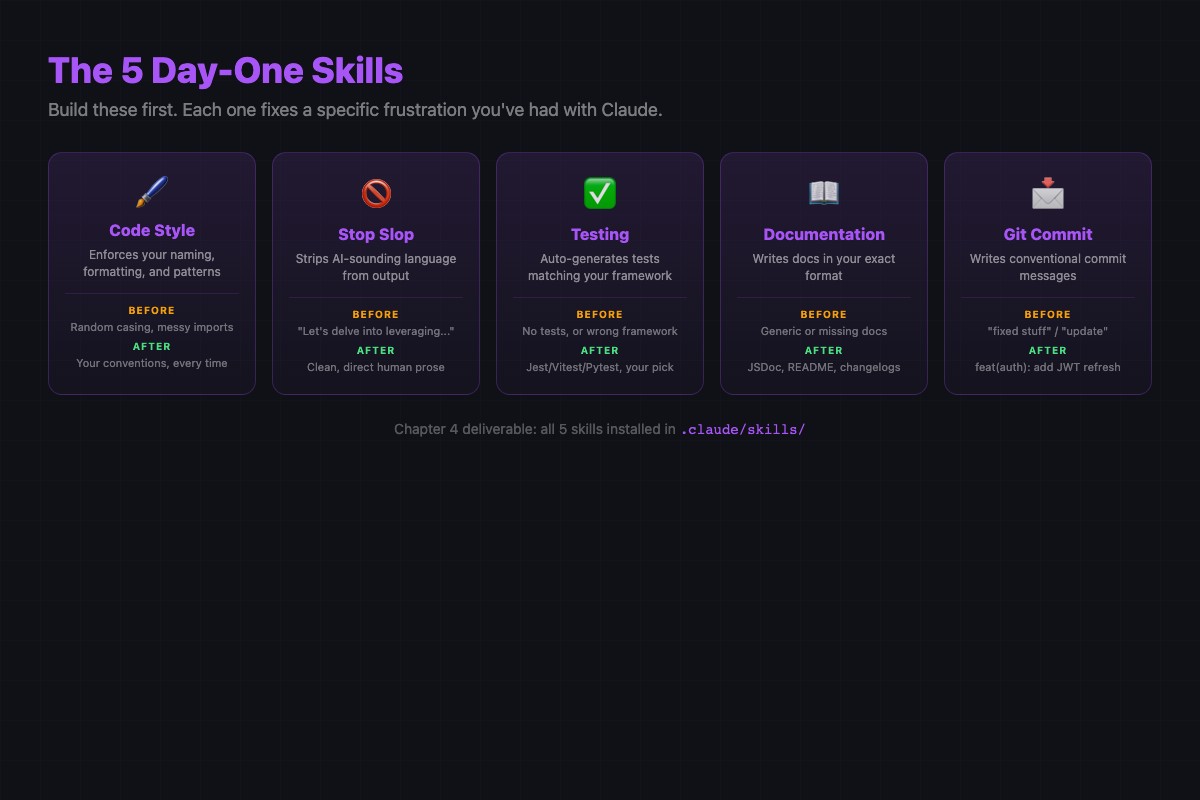

- Five copy-paste skill files that fix the most common Claude Code output problems.

- Each skill targets one concern: generic code, weak tests, useless docs, vague commits, shallow reviews.

- Before/after comparison showing all five firing on a single prompt.

- Complete markdown content for each skill, ready to drop into `.claude/skills/`.

The awesome-claude-skills repo (47.9k stars) tracks what the community actually builds. Here’s how the 70+ community skills break down:

| Category | Community skills |
|---|---|
| Development & Code Tools | 23 |
| Productivity & Organization | 8 |
| Communication & Writing | 7 |
| Creative & Media | 7 |
| Business & Marketing | 5 |

Development tools dominate because that’s where the pain is sharpest. The five skills below target the same category. An advanced skill that takes 30 minutes to set up and produces subtle improvements is cool. A basic skill that takes 5 minutes and immediately makes your code cleaner is money.

How does the Stop Slop skill work?

Stop Slop strips the machine-generated feel from Claude’s code output. Generic variable names, restated comments, placeholder data. This skill kills all of it.

```markdown
# Stop Slop

## Trigger
Always active.

## Instructions
- Variable names must be specific to their content.
  Bad: data, result, response, item, temp
  Good: userProfile, invoiceTotal, apiResponse, cartItem
- Do not write comments that restate the code.
  Bad: // Loop through the array
  Good: // Filter out users who haven't verified their email
- Do not generate placeholder data. Use realistic values.
  Bad: "John Doe", "test@test.com", "123 Main St"
  Good: "Priya Sharma", "priya.sharma@fastmail.com"
- Prefer early returns over nested if/else chains.
- Never generate code that "demonstrates the concept."
  Generate code that solves the actual problem.

## Constraints
- If unsure about domain terminology, ask. Don't default
  to generic names.
- Do not add TODO comments. Implement the feature or explain
  what's missing in your response text.
```

Save as `.claude/skills/stop-slop.md`. This is the single highest-impact skill for code quality.
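As a rough illustration of the "specific names" and "early returns" rules, here is a small TypeScript sketch. The `Invoice` shape and both function names are hypothetical, invented only to contrast the two styles.

```typescript
// Hypothetical invoice shape, invented for this illustration.
interface Invoice {
  lineItemAmountsUsd: number[];
  isVoided: boolean;
}

// Nested version: the real work is buried two levels deep.
function invoiceTotalNested(invoice: Invoice | null): number {
  if (invoice !== null) {
    if (!invoice.isVoided) {
      return invoice.lineItemAmountsUsd.reduce((totalUsd, amountUsd) => totalUsd + amountUsd, 0);
    } else {
      return 0;
    }
  } else {
    return 0;
  }
}

// Early-return version: guard clauses first, main calculation last.
function invoiceTotalUsd(invoice: Invoice | null): number {
  if (invoice === null) return 0;
  if (invoice.isVoided) return 0;
  return invoice.lineItemAmountsUsd.reduce((totalUsd, amountUsd) => totalUsd + amountUsd, 0);
}
```

Both functions return the same totals. The second one reads top to bottom without tracking which branch you are in.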

How does the Testing Standards skill work?

Testing Standards makes Claude write tests in your framework with your assertion patterns. Without it, Claude guesses the framework and writes weak assertions.

```markdown
# Testing Standards

## Trigger
When writing or modifying test files.

## Instructions
- Framework: Vitest (import from 'vitest', not 'jest')
- Test file location: mirror source path
  src/utils/format.ts -> tests/utils/format.test.ts
- Structure: describe() grouped by function, it() per case
- Naming: it('should [behavior] when [condition]')
- Each function gets minimum 3 tests:
  1. Happy path with typical input
  2. Error/edge case (null, empty, boundary)
  3. Integration behavior with dependencies
- Assert on specific values, not truthiness
  Bad: expect(result).toBeTruthy()
  Good: expect(result).toBe('validated')

## Constraints
- Do not import from 'jest' or '@jest/globals'
- Do not use snapshot tests unless explicitly asked
- Do not test private functions directly
```

The key line: “assert on specific values, not truthiness.” That single rule catches the laziest AI testing pattern: tests that pass but prove nothing.
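To see why the truthiness rule matters, consider a sketch with a deliberately buggy validator. The function `validateEmail` and its status strings are hypothetical. The point is only that a truthy check cannot tell a right answer from a wrong one.

```typescript
// Hypothetical validator with a deliberate bug: it accepts an address that has no domain.
function validateEmail(emailAddress: string): string {
  return emailAddress.includes('@') ? 'validated' : 'rejected';
}

const statusForMissingDomain = validateEmail('priya.sharma@');

// Truthiness check: any non-empty string passes, so the bug goes unnoticed.
// Even the string 'rejected' would be truthy.
const truthinessCheckPasses = Boolean(statusForMissingDomain);

// Specific-value check: compares against the expected status and exposes the bug.
const specificValueCheckPasses = statusForMissingDomain === 'rejected';

console.log(truthinessCheckPasses, specificValueCheckPasses); // prints: true false
```

The truthy check stays green while the function is wrong. The specific-value check fails, which is what you want from a test here.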

Python variant (swap Vitest for pytest):

```markdown
# Testing Standards

## Trigger
When writing or modifying test files.

## Instructions
- Framework: pytest (never use unittest)
- Test file location: mirror source path
  src/utils/format.py -> tests/utils/test_format.py
- Naming: test_validate_email_rejects_missing_domain
- Each function gets minimum 3 tests
- Use parametrize for 3+ similar test cases
- Assert on specific values
  Bad: assert result
  Good: assert result == 'validated'

## Constraints
- Do not import from unittest
- Do not use mock.patch unless dependency is external (API, DB)
```

How does the Documentation skill work?

The Documentation skill sets rules for when Claude documents and when it shuts up. Without it, Claude writes 200-word JSDoc blocks for one-line helpers and skips docs entirely for complex public APIs.

```markdown
# Documentation

## Trigger
When writing or modifying source files.

## Instructions
- Add JSDoc to all exported functions and classes
- Required tags: @param (with type), @returns, @throws
- Add @example for any function with non-obvious usage
- Skip documentation for:
  - Private helpers under 10 lines
  - Obvious getters/setters
  - Test files
- Inline comments: only when the WHY is non-obvious.
  Never comment WHAT the code does.

## Example
Bad:
/** Gets the user */
export function getUser(id: string): User

Good:
/**
 * Fetches user profile from cache, falling back to DB
 * if cache entry is stale (>5 min).
 * @param id - UUID of the user
 * @returns User profile, or null if not found
 * @throws {DatabaseError} If connection pool exhausted
 */
export async function getUser(id: string): Promise<User | null>
```

Before this skill, I spent half my code review time asking Claude to either add documentation or remove useless comments. After: present where it matters, absent where it doesn’t. The “never comment WHAT” constraint alone eliminated 80% of noise comments.
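A sketch of what these rules aim for when applied together: an exported function with the required tags plus an `@example`, next to a short private helper that gets no doc block. The names `formatPriceUsd` and `padCents` are hypothetical and not taken from any real codebase.

```typescript
// Private helper under 10 lines: left without a JSDoc block, per the skip rule.
function padCents(cents: number): string {
  return cents.toString().padStart(2, '0');
}

/**
 * Formats an integer amount of cents as a US dollar string.
 * @param amountCents - Non-negative integer number of cents
 * @returns Dollar string such as "$12.05"
 * @throws {RangeError} If amountCents is negative or not an integer
 * @example
 * formatPriceUsd(1205) // "$12.05"
 */
export function formatPriceUsd(amountCents: number): string {
  if (!Number.isInteger(amountCents) || amountCents < 0) {
    throw new RangeError(`amountCents must be a non-negative integer, got ${amountCents}`);
  }
  const wholeDollars = Math.floor(amountCents / 100);
  return `$${wholeDollars}.${padCents(amountCents % 100)}`;
}
```

The doc block explains the contract (units, failure mode, a usage example) rather than restating the function name.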

How does the Git Commits skill work?

The Git Commits skill enforces conventional commit format on every commit Claude creates. Twenty lines, takes effect immediately.

```markdown
# Git Commits

## Trigger
When creating commits or writing commit messages.

## Instructions
- Format: type(scope): short description
- Types: feat, fix, refactor, test, docs, chore, perf
- Scope: module or feature area (auth, api, ui)
- Description: imperative mood, lowercase, no period, max 72 chars
- Body (if needed): blank line after subject, explain WHY not WHAT

## Constraints
- Never use these as the whole description: "update", "fix",
  "changes", "misc", "various improvements", "minor fixes", "WIP"
- Never include file lists in the commit subject
- If change touches 3+ unrelated areas, split commits
```

Without this, Claude’s commit messages alternate between “update files” and three-paragraph essays. Neither is useful in a git log. This skill turns “Updated some stuff in the auth module” into “fix(auth): reject tokens with expired refresh claims”.
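The subject-line rules are mechanical enough to check in code. Below is a minimal sketch, not part of the skill itself. The function name `isValidCommitSubject` and the regular expression are assumptions about how one might encode the rules. It reads "lowercase" as "the description starts with a lowercase letter" and applies the 72-character limit to the whole subject. Imperative mood is not checked.

```typescript
const COMMIT_TYPES = ['feat', 'fix', 'refactor', 'test', 'docs', 'chore', 'perf'];
const MAX_SUBJECT_LENGTH = 72;

// Hypothetical checker for the format "type(scope): short description".
function isValidCommitSubject(subject: string): boolean {
  const match = /^([a-z]+)\(([a-z0-9-]+)\): (.+)$/.exec(subject);
  if (match === null) return false;

  const [, commitType, , description] = match;
  if (!COMMIT_TYPES.includes(commitType)) return false;
  if (subject.length > MAX_SUBJECT_LENGTH) return false;
  if (description.endsWith('.')) return false;

  // Assumption: "lowercase" means the first character is not uppercase.
  const firstCharacter = description.charAt(0);
  return firstCharacter === firstCharacter.toLowerCase();
}

console.log(isValidCommitSubject('fix(auth): reject tokens with expired refresh claims')); // true
console.log(isValidCommitSubject('Updated some stuff in the auth module')); // false
```

A check like this could run in a commit hook, though the skill works without it.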

How does the Code Review skill work?

The Code Review skill makes Claude review like a senior engineer who respects your time. No manufactured praise. Problems, risks, and fixes.

```markdown
# Code Review

## Trigger
When reviewing code, pull requests, or diffs.

## Instructions
- Priority order:
  1. Bugs and correctness issues
  2. Security concerns
  3. Performance (only if measurable impact)
  4. API design and naming
  5. Style (only if not caught by linter)
- For each issue:
  - State problem in one sentence
  - Explain the risk (what breaks, when)
  - Suggest specific fix with code
- If code is fine, say "No issues found" and stop.
  Do not manufacture feedback.

## Constraints
- Do not comment on formatting if a linter handles it
- Do not suggest refactoring that changes public API
  without flagging as breaking change
- Limit to 5 highest-priority items
```

The instruction “If code is fine, say ‘No issues found’ and stop” is the key line. Claude’s default review mode includes “Great use of the factory pattern here!” which wastes time and feels artificial.

What happens when all five fire at once?

One prompt: “Write a function that calculates cart total including tax and shipping, write tests, document it, and create a commit message.”

Without skills:

```typescript
// Calculates the total
export default function calculateTotal(items: any[], tax: number, shipping: number) {
  var total = 0;
  for (var i = 0; i < items.length; i++) {
    total += items[i].price * items[i].quantity;
  }
  return total + (total * tax) + shipping;
}

// Test
test('should work', () => {
  expect(calculateTotal([{price: 10, quantity: 2}], 0.08, 5)).toBeTruthy()
})

// Commit: "Add calculateTotal function"
```

any[]. var. Default export. Useless comment. Jest. Truthiness assertion. Vague commit.

With all five skills active:

```typescript
/**
 * Calculates cart total including tax and flat-rate shipping.
 * @param cartItems - Array of items with priceUsd and quantity
 * @param taxRateDecimal - Tax rate as decimal (0.08 = 8%)
 * @param shippingCostUsd - Flat shipping cost
 * @returns Total in USD, rounded to 2 decimal places
 */
export const calculateCartTotal = (
  cartItems: CartItem[],
  taxRateDecimal: number,
  shippingCostUsd: number,
): number => {
  const subtotalUsd = cartItems.reduce(
    (sum, item) => sum + item.priceUsd * item.quantity, 0,
  )
  const taxAmountUsd = subtotalUsd * taxRateDecimal
  return Math.round((subtotalUsd + taxAmountUsd + shippingCostUsd) * 100) / 100
}

// tests/cart/calculate-cart-total.test.ts
import { describe, it, expect } from 'vitest'

describe('calculateCartTotal', () => {
  it('should return subtotal plus tax plus shipping', () => {
    const items = [{ priceUsd: 20, quantity: 2 }]
    expect(calculateCartTotal(items, 0.08, 5.99)).toBe(49.19)
  })

  it('should return only shipping for empty cart', () => {
    expect(calculateCartTotal([], 0.08, 5.99)).toBe(5.99)
  })

  it('should handle zero tax rate', () => {
    expect(calculateCartTotal([{ priceUsd: 10, quantity: 1 }], 0, 0)).toBe(10)
  })
})

// Commit: feat(cart): add total calculation with tax and shipping
```

Named exports. Typed params. Variable names with units. Vitest with value assertions. Full JSDoc. Conventional commit.

Same prompt. Five skills. The difference between output you’d rewrite and output you’d ship.

What broke when building these?

Two mistakes that cost people the most time:

Building all five at once. When you add five skills simultaneously and output improves, you don’t know which skill made the difference. Add one, test it, confirm it works, then add the next. The sequential approach takes 15 extra minutes but saves hours of debugging when something acts up and you can’t identify the cause.

Making skills too long. The first version of each skill should be 15-25 lines, not 50 or 80. Short skills are easier to test, easier to debug, and followed more reliably by Claude. Skills over 40 lines produce less reliable output than shorter, focused ones.

How to verify each skill works: Same prompt, three runs. Once with the skill active. Once with it renamed to .md.bak. Once renamed back. If all three look the same, the skill is too vague.

What should you actually do?

- If you have zero skills: build Stop Slop first. It has the most visible impact. Then Testing Standards. Then the other three.

- If you have a few skills but output is inconsistent: check for conflicts. Run `grep -r "import" .claude/skills/` and replace “import” with the keyword for whichever rule is behaving inconsistently. Two files mentioning the same topic with different rules is your conflict.

- If all five work and you want more: Chapter 5 of the book covers the folder architecture for scaling from 5 to 50+ skills without conflicts.
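The conflict check can also be sketched in code. This version works on skill text already loaded into memory rather than reading the folder from disk. The function name `findRulesMentioning` and the sample skill contents (including the `legacy-testing.md` file) are hypothetical.

```typescript
// Maps a skill file name to its full markdown text. Sample contents below are invented.
type SkillFiles = Record<string, string>;

interface RuleMention {
  fileName: string;
  lineNumber: number;
  lineText: string;
}

// Lists every line that mentions the topic (case-insensitive),
// so rules from different files can be compared side by side.
function findRulesMentioning(skillFiles: SkillFiles, topic: string): RuleMention[] {
  const mentions: RuleMention[] = [];
  for (const [fileName, markdown] of Object.entries(skillFiles)) {
    markdown.split('\n').forEach((lineText, index) => {
      if (lineText.toLowerCase().includes(topic.toLowerCase())) {
        mentions.push({ fileName, lineNumber: index + 1, lineText: lineText.trim() });
      }
    });
  }
  return mentions;
}

const sampleSkills: SkillFiles = {
  'testing-standards.md': "# Testing Standards\n- Framework: Vitest (import from 'vitest', not 'jest')",
  'legacy-testing.md': "# Legacy Testing\n- Always import test helpers from '@jest/globals'",
};

const importMentions = findRulesMentioning(sampleSkills, 'import');
const filesInvolved = new Set(importMentions.map((mention) => mention.fileName));

console.log(importMentions);
console.log(filesInvolved.size > 1 ? 'possible conflict' : 'no overlap');
```

A topic that shows up in more than one file is not automatically a conflict, but it tells you which lines to read next to each other.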

Bottom line

- Five skills in a folder. That’s the difference between “Claude is a fancy autocomplete” and “Claude is the best junior developer I’ve ever worked with.”

- Stop Slop is the single highest-ROI skill. Build it first, test it, feel the difference.

- Every rule in your skill should be testable with a yes/no question. If it requires interpretation, Claude will interpret it differently than you expect.

Frequently Asked Questions

What are the best Claude Code skills to start with?

Stop Slop, Testing Standards, Documentation, Git Commits, and Code Review. These five cover the full development cycle and produce the biggest immediate improvement.

How many skills do I need before Claude feels different?

Three is the minimum viable setup: code style, stop slop, and testing. Most developers see an immediate improvement. Six to ten skills cover the full development cycle.

Do Claude Code skills work with Python or just TypeScript?

Skills are language-agnostic markdown. The structure is identical for any language. Swap Vitest for pytest, JSDoc for docstrings, and the same patterns apply.
