Set Up Claude Code Review on Every PR for $0/Month

>This covers automated PR review. Ship It with Claude Code goes deeper on CLI automation, MCP, and building production SaaS with Claude Code.

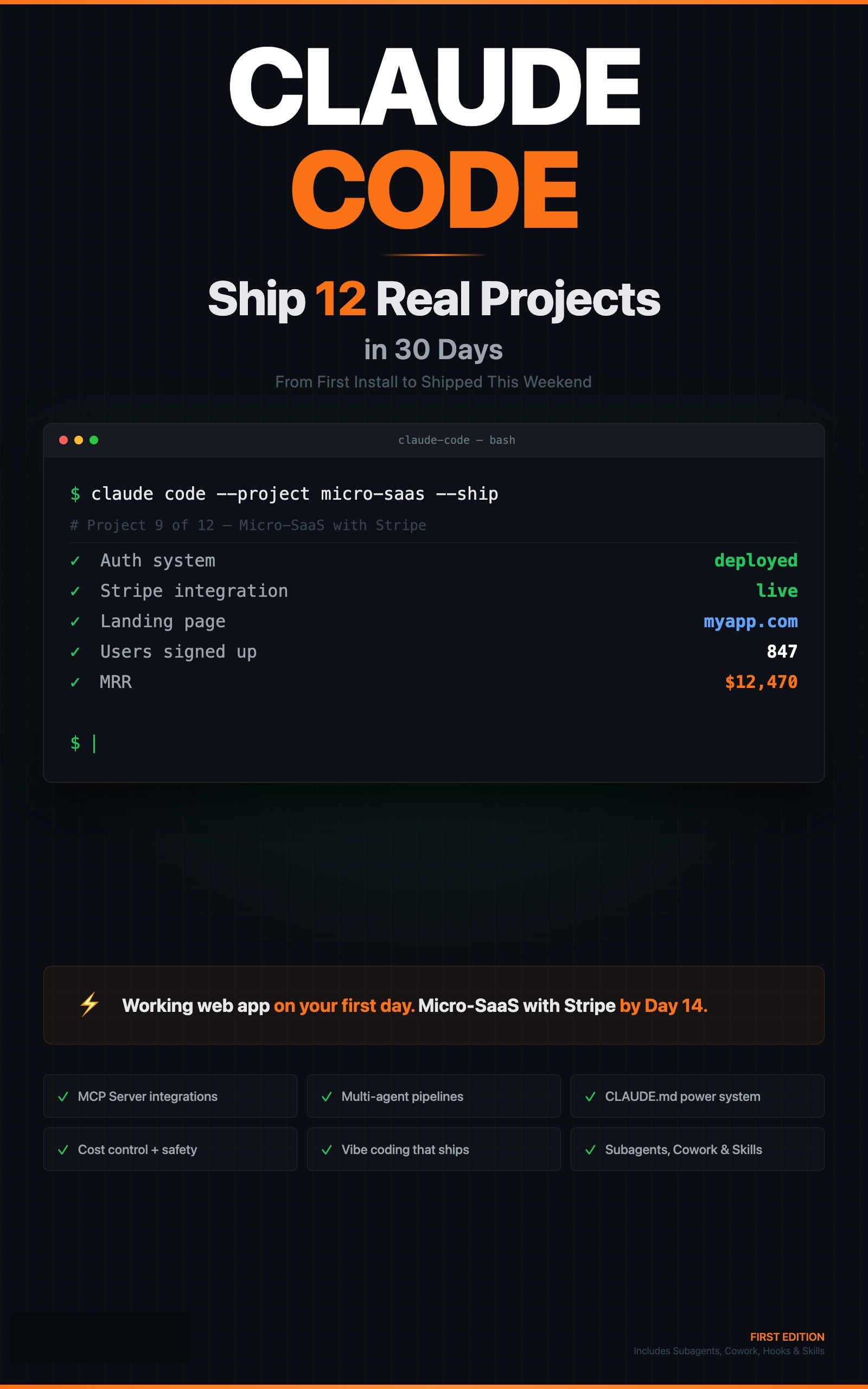


Summary:

- Set up automated Claude Code review on every pull request using GitHub Actions.

- Custom review-rules.md that encodes your team’s hard-won patterns, not generic best practices.

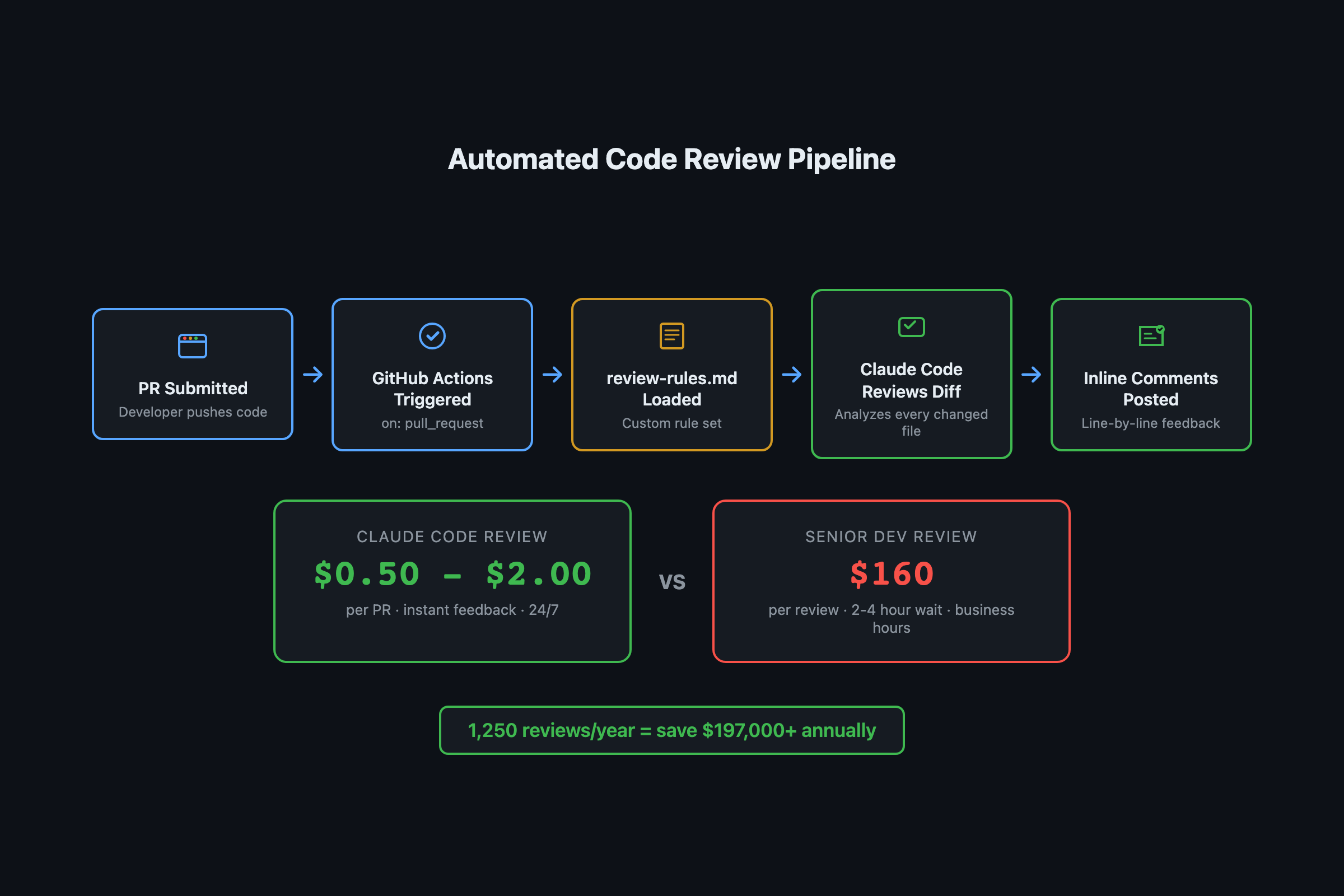

- Cost: $0.50-2.00 per PR vs $160 per review in senior developer salary.

- Copy-paste YAML workflow, review rules template, and project-specific rule examples.

A senior developer costs roughly $200K per year fully loaded. That developer reviews maybe 5 PRs per day across 250 working days: 1,250 reviews. That is $160 per review in salary costs alone, before you factor in context-switching time and the reviews that get rubber-stamped at 5 PM on a Friday.
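The arithmetic behind that figure is easy to reproduce. The sketch below uses only the numbers quoted in the paragraph and, like the paragraph, attributes the whole salary to reviewing, so treat it as a rough upper bound rather than a measured cost.

```python
# Back-of-envelope cost per human review, using the figures quoted above.
ANNUAL_COST_USD = 200_000       # fully loaded senior developer cost
REVIEWS_PER_DAY = 5
WORKING_DAYS_PER_YEAR = 250

reviews_per_year = REVIEWS_PER_DAY * WORKING_DAYS_PER_YEAR
cost_per_review = ANNUAL_COST_USD / reviews_per_year

print(reviews_per_year)   # 1250
print(cost_per_review)    # 160.0
```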

Claude Code Review doesn’t get tired. Doesn’t rubber-stamp. It applies the same rigor to the last PR of the day as the first one.

How do you set up the GitHub Action?

Three pieces: a workflow YAML, a review rules file, and an API key in GitHub Secrets.

Step 1: Create the workflow at .github/workflows/code-review.yml

    name: Claude Code Review

    on:
      pull_request:
        types: [opened, synchronize]

    jobs:
      review:
        runs-on: ubuntu-latest
        permissions:
          contents: read
          pull-requests: write   # needed to post review comments
        steps:
          - uses: actions/checkout@v4
            with:
              fetch-depth: 0     # full history instead of a shallow clone

          - name: Install Claude Code
            run: npm install -g @anthropic-ai/claude-code

          - name: Review PR
            env:
              ANTHROPIC_API_KEY: ${{ secrets.ANTHROPIC_API_KEY }}
              GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
            run: |
              claude code-review \
                --github-pr "${{ github.event.pull_request.number }}" \
                --repo "${{ github.repository }}"

That triggers on every PR open and every push to an existing PR. The fetch-depth: 0 gives Claude Code full git history so it can understand the diff in context. Check the code-review subcommand and its flags against claude --help for the version you install, since CLI options vary between releases.

Privacy note: Your code diffs are sent to the Anthropic API for review. Do not enable this on repositories containing secrets, credentials, or sensitive business logic that should not leave your infrastructure.

Step 2: Add your API key to GitHub Secrets

Go to your repo Settings > Secrets and variables > Actions > New repository secret. Name it ANTHROPIC_API_KEY, paste your key. The GITHUB_TOKEN is provided automatically by GitHub Actions.
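A missing secret usually surfaces as a confusing failure halfway through the job. One way to fail early is a small preflight check run before the review step. The sketch below is an illustration, not part of Claude Code: the helper name missing_vars is hypothetical, and the two variable names are the ones used in the workflow above.

```python
from typing import Mapping

# The two variables the workflow above passes to the review step.
REQUIRED_VARS = ("ANTHROPIC_API_KEY", "GITHUB_TOKEN")

def missing_vars(env: Mapping[str, str], required=REQUIRED_VARS) -> list[str]:
    """Return the required variable names that are unset or empty in env."""
    return [name for name in required if not env.get(name)]

# Demonstration with a fake environment: the token is present but empty.
example_env = {"ANTHROPIC_API_KEY": "sk-example", "GITHUB_TOKEN": ""}
print(missing_vars(example_env))  # ['GITHUB_TOKEN']
```

In a real job you would pass os.environ instead of example_env and exit with a non-zero status when the returned list is not empty.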

Step 3: Create .claude/review-rules.md

    # Code Review Rules

    ## Always Check

    - All new functions must have type annotations (Python) or TypeScript types
    - No raw SQL strings. Use parameterized queries only.
    - No secrets or API keys in code. Must use environment variables.
    - All new API endpoints must have corresponding tests
    - Error messages must not expose internal implementation details

    ## Style

    - Follow conventions in CLAUDE.md
    - Max function length: 40 lines
    - Max file length: 400 lines
    - Prefer early returns over nested if/else

    ## Performance

    - Flag any database query inside a loop (potential N+1)
    - Flag any synchronous I/O in async functions
    - Flag unbounded queries (missing LIMIT clause)

    ## Security

    - Flag any use of eval(), exec(), or dynamic code execution
    - Flag any file path construction from user input
    - Flag missing authentication checks on new endpoints
    - Flag missing rate limiting on public endpoints

What makes custom review rules better than defaults?

Generic rules catch generic problems. The review rules that actually prevent regressions are the ones specific to your project.

Look at your team’s past bugs. Every resolved bug is a potential review rule. Here is a real example from the book: a project had a test isolation bug because developers were making direct ORM calls in route handlers instead of going through the service layer. That became this rule:

    ## Project-Specific

    - Flag any direct ORM/database model imports in route handler files.
      All data access must go through the service layer (src/services/).
      Reference: memory.md 2026-02-15 test isolation bug.

If you are using Claude Code’s memory files (.claude/memory.md), your anti-patterns section is a goldmine of review rules. Every pattern you learned the hard way becomes an automated check that prevents the same mistake from recurring.
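Pulling the anti-patterns out of a memory file can be as simple as collecting the bullets under one heading. The sketch below assumes the file is markdown with a heading titled "Anti-patterns" and one bullet per pattern; that layout, the sample text, and the helper name extract_anti_patterns are assumptions for illustration, so adjust them to however your memory.md is organized.

```python
def extract_anti_patterns(memory_text: str, heading: str = "Anti-patterns") -> list[str]:
    """Collect bullet items found under the given markdown heading."""
    patterns: list[str] = []
    in_section = False
    for line in memory_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("#"):
            # Every heading either opens the section we want or closes it.
            title = stripped.lstrip("#").strip()
            in_section = title.lower() == heading.lower()
        elif in_section and stripped.startswith(("- ", "* ")):
            patterns.append(stripped[2:].strip())
    return patterns

# Hypothetical memory.md content, used only to exercise the function.
sample = """\
# Memory

## Anti-patterns
- direct ORM in routes
- missing LIMIT on queries

## Decisions
- use the service layer for data access
"""

print(extract_anti_patterns(sample))
# ['direct ORM in routes', 'missing LIMIT on queries']
```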

    def generate_review_rules(memory_file: str) -> list[str]:
        """
        Turn memory.md anti-patterns into review rules.

        The anti-patterns below are hard-coded examples; in practice,
        read them from memory_file (your .claude/memory.md).
        """
        rules = []

        # Example anti-patterns from memory.md, mapped to the rule each becomes
        anti_patterns = {
            "direct ORM in routes": "Flag ORM imports in src/api/routes/",
            "missing LIMIT on queries": "Flag SELECT without LIMIT clause",
            "console.log in production": "Flag console.log in non-test files",
            "bare except": "Flag except without specific exception type",
        }

        # Format each rule as a markdown bullet that records where it came from
        for pattern, rule in anti_patterns.items():
            rules.append(f"- {rule} (learned from: {pattern})")

        return rules

    print("\n".join(generate_review_rules(".claude/memory.md")))

    # Output:
    # - Flag ORM imports in src/api/routes/ (learned from: direct ORM in routes)
    # - Flag SELECT without LIMIT clause (learned from: missing LIMIT on queries)
    # - Flag console.log in non-test files (learned from: console.log in production)
    # - Flag except without specific exception type (learned from: bare except)

What broke when I first set this up?

The first version reviewed every file in the repository, not just the changed files. On a repo with 200+ files, the review took 8 minutes and cost $4.50 per PR. The fix was fetch-depth: 0, which makes the base branch history available in the checkout, combined with Claude Code’s built-in diff detection, which limits the review to the actual changes. Review time dropped to under 2 minutes and cost to $0.50-1.50 per PR.
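To see what "only the changed files" means in git terms, here is a minimal sketch that lists the paths a PR touches relative to its base branch. It is not how Claude Code detects diffs internally; the function names and the origin/main default are assumptions for illustration.

```python
import subprocess

def diff_command(base_ref: str) -> list[str]:
    """Build the git command that lists files changed since the merge base."""
    # The three-dot form compares HEAD with its merge base against base_ref,
    # which only works when that history is present in the checkout.
    return ["git", "diff", "--name-only", f"{base_ref}...HEAD"]

def parse_changed_files(diff_output: str) -> list[str]:
    """Turn `git diff --name-only` output into a clean list of paths."""
    return [line.strip() for line in diff_output.splitlines() if line.strip()]

def changed_files(base_ref: str = "origin/main") -> list[str]:
    """Run git in the current repository and return the changed paths."""
    result = subprocess.run(
        diff_command(base_ref), capture_output=True, text=True, check=True
    )
    return parse_changed_files(result.stdout)

# Parsing demonstrated on canned output, so no repository is needed here.
print(parse_changed_files("src/api/routes/users.py\n\nsrc/services/users.py\n"))
# ['src/api/routes/users.py', 'src/services/users.py']
```

With a shallow clone the merge base is usually missing and the git command fails, which is one practical reason the workflow asks for full history.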

How do you reduce false positives?

Three patterns trigger the most noise: generated files (Prisma client, compiled output), test fixtures with intentional bad patterns, and config files with long lines. Add exclusions to your rules:

    exclude_patterns:
      - "*.generated.*"
      - "**/fixtures/**"
      - "prisma/client/**"

The second gotcha: Claude Code posted review comments on generated files (lock files, build artifacts). Add this to your review rules:

    ## Ignore

    - Skip files matching: *.lock, *.min.js, dist/*, build/*, node_modules/*
    - Skip auto-generated migration files unless they contain raw SQL

How does the cost compare to human reviewers?

From GitHub’s Octoverse data, the median time to merge a PR increased 16% in 2024, partly because review bottlenecks slow teams down as they scale.

Here is the math. At $200K/year fully loaded for a senior developer reviewing 5 PRs per day:

| Metric | Human Reviewer | Claude Code Review |
|---|---|---|
| Cost per review | ~$160 | $0.50-2.00 |
| Reviews per day | 5 | Unlimited |
| Friday 5 PM quality | Drops | Consistent |
| Convention enforcement | Inconsistent | Exact match to rules |
| Catches N+1 queries | Sometimes | Every time (if in rules) |
| Architecture judgment | Strong | Weak |
| Mentorship value | High | None |

Look, the point isn’t replacing human reviewers. It’s letting Claude Code handle the mechanical checks (convention enforcement, security patterns, test coverage verification) so human reviewers can focus on architecture decisions and mentoring junior developers.

Cost control: Cap the diff size sent to Claude. PRs touching 50+ files or 2,000+ lines should get a summary review, not line-by-line. Add --max-tokens 4096 to limit spending on monster PRs.
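The size thresholds above translate into a small decision function that a workflow step could call before invoking the review. This is a sketch under the stated thresholds; the function name review_mode and the two mode labels are hypothetical, not Claude Code options.

```python
# Thresholds taken from the paragraph above: 50+ files or 2,000+ lines.
MAX_FILES = 50
MAX_LINES = 2_000

def review_mode(files_changed: int, lines_changed: int) -> str:
    """Return "summary" for oversized PRs, otherwise "line-by-line"."""
    if files_changed >= MAX_FILES or lines_changed >= MAX_LINES:
        return "summary"
    return "line-by-line"

print(review_mode(12, 340))    # line-by-line
print(review_mode(64, 900))    # summary
print(review_mode(8, 2500))    # summary
```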

What should you actually do?

- If you have a solo project: set up the GitHub Action with the generic review rules above. Total setup time is under 10 minutes.

- If you have a team: start with generic rules, then spend 30 minutes converting your .claude/memory.md anti-patterns into project-specific review rules. The project-specific rules are where the real value lives.

- If you are worried about cost: a team pushing 10 PRs per day at $1 average per review spends about $200/month over roughly 20 working days. One caught bug that would have taken 4 hours to debug pays for the entire month.
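The cost claim in the last item can be checked with a few lines of arithmetic. The PR volume, average review cost, and 4-hour debugging figure come from the text; the 20 working days per month and the 8-hour day used to derive an hourly rate are assumptions added here.

```python
PRS_PER_DAY = 10
AVG_COST_PER_REVIEW_USD = 1.00
WORKING_DAYS_PER_MONTH = 20         # assumption: about 20 working days

monthly_review_cost = PRS_PER_DAY * AVG_COST_PER_REVIEW_USD * WORKING_DAYS_PER_MONTH

# Assumption: $200K/year spread over 250 days of 8 hours each.
HOURLY_RATE_USD = 200_000 / (250 * 8)
debugging_cost = 4 * HOURLY_RATE_USD  # one bug that takes 4 hours to debug

print(monthly_review_cost)  # 200.0
print(HOURLY_RATE_USD)      # 100.0
print(debugging_cost)       # 400.0
```

Under those assumptions, a single avoided 4-hour debugging session costs about twice the monthly review bill.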

Bottom line

- Automated code review is not about removing humans. It is about making sure the boring checks happen every single time, so humans focus on decisions that need judgment.

- Project-specific review rules derived from your memory files and past bugs are 10x more valuable than generic “check for console.log” rules.

- At $0.50-2.00 per PR, the ROI is obvious. One prevented production bug pays for months of automated review.

Frequently Asked Questions

How much does Claude Code Review cost per pull request?

Roughly $0.50-2.00 per PR depending on diff size. Compare that to $160 per review in senior developer salary costs (at $200K/year, 1,250 reviews/year).

Can Claude Code Review auto-approve pull requests?

It can post inline comments and request changes, but you should never let it auto-merge. Use it for pattern matching and convention enforcement, and keep humans on architecture and business logic decisions.

Does Claude Code Review work with private repositories?

Yes. The GitHub Action runs inside your Actions runner with your repository checkout, but the diffs under review are sent to the Anthropic API, so the privacy note above applies. You need an Anthropic API key stored as a GitHub Secret.
