How to Analyze Messy Spreadsheets with Claude

>This covers data analysis. Claude Cowork - Automate Your Job This Weekend adds email triage, recurring tasks, complete workflows, and three paths to income with your Cowork setup.


Summary:

- Turn a messy CSV into a clean dataset with a documented cleaning log.

- Generate a professional analysis report a non-technical person can read.

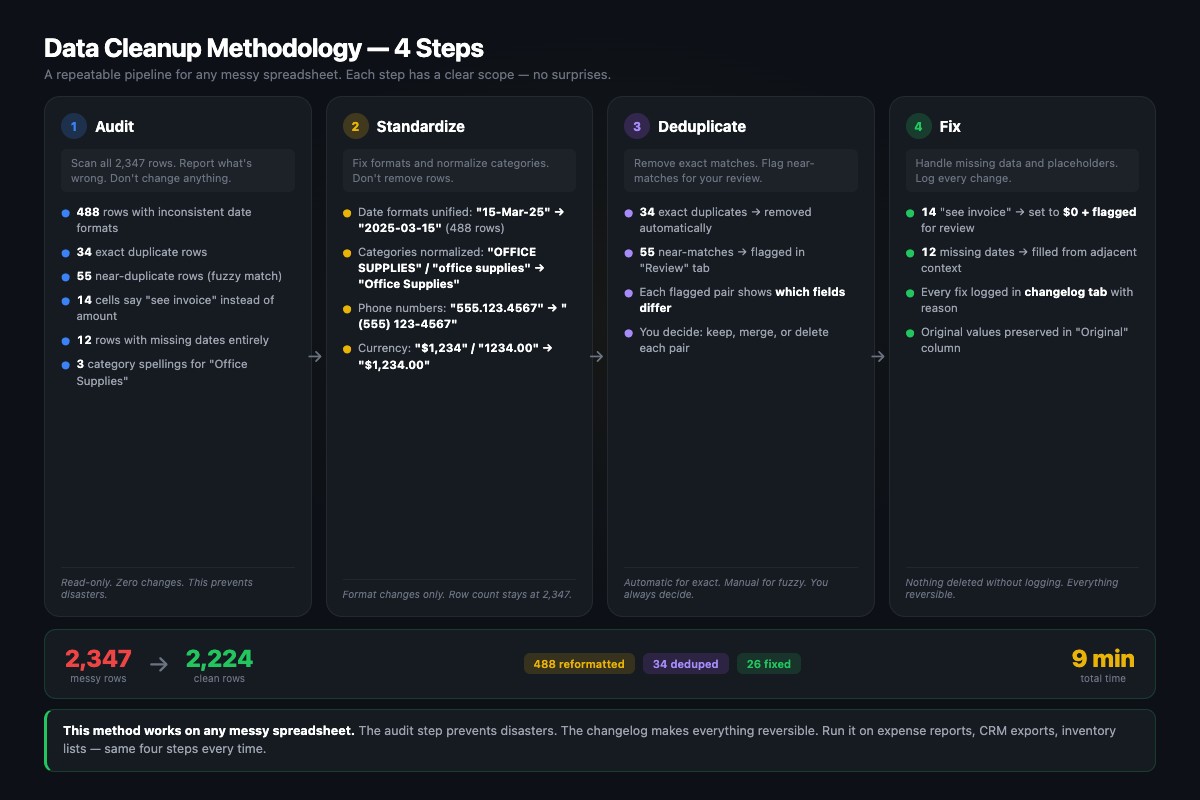

- Copy-paste prompts for the three-step pipeline: audit, clean, report.

- Real example: 2,347 rows of chaos cleaned in 9 minutes.

A client sent me a CSV with 2,347 rows of transaction data. Mixed date formats in the same column (MM/DD/YYYY next to DD-Mon-YY). 89 duplicate entries. 14 cells in the “Amount” column that said “see invoice” instead of containing a number. I used to spend 3-4 hours cleaning spreadsheets like this manually. Claude did it in 9 minutes.

What’s the three-step pipeline?

It follows the audit-first pattern that heads off most data disasters: observe, then review, then act.

| Step | What Claude does | Time | What you do |
|---|---|---|---|
| 1. AUDIT | Counts rows, finds issues, reports | 2-3 min | Review the audit, make decisions |
| 2. CLEAN | Applies YOUR rules, logs every change | 5-7 min | Nothing (review log after) |
| 3. REPORT | Generates plain-English analysis | 3-4 min | Read and send to client/boss |

The audit step costs 2-3 minutes. It saves you from the equivalent of renaming your tax documents “Miscellaneous_Item_47” (yes, that happened when I skipped the audit step).

How do you run the data quality audit?

Save your CSV to your Desktop (or any folder in Claude’s File Access list). Then paste this prompt into Cowork:

Read the file test-data.csv on my Desktop. Audit the data

quality. Report:

- Total rows and columns with names

- Data type of each column (dates, numbers, text)

- Any issues: duplicates, missing values, inconsistent

formats, outliers, invalid entries

Do not fix anything yet. Just report what you find.

Save the audit to data-audit.txt on my Desktop.

For 2,347 rows, this takes 2-3 minutes. The audit comes back looking like this:

DATA QUALITY AUDIT - test-data.csv

Rows: 2,347

Columns: 7 (Date, Vendor, Category, Amount, Invoice #,

Payment Method, Notes)

ISSUES FOUND:

1. DUPLICATES: 89 (34 exact, 55 near-duplicates)

2. DATE FORMAT: 3 incompatible formats in same column

3. MISSING DATA: 14 "see invoice" in Amount, 12 empty dates

4. CASE INCONSISTENCY: "Office Supplies" / "Office supplies"

/ "OFFICE SUPPLIES" treated as different categories

5. OUTLIERS: 3 transactions over $50,000 (may be legitimate)

Read the audit. Every item is a decision point. Should dates be MM/DD/YYYY or YYYY-MM-DD? Delete exact duplicates or keep for manual review? Set “see invoice” entries to $0 or flag them? You decide. Claude executes.
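If you want to sanity-check an audit like this yourself, the same counts can be approximated in a few lines of Python. This is a minimal sketch, not what Claude runs internally. It assumes the column names Date, Category, and Amount from the audit above; the `DATE_FORMATS` table, the helper names, and the four-row sample are made up for illustration.

```python
import csv
import io
from collections import Counter
from datetime import datetime

# Assumed date formats to probe; extend this to match your own data.
DATE_FORMATS = {
    "MM/DD/YYYY": "%m/%d/%Y",
    "DD-Mon-YY": "%d-%b-%y",
    "YYYY-MM-DD": "%Y-%m-%d",
}


def detect_date_format(value):
    """Return the name of the first format that parses value, else None."""
    for name, fmt in DATE_FORMATS.items():
        try:
            datetime.strptime(value, fmt)
            return name
        except ValueError:
            continue
    return None


def is_number(value):
    """True if value parses as a number once '$' and ',' are stripped."""
    try:
        float(value.replace("$", "").replace(",", ""))
        return True
    except ValueError:
        return False


def audit_rows(rows):
    """Count issues without modifying anything (observe only)."""
    rows = list(rows)
    row_counts = Counter(tuple(sorted(r.items())) for r in rows)
    variants = {}
    for r in rows:
        variants.setdefault(r["Category"].lower(), set()).add(r["Category"])
    dated = [r["Date"].strip() for r in rows if r["Date"].strip()]
    return {
        "rows": len(rows),
        "exact_duplicates": sum(n - 1 for n in row_counts.values()),
        "date_formats": Counter(detect_date_format(d) for d in dated),
        "empty_dates": len(rows) - len(dated),
        "non_numeric_amounts": [r["Amount"] for r in rows if not is_number(r["Amount"])],
        "case_variants": {k: sorted(v) for k, v in variants.items() if len(v) > 1},
    }


# Hypothetical sample standing in for test-data.csv.
SAMPLE = """Date,Vendor,Category,Amount
03/04/2025,Staples,Office Supplies,47.23
03/04/2025,Staples,Office Supplies,47.23
15-Mar-25,Acme,office supplies,see invoice
,Acme,Travel,120.00
"""

report = audit_rows(csv.DictReader(io.StringIO(SAMPLE)))
for key, value in report.items():
    print(f"{key}: {value}")
```

Like the audit prompt, the sketch only counts and reports. Nothing is fixed until you have decided the rules.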

How do you clean the data?

After reviewing the audit, send the cleanup prompt with your specific decisions:

Using the audit results, clean test-data.csv. Apply these rules:

1) Standardize all dates to YYYY-MM-DD

2) Remove exact duplicates, mark near-duplicates with "REVIEW"

in Notes column

3) Replace "see invoice" in Amount with 0, add "MISSING AMOUNT"

to Notes

4) Standardize category names to Title Case

5) Flag rows with empty dates: add "MISSING DATE" to Notes

Save cleaned data as test-data-cleaned.csv on my Desktop.

Save a cleaning log as cleaning-log.txt documenting every

change with original value and new value.

The cleaning log is the critical piece. It’s your paper trail. If a client asks “why did this row change?” you point to the log. If Claude made a mistake, you undo the specific change.

The log looks like this:

CLEANING LOG - test-data.csv

Processed: 2,347 rows → 2,224 rows after cleanup

Date Standardization: 488 rows updated

Row 14: "15-Mar-25" → "2025-03-15"

Row 27: "03/04/2025" → "2025-03-04" (interpreted as MM/DD/YYYY)

Exact Duplicates Removed: 34 rows

Rows 156-157: Identical (Staples, $47.23, 2025-01-14)

Near-Duplicates Flagged: 55 rows marked "REVIEW"

Rows 201, 203: Same amount ($312.50), Vendor differs

Amount Text Entries: 14 rows set to $0 with "MISSING AMOUNT"

When my client’s finance team received this log alongside the cleaned data, they spent zero time questioning the changes. Every modification documented. That’s what gets you repeat business.
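To make the "original value and new value" idea concrete, here is a rough Python sketch of a change log for two of the rules above (Title Case categories and "see invoice" amounts). The function names and the tuple layout of each log entry are my own assumptions for illustration, not Claude's actual log format.

```python
def clean_with_log(rows):
    """Apply rules 3 and 4 from the prompt; log each change as (row, column, old, new)."""
    cleaned, log = [], []
    for number, row in enumerate(rows, start=1):
        new_row = dict(row)  # copy, so the original data is never modified
        # Rule 4: standardize category names to Title Case.
        title = row["Category"].title()
        if title != row["Category"]:
            new_row["Category"] = title
            log.append((number, "Category", row["Category"], title))
        # Rule 3: replace "see invoice" with 0 and note it.
        if row["Amount"].strip().lower() == "see invoice":
            note = (row["Notes"] + " MISSING AMOUNT").strip()
            new_row["Amount"] = "0"
            new_row["Notes"] = note
            log.append((number, "Amount", row["Amount"], "0"))
            log.append((number, "Notes", row["Notes"], note))
        cleaned.append(new_row)
    return cleaned, log


def undo(cleaned, entry):
    """Revert one logged change. Assumes no rows were removed, so row numbers line up."""
    number, column, old, _new = entry
    cleaned[number - 1][column] = old


# Hypothetical rows for illustration.
rows = [
    {"Category": "OFFICE SUPPLIES", "Amount": "see invoice", "Notes": ""},
    {"Category": "Travel", "Amount": "120.00", "Notes": ""},
]
cleaned, log = clean_with_log(rows)
for number, column, old, new in log:
    print(f'Row {number}: {column} "{old}" -> "{new}"')

undo(cleaned, log[0])  # put the original category back on row 1
```

Because every entry keeps the old value, undoing one change does not mean redoing the whole cleanup.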

How do you generate the analysis report?

With the cleaned file saved, send the report prompt:

Read test-data-cleaned.csv. Generate analysis-report.md on

my Desktop with:

1) Executive Summary (3-4 sentences, no jargon)

2) Key Metrics (total transactions, total amount, average

transaction, largest, most common category/vendor)

3) Trends (monthly spending, top 5 categories by total,

top 5 vendors by total)

4) Anomalies (unusual patterns, flagged items, outliers)

5) Recommendations (3-5 specific actions)

Write in plain English. A business owner with no data

background should understand every sentence.

Here’s what the output looks like. This is an actual report Claude generated from the spreadsheet from hell:

ANALYSIS REPORT - Client Transaction Data

EXECUTIVE SUMMARY

2,224 transactions totaling $847,231 across 42 vendors over

8 months. Office Supplies is the largest category at $198K

(23%). Three transactions over $50K flagged for review.

89 duplicates removed during cleaning, saving $12,400 in

potential double-payments.

KEY METRICS

- Total transactions: 2,224 (after removing 123 bad rows)

- Total amount: $847,231.47

- Average transaction: $380.94

- Largest: $67,200 (Vendor: DataFlow Inc, flagged as outlier)

- Most common category: Office Supplies (487 transactions)

- Most common vendor: Staples (156 transactions)

RECOMMENDATIONS

1. Investigate the 3 transactions over $50K — they represent

8% of total spend across just 0.1% of transactions.

2. Consolidate Office Supplies vendors — 12 different vendors

for the same category suggests no preferred supplier deal.

3. Address the 14 "see invoice" entries — $0 placeholders

mask the true spending total.

That report would take a data analyst 2-3 hours. Claude produced it in under 4 minutes. My client’s finance team used it in their board presentation. They didn’t know (or care) how it was made.
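If you want to double-check the headline numbers before a report like this goes to a client, a few lines of Python can recompute them from the cleaned file. This is a minimal sketch under assumptions: it uses the Category and Amount column names from the audit, expects Amount to already be numeric, and the `key_metrics` helper and sample rows are hypothetical.

```python
from collections import Counter, defaultdict
from decimal import Decimal


def key_metrics(rows):
    """Recompute headline figures from cleaned rows (Amount must already be numeric)."""
    amounts = [Decimal(r["Amount"]) for r in rows]
    if not amounts:
        return {}
    total = sum(amounts, Decimal("0"))
    spend = defaultdict(Decimal)  # Decimal() is 0, so this sums per category
    for r, amount in zip(rows, amounts):
        spend[r["Category"]] += amount
    return {
        "transactions": len(amounts),
        "total": total,
        "average": (total / len(amounts)).quantize(Decimal("0.01")),
        "largest": max(amounts),
        "most_common_category": Counter(r["Category"] for r in rows).most_common(1)[0][0],
        "top_category_by_total": max(spend, key=spend.get),
    }


# Hypothetical cleaned rows for illustration.
sample = [
    {"Category": "Office Supplies", "Amount": "100.00"},
    {"Category": "Travel", "Amount": "50.00"},
    {"Category": "Office Supplies", "Amount": "25.50"},
]
metrics = key_metrics(sample)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

`Decimal` is used instead of `float` so totals and averages round the way a finance team expects.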

From Anthropic’s Cowork documentation, these are the official data capabilities:

| What Cowork can do with your data | What it can’t do |
|---|---|
| Statistical analysis: outlier detection, cross-tabulation, time-series | Predictive modeling or regression |
| Data visualization: generate charts from your data | Interactive dashboards |
| Data transformation: clean, transform, process datasets | Real-time streaming data |
| Excel with working formulas, VLOOKUP, conditional formatting | Datasets over ~10,000 rows efficiently |
| Multiple data source merging (bank CSV + invoice spreadsheet) | Direct database connections |

The sweet spot is messy mid-size datasets (50-10,000 rows) that small businesses actually encounter. For 50,000+ rows, use a proper database tool. For under 50 rows, just paste into Claude Chat.

What broke (and the fixes)

Date ambiguity. Is “03/04/2025” March 4th or April 3rd? Claude has to guess unless you tell it. For US data: “Interpret ambiguous dates as MM/DD/YYYY.” For international: “Interpret as DD/MM/YYYY.” Getting this wrong silently corrupts your analysis.
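The effect is easy to see in Python: the same string parses to two different dates depending on which convention you state. A minimal sketch; the `to_iso` and `is_ambiguous` helpers are hypothetical names for illustration.

```python
from datetime import datetime


def to_iso(value, day_first=False):
    """Parse a slash-separated date under an explicit convention and return YYYY-MM-DD."""
    fmt = "%d/%m/%Y" if day_first else "%m/%d/%Y"
    return datetime.strptime(value, fmt).date().isoformat()


def is_ambiguous(value):
    """True when both readings are valid dates and they differ."""
    first, second, _year = value.split("/")
    return int(first) <= 12 and int(second) <= 12 and int(first) != int(second)


us = to_iso("03/04/2025")                    # "2025-03-04": March 4th
intl = to_iso("03/04/2025", day_first=True)  # "2025-04-03": April 3rd
print(us, intl, is_ambiguous("03/04/2025"), is_ambiguous("25/04/2025"))
```

Neither reading raises an error, which is why the wrong choice goes unnoticed. A date like "25/04/2025" can only be day-first, so it is not ambiguous.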

Currency formatting chaos. Some cells have “$1,247.00”, others have “1247”. Add to your prompt: “Strip currency symbols and commas from Amount. Convert all to plain numbers with two decimal places.”
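For reference, that rule amounts to something like the following Python sketch. It assumes US-style formatting (comma as thousands separator, period as decimal point), and `normalize_amount` is a hypothetical helper name.

```python
from decimal import Decimal, InvalidOperation


def normalize_amount(raw):
    """Return the amount as a plain two-decimal string, or None if it is not a number."""
    stripped = raw.strip().replace("$", "").replace(",", "")
    try:
        value = Decimal(stripped)
    except InvalidOperation:
        return None
    if not value.is_finite():  # reject "NaN" / "Infinity", which Decimal accepts
        return None
    return f"{value:.2f}"


for raw in ["$1,247.00", "1247", "see invoice"]:
    print(repr(raw), "->", normalize_amount(raw))
```

Returning `None` for text like "see invoice" keeps the decision about placeholders separate from the formatting fix.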

Skipping the audit. I told Claude to “organize my Google Drive” without auditing first. It renamed my tax return “Miscellaneous_Item_47.” The audit step costs 2-3 minutes and prevents 20-minute undos.

What types of data work beyond transactions?

The same three-step pipeline works on any tabular data. Add these lines to your report prompt depending on your data type:

| Data type | Add to your report prompt | Example result |
|---|---|---|
| Survey results | “Categorize free-text comments into Praise, Feature Request, Complaint, Neutral. Identify top 3 complaint themes with example quotes.” | Overall satisfaction 3.8/5. Top complaint: “Shipping takes too long” (34 of 200 responses) |
| Sales data | “Break down by sales rep, product line, deal size, close rate. Show which rep closed the most deals over $10,000.” | Rep A: 12 deals >$10K, highest close rate at 34% |
| Expense reports | “Flag meals over $50, single transactions over $500, and entries where category doesn’t match description.” | Found 8 miscategorized entries, 3 over policy limit |
| Inventory | “Calculate total value (qty x unit price). Flag items below reorder threshold of 10. Identify 5 slowest-moving items.” | Total value: $847K. 14 items below reorder. Widget-X hasn’t moved in 90 days. |

A small business owner I know had 200 customer feedback responses sitting in a CSV for two months. The survey analysis found the top complaint wasn’t pricing (his assumption). It was shipping speed (34 of 200 mentions). He adjusted his supplier. Satisfaction went up 0.6 points next quarter.
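The rule-based additions in the table above are simple enough to spot-check by hand. As one example, the inventory rules (qty x unit price, reorder threshold of 10) look roughly like this in Python. The field names `name`, `qty`, and `unit_price` and the sample items are assumptions for illustration.

```python
from decimal import Decimal

REORDER_THRESHOLD = 10  # threshold quoted in the inventory prompt


def inventory_summary(items):
    """Return (total value, names of items below the reorder threshold)."""
    total = sum(
        (Decimal(i["unit_price"]) * int(i["qty"]) for i in items),
        Decimal("0"),
    )
    low = [i["name"] for i in items if int(i["qty"]) < REORDER_THRESHOLD]
    return total, low


# Hypothetical inventory rows for illustration.
items = [
    {"name": "Widget-X", "qty": "4", "unit_price": "12.50"},
    {"name": "Gadget-Y", "qty": "40", "unit_price": "3.00"},
]
total, low = inventory_summary(items)
print(f"Total value: ${total}, below reorder: {low}")
```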

What should you actually do?

- If you have a messy spreadsheet right now: run the three-step pipeline today. Start with the audit prompt, decide your cleaning rules, then generate the report.

- If you do this monthly: save the prompts as templates and reuse them. Same format, different data file each month.

- If you’re doing this for clients: the audit + cleaning log + report is a package consultants charge $150-400 to produce. Your time with Claude: about 20 minutes of supervision.

- If your data is over 5,000 rows: export a smaller sample first, run the pipeline, verify quality, then run on the full dataset.
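For the sample-first approach in the last item, one way to export a smaller file is a short Python script. This is a sketch under assumptions: the helper names are hypothetical, and it expects a CSV with a single header row.

```python
import csv
import random


def sample_rows(rows, n, seed=0):
    """Pick up to n rows at random, keeping their original order."""
    rng = random.Random(seed)  # fixed seed so the same sample can be reproduced
    picked = sorted(rng.sample(range(len(rows)), min(n, len(rows))))
    return [rows[i] for i in picked]


def sample_csv(src, dst, n, seed=0):
    """Copy the header plus a random sample of n data rows from src to dst."""
    with open(src, newline="") as f:
        reader = csv.reader(f)
        header = next(reader)
        rows = list(reader)
    with open(dst, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(header)
        writer.writerows(sample_rows(rows, n, seed))
```

Calling `sample_csv("test-data.csv", "test-data-sample.csv", 500)` would write a 500-row sample (the output file name is just an example). A random sample is more likely than the first 500 rows to include the odd formats that show up later in the file.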

Bottom line

- The audit step is non-negotiable. Skip it and Claude makes its own decisions about your data. Those decisions are sometimes wrong and always undocumented. Two minutes of auditing saves hours of fixing.

- The cleaning log separates professional data work from amateur hour. Document every change. Clients trust documented changes. They question undocumented ones.

- This pipeline works on any tabular data: transactions, surveys, sales, expenses, inventory. The prompts change. The three-step pattern doesn’t.

Frequently Asked Questions

How big a spreadsheet can Claude handle?

Claude works well with up to 5,000-10,000 rows. Beyond that, processing time becomes impractical. For 50,000+ row datasets, use a real database tool.

Can Claude make charts from my spreadsheet data?

Claude can describe what charts should look like and format chart-ready data, but it can't produce polished bar charts directly. Ask it to create a Google Sheet with formatted data, then use the built-in chart tools.

Does Claude need to see my actual data?

Yes. If Claude reads the file directly (CSV on your Desktop), the data is processed on Anthropic's servers. For sensitive financial or medical data, review Chapter 11 of the book before automating.
