The 30-Day Claude Code Skills Roadmap: From Zero to 50+ Skills

>This covers the 30-day plan. Claude Code Skills includes every skill type referenced here: stop slop, testing, hooks, MCP, self-improving loops, and the consulting/sales playbook.

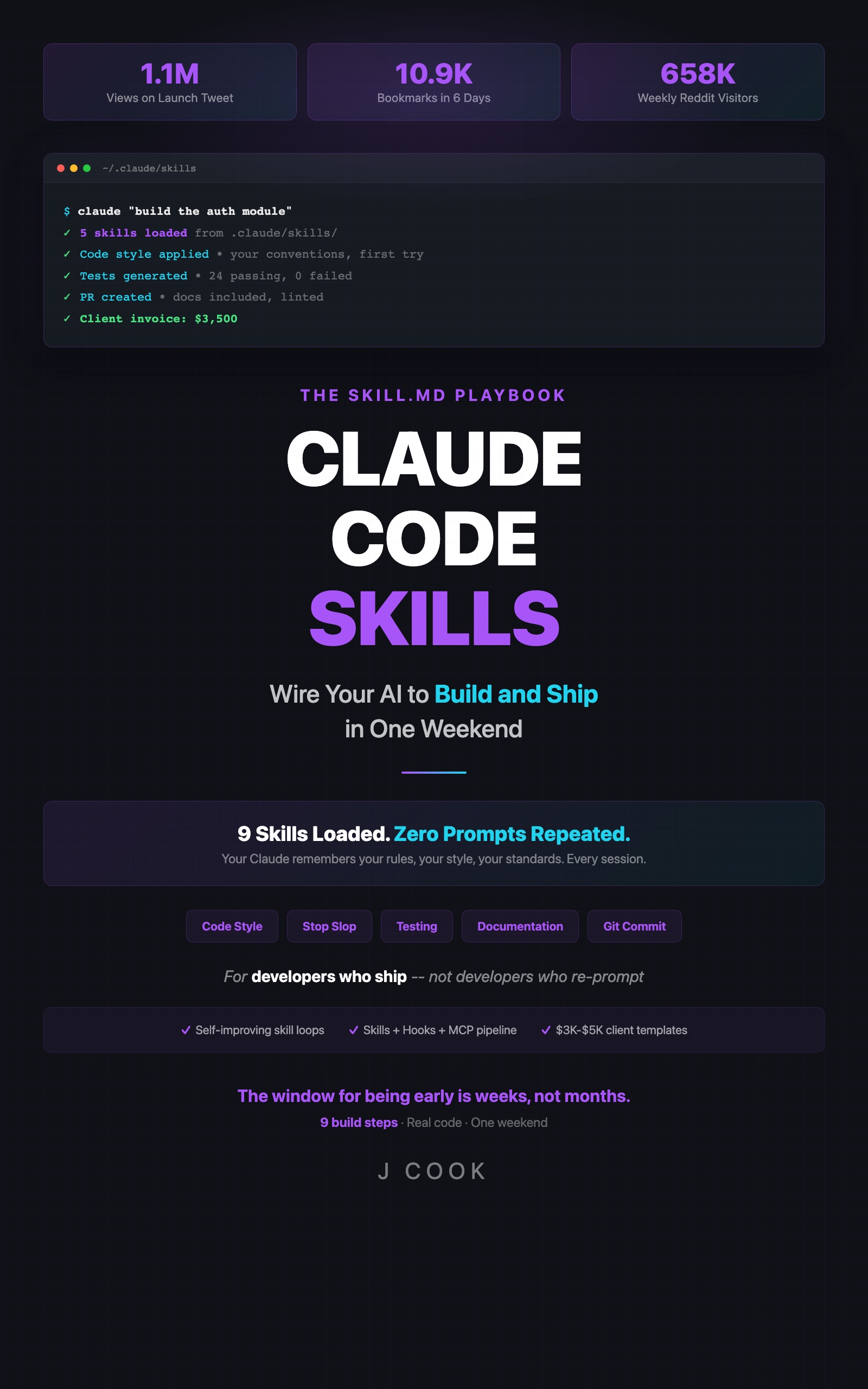

Claude Code Skills

The SKILL.md Playbook — Wire Your AI to Build and Ship in One Weekend

Summary:

- Day 0 baseline: measure your current correction time and skill count.

- Week-by-week targets: 10-12 skills (Week 1), 20+ (Week 2), 30+ (Week 3), 50+ (Week 4).

- Weekly metrics that prove the system is working.

- Post-30 maintenance: about 7 minutes/week, plus a 15-minute monthly review, to keep skills sharp.

- 60-day and 90-day goals for trimming, sharing, and monetizing.

On Day 0, you write down three numbers. By Day 30, those numbers will tell you exactly how much your skills system is worth.

This is the plan. Four weeks. Each week builds on the previous one. By the end, you’ll have 50+ skills, automated hooks, and a system that keeps improving with only a few minutes of review each week.

What do you measure on Day 0?

Three baseline numbers. Write them down before you build anything.

# Day 0 Baseline / [your date]

1. Current skill count: ___

2. Minutes per day correcting Claude: ___

3. Times per week copy-pasting the same instructions: ___

Most developers start with 0 skills, 15-30 minutes/day correcting Claude, and 5-10 copy-paste sessions per week. These numbers are your “before.” Everything you build over 30 days gets measured against them.

What do you build in Week 1? (target: 10-12 skills)

Day 1: Audit (30 min)

├── Write down every correction you made to Claude today

├── Group corrections by category (style, testing, docs, naming)

├── Rank by frequency: most repeated correction = first skill

└── Goal: prioritized list of 6-8 skill topics

Days 2-3: Build 4-6 skills (2 hours)

├── Stop Slop (naming, filler, placeholder data)

├── Code Style (imports, exports, file naming)

├── Testing Standards (framework, assertions, edge cases)

├── Documentation (JSDoc, comment rules)

├── Git Commits (conventional format, scoping)

├── Error Handling (patterns, logging, recovery)

└── Test each skill: before/after comparison on same prompt

Day 4: Reorganize (30 min)

├── Create numbered folder structure (01-style, 02-testing, etc.)

├── Move skills into correct folders

├── Grep for conflicts across files

└── Delete or merge duplicates

Days 5-6: First hook (1 hour)

├── Pick the highest-value event (pre-commit is the default choice)

├── Write hook config: event, pattern, action, blocking

├── Test with 3 commits: verify it catches what you expect

└── Adjust pattern scope if it reviews too many files

Day 7: Review and delete (30 min)

├── Re-run Day 1 prompts with all skills active

├── Compare output to Day 1 baseline

├── Delete any skill that didn't fire or didn't help

└── Update Day 0 metrics: correction time should be down 30-40%

By the end of Week 1: 10-12 skills, one working hook, and a measurable reduction in correction time.
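The Day 1 audit (group corrections by category, then rank by frequency) can be sketched in a few lines. This is a minimal illustration, not a prescribed tool: the `corrections` log and the `rank_skill_topics` helper are hypothetical, and the categories are borrowed from the audit step.

```python
from collections import Counter

# Hypothetical Day 1 log: one (category, note) pair per correction made to Claude.
corrections = [
    ("style", "used default export"),
    ("testing", "missing edge case"),
    ("style", "wrong file naming"),
    ("naming", "placeholder variable names"),
    ("style", "unsorted imports"),
    ("testing", "weak assertions"),
]

def rank_skill_topics(log, limit=8):
    """Return (category, count) pairs, most frequently corrected first."""
    counts = Counter(category for category, _ in log)
    return counts.most_common(limit)

ranked = rank_skill_topics(corrections)
for category, count in ranked:
    print(f"{category}: {count} corrections")
```

The category at the top of the list is the first skill to write; the `limit` of 8 mirrors the upper end of the 6-8 topic goal.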

What changes in Week 2? (target: 20+ skills)

Days 8-9: Project-specific skills

├── Skills for your actual codebase patterns (not generic rules)

├── API conventions, database patterns, deployment config

└── These are the skills that make Claude feel like a team member

Day 10: Memory skill

├── Teach Claude what happened in previous sessions

├── Project decisions, architecture choices, known bugs

└── This bridges the "new session, no context" gap

Days 11-12: Stack-specific skills

├── Framework-specific patterns (Next.js, FastAPI, Rails, etc.)

├── Library conventions (Prisma, tRPC, SQLAlchemy, etc.)

└── One skill per major dependency in your stack

Day 13: Second hook

├── Auto-test on file save (non-blocking)

├── Or doc-sync on commit (blocking)

└── Two hooks cover the two most common automation needs

Day 14: Consolidate

├── Merge overlapping skills

├── Trim any skill over 40 lines

├── Check total word count (stay under 5,000)

└── Update metrics: correction time should be down 50-60%

How do you add self-improvement in Week 3? (target: 30+ skills)

Days 15-16: Self-improving code review

├── Add Self-Evaluation section to code review skill

├── Add three exit conditions (session, daily, size limit)

├── Let it run for 2 days before checking results

└── This is the skill that learns from its own gaps

Day 17: Skill generator

├── A skill that helps you write new skills faster

├── Template with trigger, instructions, constraints, examples

├── Cuts new skill creation from 15 min to 5 min

└── Meta-skill: uses Claude to build Claude's instructions

Days 18-19: MCP integration

├── Connect one MCP server (database, API, or filesystem)

├── Write a hook that uses MCP for context-aware reviews

├── Example: migration review against live schema

└── This is the jump from "rules" to "intelligence"

Day 20: Non-code skill

├── Writing voice, weekly reports, email templates

├── Skills work for any structured output, not just code

└── Designers, PMs, and writers benefit from the same system

Day 21: Stress test

├── Run 10 diverse prompts across your skill library

├── Document which skills fire, which don't, which conflict

├── Identify gaps in coverage

└── Update metrics: correction time should be down 70-80%

How do you scale to 50+ in Week 4?

Days 22-23: Fill gaps from stress test

├── Every gap identified on Day 21 becomes a skill

├── Focus on the corrections you're STILL making

└── These late-stage skills are the most targeted

Day 24: Quality audit

├── Run full 7-point troubleshooting checklist

├── Check all self-improving skills for runaway growth

├── Verify total word count under 5,000

└── Delete non-performing skills (no hits in 2 weeks = delete)

Days 25-26: Package for reuse

├── Select 15-20 best skills for your primary stack

├── Add README, INSTALL.md, CUSTOMIZE.md, CHANGELOG, LICENSE

├── Test installation from scratch in a fresh project

└── This is your template product if you choose to sell

Day 27: Share publicly

├── Post one skill with before/after in [r/ClaudeCode](https://reddit.com/r/ClaudeCode) or [r/claudeskills](https://reddit.com/r/claudeskills)

├── Share your 30-day metrics (Day 0 vs Day 30)

├── Engage with feedback: real users find real gaps

└── This builds your audience for consulting or sales

Days 28-30: Monetization setup

├── [Gumroad](https://gumroad.com) page for template (if selling)

├── Consulting pricing page (if freelancing)

├── First outreach to potential clients in [r/ClaudeCode](https://reddit.com/r/ClaudeCode) or [r/ClaudeAI](https://reddit.com/r/ClaudeAI)

└── Final metrics: correction time should be down 80%+

What metrics prove it’s working?

Track three numbers weekly:

```python
def weekly_metrics(week, skill_count, correction_minutes, skill_hits):
    """Track progress against Day 0 baseline."""
    return {
        "week": week,
        "skills": skill_count,
        "correction_min_per_day": correction_minutes,
        "skill_hits_per_day": skill_hits,
    }

# Example progression
baseline = weekly_metrics(0, 0, 25, 0)
week_1 = weekly_metrics(1, 12, 15, 8)   # -40% correction time
week_2 = weekly_metrics(2, 22, 10, 14)  # -60% correction time
week_3 = weekly_metrics(3, 33, 6, 20)   # -76% correction time
week_4 = weekly_metrics(4, 52, 4, 25)   # -84% correction time

# Percentage drop in daily correction time between Day 0 and Day 30
reduction = round(
    (1 - week_4["correction_min_per_day"] / baseline["correction_min_per_day"]) * 100
)

print(f"Day 0: {baseline['correction_min_per_day']} min/day correcting")
print(f"Day 30: {week_4['correction_min_per_day']} min/day correcting")
print(f"Reduction: {reduction}%")
# Day 0: 25 min/day correcting
# Day 30: 4 min/day correcting
# Reduction: 84%
```

Skill count goes up. Correction time goes down. If correction time isn’t dropping, your skills are either too vague, conflicting, or targeting the wrong problems.
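One way to catch a stall early is to compare each week's correction time with the previous week's. The sketch below assumes you keep the weekly values in a plain list with the Day 0 baseline first; `flag_stalled_weeks` is a hypothetical helper, and the `stalling` series is invented for illustration.

```python
def flag_stalled_weeks(correction_minutes):
    """Return week numbers where correction time did not drop versus the prior week.

    correction_minutes[0] is the Day 0 baseline; index i holds the value for week i.
    """
    stalled = []
    for week in range(1, len(correction_minutes)):
        if correction_minutes[week] >= correction_minutes[week - 1]:
            stalled.append(week)
    return stalled

# Example progression from this section: baseline, then Weeks 1-4.
healthy = [25, 15, 10, 6, 4]
# Hypothetical run where Week 2 stays at the Week 1 level.
stalling = [25, 15, 15, 9, 6]

print(flag_stalled_weeks(healthy))   # []
print(flag_stalled_weeks(stalling))  # [2]
```

A flagged week is a cue to debug existing skills (vague wording, conflicts, wrong targets) before adding new ones.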

What happens after Day 30?

The build phase is over. Now it’s maintenance.

Weekly (7 minutes total):

├── 2 min: Update memory skill with session decisions

└── 5 min: Review self-improving skill changes, approve or revert

Monthly (15 minutes):

├── Architecture review: check folder structure, merge overlaps

├── Word count check: stay under 5,000

└── Delete skills with zero hits in 30 days

Quarterly (1-2 hours):

├── Claude update testing: re-run skill verification prompts

├── Stack update testing: new framework versions, new APIs

└── Full stress test with 10 diverse prompts

What are the 60-day and 90-day goals?

Day 60:

- Trim non-performing skills (if it hasn’t fired in 30 days, delete it)

- Build a second self-improving skill (testing is the natural second choice)

- Start sharing skills publicly (r/ClaudeCode, r/claudeskills, blog post, or open repo)

- First consulting client if you’re pursuing that path

Day 90:

- Correction time 90%+ lower than Day 0

- Active monetization: templates selling or consulting engaged

- Second project or second stack fully covered

- Skills system running with minimal weekly maintenance
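The "hasn't fired in 30 days" rule from the Day 60 list can be applied mechanically if you record when each skill last fired. The sketch below assumes you maintain that record yourself; the `last_hit` mapping, the skill names, and the `stale_skills` helper are all hypothetical, since the plan does not specify how hits are logged.

```python
from datetime import date, timedelta

def stale_skills(last_hit, today, max_idle_days=30):
    """Return skill names whose last recorded hit is older than max_idle_days.

    A value of None means the skill has never fired, so it also counts as stale.
    """
    cutoff = today - timedelta(days=max_idle_days)
    return sorted(
        name for name, hit in last_hit.items()
        if hit is None or hit < cutoff
    )

# Hypothetical hit log: skill name -> date it last fired.
today = date(2025, 3, 31)
last_hit = {
    "stop-slop": date(2025, 3, 30),
    "git-commits": date(2025, 3, 12),
    "error-handling": date(2025, 2, 10),
    "email-templates": None,
}

print(stale_skills(last_hit, today))
```

Passing `max_idle_days=14` gives the stricter two-week cutoff used in the Day 24 quality audit.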

What broke

Week 2 is where most people stall. They build 12 skills in Week 1 and then spend Week 2 adding more skills for the same concerns instead of expanding to new domains. I had 8 skills related to code style before I realized I hadn’t built a single testing skill.

The fix: the Day 14 consolidation step is mandatory, not optional. Merge overlapping skills, check the category distribution, and force yourself into under-covered areas. If 60% of your skills are in one folder, you have a coverage problem.
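The 60% rule can be checked directly from per-folder skill counts. This is a minimal sketch, assuming the counts have already been collected into a dictionary; the folder names and the `coverage_problems` helper are hypothetical.

```python
def coverage_problems(counts, max_share=0.60):
    """Flag empty categories and any category holding max_share or more of all skills."""
    total = sum(counts.values())
    problems = []
    for category, count in sorted(counts.items()):
        if count == 0:
            problems.append(f"{category}: no skills yet")
        elif total and count / total >= max_share:
            problems.append(f"{category}: {count}/{total} skills ({count / total:.0%})")
    return problems

# Hypothetical counts resembling the stall described above:
# 8 code style skills and not a single testing skill.
counts = {"01-style": 8, "02-testing": 0, "03-docs": 2, "04-git": 2}
for line in coverage_problems(counts):
    print(line)
```

With these counts the check reports both symptoms at once: the style folder holds 8 of 12 skills, and the testing folder is empty.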

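```bash
# Coverage distribution check (run on Day 14)
echo "=== Skills per category ==="
for dir in .claude/skills/*/; do
  # Skip the literal glob pattern when no category folders exist yet
  [ -d "$dir" ] || continue
  # Count the .md files in this category folder (0 if there are none)
  count=$(ls "$dir"*.md 2>/dev/null | wc -l | tr -d ' ')
  echo "  $(basename "$dir"): $count skills"
done
# If any category has 0 skills by Day 14, that's your next target
```

What should you actually do?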

- Today —> write down your Day 0 baseline (skill count, correction minutes, copy-paste frequency). You cannot measure improvement without a starting point.

- This week —> complete Week 1. Build 4-6 skills on Days 2-3, reorganize on Day 4, add your first hook on Days 5-6. By Day 7 you will have measurable results.

- If you already have skills —> jump to whatever week matches your current count. 10 skills = start at Week 2. 20 skills = start at Week 3. The roadmap works regardless of starting point.
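The jump-in rule for existing skill libraries amounts to a small lookup. In this sketch, `starting_week` is a hypothetical helper; the 10 and 20 thresholds come from the list above, while the 30 threshold is an assumption extrapolated from the Week 3 target of 30+ skills.

```python
def starting_week(skill_count):
    """Map an existing skill count to the roadmap week to start from."""
    # 10 -> Week 2 and 20 -> Week 3 are stated in the roadmap; 30 -> Week 4 is
    # an assumption based on the Week 3 target of 30+ skills.
    if skill_count >= 30:
        return 4
    if skill_count >= 20:
        return 3
    if skill_count >= 10:
        return 2
    return 1

for count in (0, 10, 24, 35):
    print(count, "skills -> start at Week", starting_week(count))
```

Whichever week you start from, record the Day 0 baseline first so the later checkpoints still have something to compare against.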

Bottom line

- The 30-day plan takes you from 0 to 50+ skills with measurable results at every checkpoint. Correction time drops 80%+ by Day 30.

- Three weekly metrics tell you if the system is working: skill count (up), correction time (down), and skill hits (up). If correction time isn’t dropping, your skills need debugging, not more quantity.

- After Day 30, maintenance is about 7 minutes per week, plus a 15-minute monthly review and a quarterly test pass. The system is self-sustaining with proper exit conditions on self-improving skills and monthly architecture reviews.

Frequently Asked Questions

How many Claude Code skills should I have after 30 days?

50+ if you follow the full roadmap. The number matters less than the correction time metric: you should be correcting Claude 80%+ less by Day 30 compared to Day 0.

How long does it take to maintain Claude Code skills?

After the 30-day build phase: 2 minutes/week for memory updates, 5 minutes/week for self-improving skill review, 15 minutes/month for architecture review, 1-2 hours/quarter for Claude update testing.

When should I start monetizing my skills?

Week 4 of the roadmap. By then you have 40+ working skills, documented results, and enough experience to package templates or start consulting. Don't try to sell what you haven't battle-tested.
