How to Make Claude Code Learn From Its Own Mistakes

>This covers self-improving loops. Claude Code Skills includes memory skills, skill generators, hooks automation, and the full system from first skill to 50+ skill library.

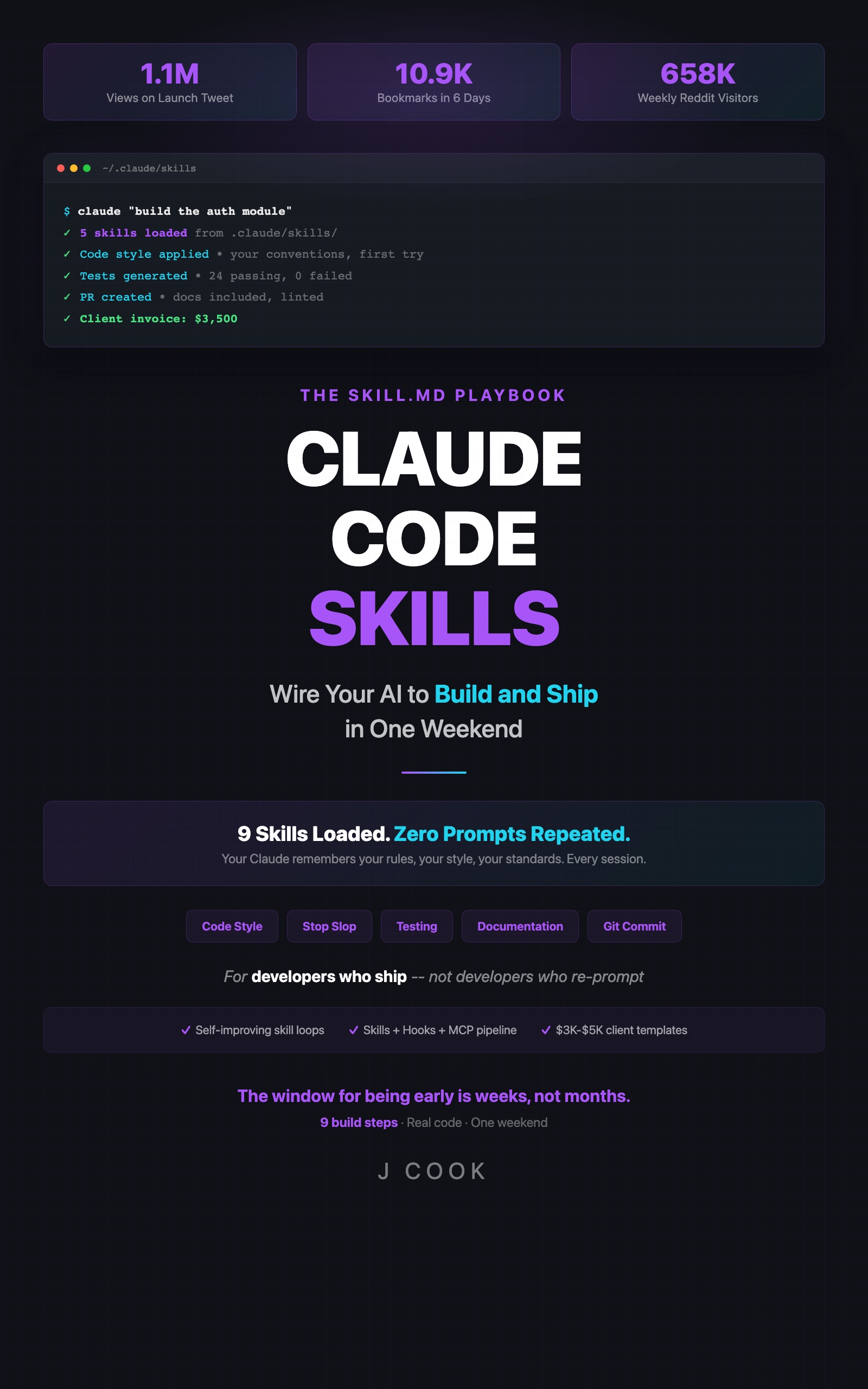


Summary:

- Self-improving skills evaluate Claude’s output and update their own rules automatically.

- The $40 API burn: what happens without exit conditions (800K tokens, 347-line skill, 45 minutes).

- Three mandatory safety rules that keep costs at $1-4/month.

- Copy-paste self-improving code review skill with proper exit conditions.

I built a self-improving skill with no exit condition. It ran for 45 minutes. Grew from 20 lines to 347 lines. Developed contradictory rules. Consumed 800,000 tokens. Cost $40.

Then I added three lines of constraints and the same pattern has been running for two months at $2/month. Here’s the difference.

What is a self-improving skill?

A self-improving skill is a SKILL.md file that includes evaluation criteria and update instructions. After Claude completes a task, it checks its own output against the skill’s rules, identifies gaps, and proposes a rule update.

Normal skill: static rules → same output every time.

Self-improving skill: static rules → evaluates output → updates rules → better output next time.

```markdown
# Code Review (Self-Improving)

## Trigger
When reviewing code changes

## Instructions
- Check for null reference risks in async handlers
- Verify error messages include context (function name, input values)
- Flag missing rate limiting on public endpoints

[rules added by self-evaluation below this line]

## Self-Evaluation
After each code review, evaluate:
1. Did I miss anything the developer later caught?
2. Did I flag something that wasn't actually a problem?
3. Is there a pattern in what I'm missing?

If a new pattern is identified, add it to Instructions.

## Exit Conditions
- Evaluate at most once per session
- Update at most once per day
- Max file size: 60 lines (remove least-useful rule before adding new one)
- Skip update if no improvement identified
```

What does the learning sequence look like?

Real data from my code review skill over 30 days:

| Day | Event | Skill change | Lines |
|---|---|---|---|
| 1 | Missed race condition in async handler | Added “Check for race conditions in async handlers” | 20 → 23 |
| 4 | Caught race condition on next review | No update needed: skill worked | 23 |
| 12 | Missed type coercion bug in comparison | Added “Flag loose equality (==) in conditional checks” | 23 → 26 |
| 19 | No misses for 7 days | No update: exit condition triggered | 26 |
| 26 | Missed missing input validation on API endpoint | Added “Verify input validation on all public route handlers” | 26 → 29 |

Three rules added in 30 days. Nine lines of growth (20 → 29). Negligible API cost. The skill actually got better at reviewing code.
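To make the "Skill change" column concrete, here is a minimal Python sketch of how one learned rule could be appended under the marker line. It assumes the marker text from the skill file above and that sections start with `## `. `add_learned_rule` is a hypothetical helper, not part of Claude Code; in the workflow described here, Claude makes the edit itself.

```python
MARKER = "[rules added by self-evaluation below this line]"


def add_learned_rule(skill_text: str, rule: str, marker: str = MARKER) -> str:
    """Return skill_text with `rule` added as a bullet below the marker line.

    Hypothetical helper: assumes the marker sits on its own line and that
    the next section starts with a '## ' heading.
    """
    lines = skill_text.splitlines()
    for i, line in enumerate(lines):
        if line.strip() == marker:
            break
    else:
        raise ValueError("marker line not found")

    bullet = f"- {rule}"
    if any(existing.strip() == bullet for existing in lines):
        return skill_text  # rule already present, nothing to do

    # Find where the learned-rules block ends (next heading or end of file).
    end = i + 1
    while end < len(lines) and not lines[end].startswith("## "):
        end += 1
    # Step back over trailing blank lines so the bullet joins the block.
    while end > i + 1 and lines[end - 1].strip() == "":
        end -= 1

    lines.insert(end, bullet)
    return "\n".join(lines) + "\n"


skill = (
    "## Instructions\n"
    "- Flag missing rate limiting on public endpoints\n"
    f"{MARKER}\n"
    "\n"
    "## Self-Evaluation\n"
)
print(add_learned_rule(skill, "Check for race conditions in async handlers"))
```

Keeping learned rules below a fixed marker makes it easy to see, during review, which rules were written by hand and which were added by self-evaluation.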

The $40 mistake: what happens without exit conditions

```markdown
# BAD: DO NOT USE THIS

## Self-Evaluation
After every output, evaluate quality and update rules.
```

No frequency limit. No size limit. No skip condition. Here’s what happened:

```text
Minute 0:  Skill file: 20 lines, clear rules
Minute 5:  Evaluation #1: added 3 rules (23 lines)
Minute 10: Evaluation #2: added 4 rules (27 lines)
Minute 15: Evaluation #3: rules starting to overlap
Minute 25: Evaluation #6: contradictory rules (use semicolons / don't use semicolons)
Minute 35: Evaluation #9: 200+ lines, Claude confused by own rules
Minute 45: I noticed my API dashboard. 800,000 tokens. $40.
```

The fix was `git checkout HEAD~1 -- .claude/skills/code-review.md` and three exit conditions.

The three mandatory exit conditions

Every self-improving skill needs all three. No exceptions.

```python
from datetime import datetime


def should_update_skill(skill_file, last_update, current_session_evals):
    """Check all three exit conditions before allowing a skill update."""
    # 1. Frequency limit: once per day max
    hours_since_update = (datetime.now() - last_update).total_seconds() / 3600
    if hours_since_update < 24:
        return {"update": False, "reason": f"Last update {hours_since_update:.0f}h ago, need 24h"}

    # 2. Session limit: one evaluation per session
    if current_session_evals >= 1:
        return {"update": False, "reason": "Already evaluated this session"}

    # 3. Size limit: 60 lines max
    with open(skill_file) as f:
        lines = len(f.readlines())
    if lines >= 60:
        return {"update": False, "reason": f"Skill at {lines} lines: remove a rule before adding"}

    return {"update": True, "reason": "All checks passed"}


# Check before any update
result = should_update_skill(
    ".claude/skills/code-review.md",
    last_update=datetime(2026, 3, 23, 14, 0),
    current_session_evals=0,
)
print(result)
# If the file exists, has fewer than 60 lines, and the last update was 24+ hours ago:
# {'update': True, 'reason': 'All checks passed'}
```

How do you build one from scratch?

Step by step. Start with an existing skill and add the self-evaluation section.

```markdown
# Testing Standards (Self-Improving)

## Trigger
When writing or modifying test files

## Instructions
- Use Vitest with describe/it blocks
- Test behavior not implementation
- Include edge cases: empty input, null, boundary values
- Assertions must be specific (toBe, toEqual) not vague (toBeTruthy)

[self-learned rules below]

## Self-Evaluation
After generating tests, check:
1. Did the tests catch a real bug or only test happy paths?
2. Were any tests trivial (testing that true === true)?
3. Did I miss an obvious edge case the developer had to add manually?

If a pattern is identified in misses, add a specific rule to Instructions.

## Exit Conditions
- Evaluate at most once per session
- Update at most once per day
- Max file size: 60 lines
- Remove least-useful rule before adding new one
- Skip update if no clear improvement identified
```

After a week, this skill might learn:

```markdown
[self-learned rules below]
- Always test the error path for async functions (learned day 3: missed unhandled rejection)
- Generate boundary tests for any function accepting numbers (learned day 7: missed off-by-one)
```

Two rules. Specific. Useful. And it learned them from actual gaps in its own output.
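The "(learned day N: reason)" annotation on each learned rule is worth keeping machine-readable, because it tells you later how old a rule is and why it exists. Below is a rough Python sketch that parses such lines into records. It assumes the annotation format shown in the example rules above; `LearnedRule` and `parse_learned_rules` are hypothetical names introduced for illustration.

```python
import re
from typing import NamedTuple, Optional


class LearnedRule(NamedTuple):
    text: str               # the rule itself, without the annotation
    day: Optional[int]      # day the rule was learned, if annotated
    reason: Optional[str]   # the miss that triggered the rule, if annotated


# Matches "- rule text (learned day 3: some reason)"; the annotation is optional.
_RULE = re.compile(
    r"^-\s+(?P<text>.*?)\s*"
    r"(?:\(learned day (?P<day>\d+):\s*(?P<reason>[^)]*)\))?\s*$"
)


def parse_learned_rules(block: str) -> list[LearnedRule]:
    """Parse bullet lines into LearnedRule records; non-bullet lines are skipped."""
    rules = []
    for line in block.splitlines():
        match = _RULE.match(line.strip())
        if match is None:
            continue
        day = int(match["day"]) if match["day"] else None
        rules.append(LearnedRule(match["text"], day, match["reason"]))
    return rules


example = """[self-learned rules below]
- Always test the error path for async functions (learned day 3: missed unhandled rejection)
- Generate boundary tests for any function accepting numbers (learned day 7: missed off-by-one)
"""
for rule in parse_learned_rules(example):
    print(rule.day, "|", rule.text, "|", rule.reason)
```

With the day available as a number, picking a candidate for the "remove least-useful rule" step can start from the oldest rules, though deciding which one is actually least useful remains a judgment call.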

How much does this cost?

```python
def estimate_monthly_cost(evals_per_week=7, tokens_per_eval=1500):
    """Estimate the monthly evaluation cost for one self-improving skill."""
    # Flat rate of $15 per million tokens. This corresponds to Claude Sonnet's
    # output rate rather than its lower input rate, so it errs on the high side.
    # Check current rates at https://docs.anthropic.com/en/docs/about-claude/models
    cost_per_token = 0.000015
    monthly_evals = evals_per_week * 4
    monthly_tokens = monthly_evals * tokens_per_eval
    monthly_cost = monthly_tokens * cost_per_token
    return {
        "evals_per_month": monthly_evals,
        "tokens_per_month": f"{monthly_tokens:,}",
        "monthly_cost": f"${monthly_cost:.2f}",
    }


print(estimate_monthly_cost(evals_per_week=5))   # monthly_cost: $0.45
print(estimate_monthly_cost(evals_per_week=10))  # monthly_cost: $0.90
print(estimate_monthly_cost(evals_per_week=20))  # monthly_cost: $1.80 (you're probably over-evaluating)
```

At these defaults the estimate stays under $2/month, in line with the roughly $2/month this pattern has cost me and inside a $1-4/month budget for a skill that gets better every week. The $40 burn was from running without exit conditions for 45 minutes. With proper limits, it’s cheaper than a latte. (Verify current token pricing on the Anthropic models page before budgeting.)

What broke (besides the $40)

The second problem was subtler. My self-improving code review skill started adding rules that contradicted each other. “Always use early returns” in one rule, “Keep function flow linear” in another. Claude got confused and produced inconsistent output.

The fix: weekly Friday review. 5 minutes. Read the skill file. Delete any rule that conflicts with another. If two rules compete, keep the more specific one.
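Part of that read-through can be pre-screened. The following is a minimal sketch, using only Python's standard `difflib`, that flags pairs of rules whose wording is very similar. It is an assumption-laden aid, not the author's tooling: `similar_rule_pairs` and the 0.6 threshold are hypothetical, and string similarity only surfaces reworded or overlapping rules. A real contradiction such as "Always use early returns" versus "Keep function flow linear" shares almost no wording, so it still needs a human read.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similar_rule_pairs(rules, threshold=0.6):
    """Return (rule_a, rule_b, score) for rule pairs with similar wording.

    Scores come from difflib.SequenceMatcher.ratio() on lowercased text and
    range from 0.0 (nothing in common) to 1.0 (identical).
    """
    pairs = []
    for a, b in combinations(rules, 2):
        score = SequenceMatcher(None, a.lower(), b.lower()).ratio()
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    # Most similar pairs first, since those are the likeliest duplicates.
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)


rules = [
    "Check for race conditions in async handlers",
    "check for race conditions in async handlers",
    "Flag loose equality (==) in conditional checks",
]
for a, b, score in similar_rule_pairs(rules):
    print(f"{score}: {a!r} <-> {b!r}")
```

Anything this flags is a candidate for merging; following the rule above, keep the more specific of the two.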

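```bash
#!/usr/bin/env bash
# Friday skill review: run this weekly
shopt -s globstar nullglob  # let ** recurse into subdirectories (bash 4+)

echo "=== Self-improving skills ==="
for f in .claude/skills/**/*.md; do
  lines=$(wc -l < "$f")
  if grep -q "Self-Evaluation" "$f"; then
    echo "$f: $lines lines (SELF-IMPROVING)"
    # List up to 20 rules, sorted so similar ones sit together for a manual conflict check
    grep "^- " "$f" | sort | head -20
  fi
done
```

What should you actually do?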

- Start with one skill → take your existing code review skill, add the Self-Evaluation and Exit Conditions sections. Run it for a week.

- After one week → check what it learned. If the new rules are useful, add a second self-improving skill (testing is a good candidate).

- Never skip exit conditions → if you remember one thing: evaluate once per session, update once per day, max 60 lines. Those three rules are the difference between $2/month and $40/hour.
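The first step can be sketched in Python. This is an illustrative helper under stated assumptions, not an official tool: `make_self_improving` is a hypothetical function, and the section text is adapted from the code review skill shown earlier. It appends the two sections only when they are missing, so running it twice changes nothing.

```python
SELF_EVALUATION = """## Self-Evaluation
After each task, evaluate:
1. Did I miss anything the developer later caught?
2. Did I flag something that wasn't actually a problem?
3. Is there a pattern in what I'm missing?
If a new pattern is identified, add it to Instructions.
"""

EXIT_CONDITIONS = """## Exit Conditions
- Evaluate at most once per session
- Update at most once per day
- Max file size: 60 lines
- Skip update if no improvement identified
"""


def make_self_improving(skill_text: str) -> str:
    """Append Self-Evaluation and Exit Conditions sections if they are absent."""
    result = skill_text.rstrip("\n") + "\n"
    sections = (
        ("## Self-Evaluation", SELF_EVALUATION),
        ("## Exit Conditions", EXIT_CONDITIONS),
    )
    for heading, section in sections:
        if heading not in result:
            result += "\n" + section
    return result


existing_skill = "# Code Review\n\n## Instructions\n- Flag missing rate limiting on public endpoints\n"
print(make_self_improving(existing_skill))
```

Adding both sections in one step reflects the main point of this article: a Self-Evaluation section should never ship without its Exit Conditions.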

Bottom line

- Self-improving skills learn from gaps in their own output. Three rules added over 30 days made my code review meaningfully better.

- Exit conditions are non-negotiable. Without them, the skill spirals, costs explode, and rules contradict each other.

- Start with one. Run it for a week. The cost is $1-4/month. The risk, with exit conditions and a weekly review for conflicting rules, is low.

Frequently Asked Questions

How much does a self-improving Claude skill cost to run?

1,000-2,000 tokens per evaluation, roughly $0.015-$0.03 at $15 per million tokens. At 5-10 evaluations per week, that's about $0.30-$1.20/month in evaluation tokens, so a $1-4/month budget per skill leaves headroom. Add budget alerts on your Anthropic account.

What happens if a self-improving skill goes wrong?

It grows uncontrollably and produces contradictory rules. The fix is to restore the previous committed version from Git: `git checkout HEAD~1 -- .claude/skills/file.md`. Always version control your skills.

Can a Claude skill really update itself?

Yes. Since March 2026, skills support a feedback mechanism where Claude evaluates its own output against the skill's rules and proposes updates (see the [Claude Code docs](https://docs.anthropic.com/en/docs/claude-code) for the latest on skills). You need proper exit conditions or it spirals.
