How to Sell Claude Code Skills Consulting for $2,200-$5,200 Per Engagement

>This covers the consulting model. Claude Code Skills includes the complete skills system, self-improving patterns, and template marketplace strategies for passive income.

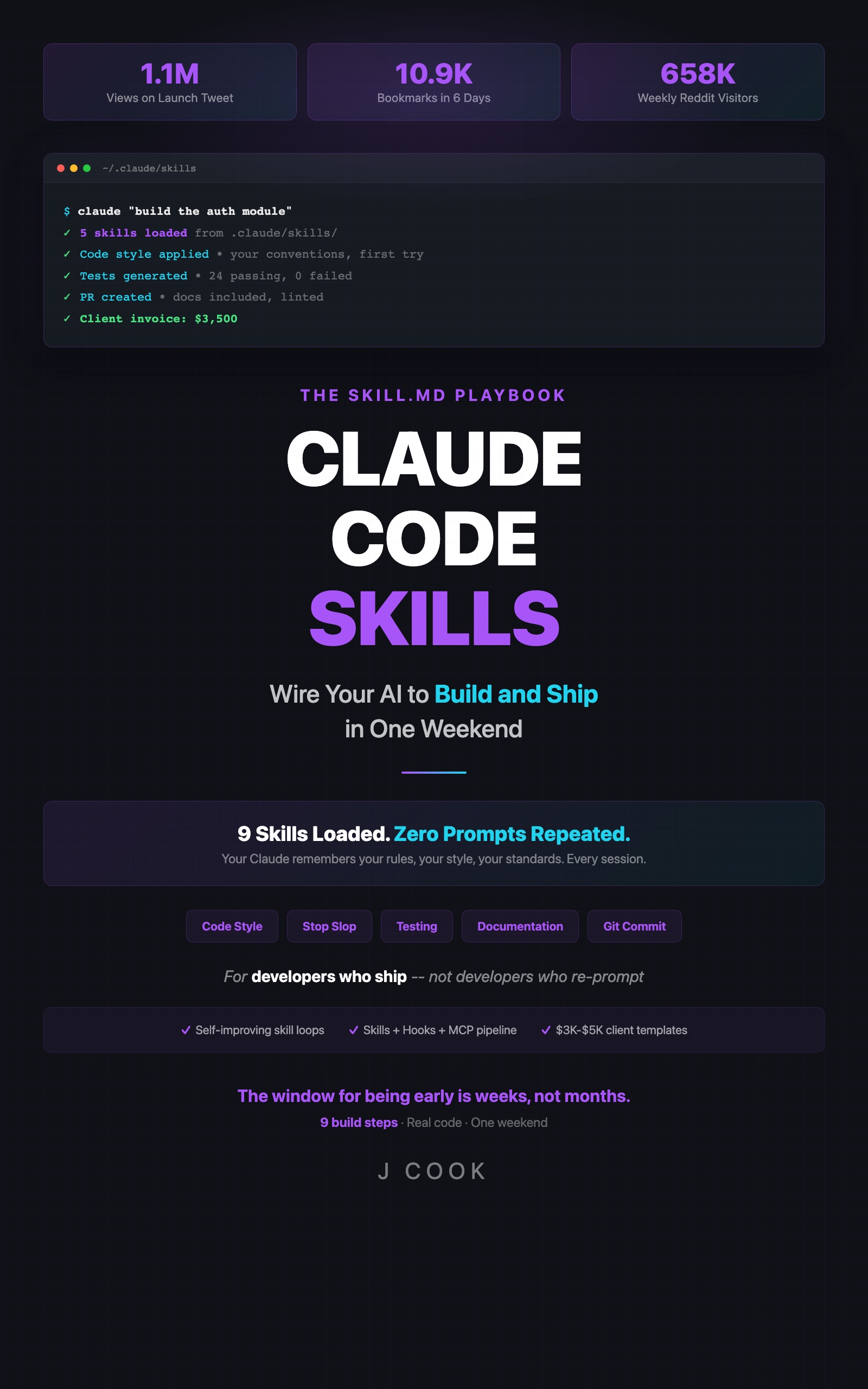

Claude Code Skills

The SKILL.md Playbook — Wire Your AI to Build and Ship in One Weekend

Summary:

- Real engagement: $2,200 for 10 hours with a 3-person Next.js startup.

- The two-day delivery process: audit, build, verify, walkthrough.

- Three pricing packages from $1,500 to $5,000+ per engagement.

- Sales math that makes the ROI obvious to any engineering manager.

- First 30 days from zero to paying clients.

Developer Jake made $5,200 for two days of work building a skills library. Not a SaaS product. Not a course. Markdown files in .claude/skills/. (See the Claude Code documentation for how skills directories work.)

Here’s a real engagement I can break down completely: $2,200 for 10 hours with a 3-person Next.js startup.

What does a skills consulting engagement look like?

Two days. Four phases. The client goes from “Claude keeps using the wrong framework” to “Claude knows our entire stack.”

Day 1 AM: The Audit. I tested their unconfigured Claude Code instance by asking it to generate three typical components. Claude used Express instead of App Router. Jest instead of Vitest. Default imports everywhere. No consistency with their existing codebase patterns. I documented every mistake with screenshots.

Day 1 PM: The Build. Built 12 skills covering their stack: App Router conventions, Vitest testing, their component library patterns, their API structure, their deployment pipeline, and their code review standards. Each skill file written, tested against the same prompts from the morning, and verified.

Day 2 AM: Verification. Ran the exact same requests from Day 1 AM. Claude now used App Router, Vitest, their component patterns, and their naming conventions. Side-by-side screenshots of every before/after.

Day 2 PM: Walkthrough. 55-minute session with the team. Showed every before/after screenshot. Explained how each skill file works. Taught them how to add new skills and update existing ones. Handed over the full library with documentation.
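The before/after comparison from the audit and verification phases can also be scored mechanically, which gives the screenshots a number to sit next to. The sketch below is one possible way to do that, not the tooling used in the engagement: the `Check` class, the `audit` function, and the three regex patterns are all hypothetical and would need to be tuned to each client's standards.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    name: str
    pattern: str        # regular expression searched in the generated code
    should_match: bool  # True = team standard, False = known deviation


# Illustrative checks for the Next.js team described above (assumed patterns).
CHECKS = [
    Check("imports from vitest", r"from ['\"]vitest['\"]", True),
    Check("no Jest globals", r"\bjest\.", False),
    Check("no Express server", r"from ['\"]express['\"]", False),
]


def audit(generated_code: str, checks=CHECKS) -> list[str]:
    """Return the names of the checks that the generated code fails."""
    failures = []
    for check in checks:
        found = re.search(check.pattern, generated_code) is not None
        if found != check.should_match:
            failures.append(check.name)
    return failures


# Made-up snippets standing in for Claude's output on Day 1 and Day 2.
before = 'import express from "express"\njest.mock("./db")\n'
after = 'import { describe, it, expect } from "vitest"\n'

print(audit(before))  # ['imports from vitest', 'no Jest globals', 'no Express server']
print(audit(after))   # []
```

Running the same checks against the Day 1 and Day 2 outputs turns "it looks better" into a count of deviations that dropped to zero, which is easier to put in the comparison document.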

# Engagement Deliverables Checklist

## Day 1

- [ ] Baseline audit: 3 unconfigured prompts, screenshot each output

- [ ] Document every deviation from team standards

- [ ] Build 10-15 skills covering stack, testing, style, review

- [ ] Test each skill against original prompts

## Day 2

- [ ] Re-run baseline prompts with skills active

- [ ] Create before/after comparison document

- [ ] 55-min walkthrough with team (screen share + recording)

- [ ] Deliver: skills library, comparison doc, maintenance guide

Total time: 10 hours. Total payment: $2,200.

How do you price this?

Three packages. The middle one is where most clients land.

| Package | Price | Scope | Time |
|---|---|---|---|
| Starter | $1,500-$2,500 | 10-15 skills | 1-2 days, 8-15 hrs |
| Full Stack | $3,000-$5,000 | Skills + hooks + MCP | 2-4 days, 16-30 hrs |
| Ongoing | $1,000-$2,000 | Monthly audit + updates | 4-8 hrs/mo |

The Starter package covers teams that want their coding standards encoded. The Full Stack package adds automated hooks (pre-commit review, doc sync, auto-testing) and MCP server integration. The Ongoing package is for teams that want you to maintain and evolve their skills library as their codebase grows.

Jake’s $5,200 engagement was a Full Stack package: 2 days, skills plus hooks, with a team that had complex deployment requirements.
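It can help to translate the packages into an effective hourly rate before quoting. The sketch below uses only the price and hour ranges listed above; pairing the lowest price with the most hours (and the highest price with the fewest) is my assumption about the extremes, and `hourly_range` is a hypothetical helper name.

```python
# Price and hour ranges copied from the three packages above.
PACKAGES = {
    "Starter": {"price": (1500, 2500), "hours": (8, 15)},
    "Full Stack": {"price": (3000, 5000), "hours": (16, 30)},
    "Ongoing (per month)": {"price": (1000, 2000), "hours": (4, 8)},
}


def hourly_range(price, hours):
    """Worst case: lowest price over most hours. Best case: the reverse."""
    low = price[0] / hours[1]
    high = price[1] / hours[0]
    return low, high


for name, package in PACKAGES.items():
    low, high = hourly_range(package["price"], package["hours"])
    print(f"{name}: ${low:,.2f} to ${high:,.2f} per hour")

# The engagement broken down earlier: $2,200 for 10 hours.
print(f"Next.js startup engagement: ${2200 / 10:,.2f} per hour")
```

The worked engagement lands at $220 per hour, inside the Starter range, which is a useful sanity check when scoping a new quote.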

How do you close the sale?

The math does the selling. Walk any engineering manager through this:

    def calculate_wasted_hours(team_size, minutes_correcting_per_day, hourly_rate,
                               engagement_cost=2500):
        """ROI calculation that closes deals."""
        daily_waste = team_size * minutes_correcting_per_day / 60  # hours per day
        annual_waste = daily_waste * 250  # working days per year
        annual_cost = annual_waste * hourly_rate
        return {
            "daily_hours_wasted": round(daily_waste, 1),
            "annual_hours_wasted": round(annual_waste),
            "annual_cost_wasted": f"${annual_cost:,.0f}",
            # Working days until the engagement fee is recovered
            "breakeven_days": round(engagement_cost / (daily_waste * hourly_rate)),
        }

    # Typical 10-person team
    result = calculate_wasted_hours(
        team_size=10,
        minutes_correcting_per_day=20,
        hourly_rate=55,
    )
    print(result)
    # {
    #   'daily_hours_wasted': 3.3,
    #   'annual_hours_wasted': 833,
    #   'annual_cost_wasted': '$45,833',
    #   'breakeven_days': 14
    # }

10 developers correcting Claude for 20 minutes per day at $55/hour = $45,833/year wasted. A $2,500 skills setup pays for itself in 14 working days. The ROI is so obvious it barely needs explaining.
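An engineering manager will often push back on the 20-minute figure, so it is worth showing how the break-even moves when the inputs change. This is a rough sketch under stated assumptions: `breakeven_days` and the `fraction_recovered` parameter are hypothetical names, and the default assumes skills eliminate all correction time, which is the optimistic case.

```python
def breakeven_days(team_size, minutes_per_day, hourly_rate,
                   engagement_cost=2500, fraction_recovered=1.0):
    """Working days until recovered correction time covers the engagement cost.

    fraction_recovered is the share of correction time the skills actually
    remove (1.0 = all of it, an optimistic assumption).
    """
    daily_savings = team_size * minutes_per_day / 60 * hourly_rate * fraction_recovered
    return engagement_cost / daily_savings


# Same 10-person team at $55/hour, with different amounts of daily correction.
for minutes in (5, 10, 20, 30):
    days = breakeven_days(team_size=10, minutes_per_day=minutes, hourly_rate=55)
    print(f"{minutes:>2} min/day -> break-even in about {days:.1f} working days")

# A more cautious case: skills remove only half of the 20 minutes.
cautious = breakeven_days(10, 20, 55, fraction_recovered=0.5)
print(f"Half recovered -> about {cautious:.1f} working days")
```

Even the cautious case breaks even in roughly 27 working days, so the pitch survives a skeptical estimate.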

How do you handle objections?

Two objections come up in every sales conversation.

“We can do this ourselves.” The answer: “You could have 3 weeks ago. Your team has had Claude Code since launch. The skills directory is still empty. I’m selling 10 hours of focused work that your developers will keep deprioritizing because it’s not a product feature.”

“It’s just markdown files.” The answer: “A lawyer charges $500/hour for a Word document. An architect charges $200/hour for a PDF. The value isn’t the file format. It’s knowing exactly what goes in the file.”

Offer a 50% refund guarantee. If the before/after screenshots don’t show measurable improvement, refund half. You will never pay this out because the improvement is always visible. Unconfigured Claude vs. skill-configured Claude is a night-and-day difference in every engagement.

What’s the first 30 days to getting clients?

Week 1: Portfolio

├── Build your own skills library for your primary stack

├── Document before/after results with screenshots

├── Create a case study page (even from your own project)

└── Record a 2-minute demo video

Week 2: Communities

├── Post skills tips in [r/ClaudeCode](https://reddit.com/r/ClaudeCode) (helpful, not salesy — 658K weekly visitors)

├── Answer "Claude keeps doing X wrong" posts with skill solutions

├── Share one skill file publicly with before/after in [r/ClaudeAI](https://reddit.com/r/ClaudeAI)

└── Join Claude/AI dev Discord servers

Week 3: Content

├── Write one article about skills (your own blog or dev.to)

├── Tweet your before/after comparisons

├── Comment on AI coding threads with specific results

└── Publish your pricing page

Week 4: Outreach

├── DM 10 startup CTOs who post about AI coding frustrations

├── Offer one free 30-minute audit to generate a testimonial

├── Follow up with warm leads from community engagement

└── Close first paid engagement

What broke

First engagement, I spent 8 hours building 20 skills without testing them incrementally. Three of the skills conflicted with each other. The code review skill mentioned Jest, the testing skill specified Vitest, and the style skill referenced conventions that didn’t match the team’s actual patterns.

Fix: build 3-4 skills, test them, then build the next batch. Never deliver untested skills. The verification step on Day 2 AM exists because of this exact failure.
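The Jest-versus-Vitest clash above is the kind of conflict a script can catch between batches. The following is a minimal sketch, not a complete linter: the `EXCLUSIVE_GROUPS` table, the function names, and the example file names are all hypothetical, and the groups would need to be adjusted for each client's stack.

```python
import re
from pathlib import Path

# Groups where a skills library should name only one option.
# Illustrative assumptions; extend or replace per client.
EXCLUSIVE_GROUPS = {
    "test runner": ["jest", "vitest", "mocha"],
    "package manager": ["npm", "yarn", "pnpm"],
}


def find_conflicts(skills: dict[str, str], groups=EXCLUSIVE_GROUPS):
    """Map each group to {option: [files mentioning it]} when 2+ options appear."""
    conflicts = {}
    for group, options in groups.items():
        seen = {}
        for option in options:
            pattern = re.compile(rf"\b{re.escape(option)}\b", re.IGNORECASE)
            files = sorted(name for name, text in skills.items() if pattern.search(text))
            if files:
                seen[option] = files
        if len(seen) > 1:
            conflicts[group] = seen
    return conflicts


def load_skills(root=".claude/skills"):
    """Read every markdown file under the skills directory into memory."""
    return {str(p): p.read_text(encoding="utf-8") for p in Path(root).rglob("*.md")}


# In-memory example mirroring the failure described above (made-up file names).
example = {
    "code-review/SKILL.md": "Check that Jest mocks are reset between tests.",
    "testing/SKILL.md": "Write all tests with Vitest.",
}
print(find_conflicts(example))
# {'test runner': {'jest': ['code-review/SKILL.md'], 'vitest': ['testing/SKILL.md']}}
```

On a real library, `find_conflicts(load_skills())` would scan the files on disk. A skill that says "never use Jest" will also be flagged, so treat each hit as something to review by hand rather than an automatic failure.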

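    # Conflict check: run before every delivery
    shopt -s globstar nullglob   # let ** recurse; skip the loop if nothing matches

    for skill in .claude/skills/**/*.md; do
      echo "=== $skill ==="
      # Test frameworks that should not appear alongside the team's chosen one
      grep -inE "jest|mocha" "$skill"
      # Style rules that are easy to state two different ways
      grep -in "semicolons" "$skill"
    done

    # Hits in more than one file are candidates for a conflict; review them by hand

What should you actually do?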

- If you have a working skills library for one stack → write up the before/after results, create a simple pricing page, and post it in one community this week. Your first client will come from someone who saw your results and wants the same thing.

- If you don’t have a skills library yet → build one for your own project first. You cannot sell what you haven’t built. Take a week, document everything, then start the 30-day client acquisition plan.

- If you want recurring revenue → the Ongoing package at $1,000-$2,000/month is where the real money is. Monthly audits take 4-8 hours and clients stay for 6+ months because their codebase keeps evolving. r/ClaudeCode and r/ClaudeAI are the two best communities for finding these clients organically.

Bottom line

- A real engagement pays $2,200-$5,200 for 1-4 days of work. The deliverable is markdown files. The value is the expertise encoded in them.

- The sales math closes itself: 10 developers wasting 20 minutes/day at $55/hour = $45,833/year. A $2,500 engagement pays for itself in about 14 working days.

- Start by building your own skills library and documenting the results. You cannot sell expertise you haven’t demonstrated.

Frequently Asked Questions

How much can I charge for Claude Code skills consulting?

Three packages: Starter at $1,500-$2,500 for 10-15 skills in 1-2 days, Full Stack at $3,000-$5,000 for hooks and MCP integration in 2-4 days, and Ongoing at $1,000-$2,000/month for monthly audits.

What if the client says they can build skills themselves?

They can. They could have 3 weeks ago, and they didn't. You're selling speed and expertise, not access to a secret. A lawyer charges $500/hour for a Word document.

Do I need to be an expert before consulting?

You need a working skills library for at least one stack. Build your own first, document the results, then sell the process. Your portfolio is your proof.
