How to Build a Multi-Agent AI Trading System

> This covers the multi-agent architecture. *Use Claude to Build an AI Trading Bot* goes deeper on the screener, flow trader, backtesting, and risk management that feed into it.

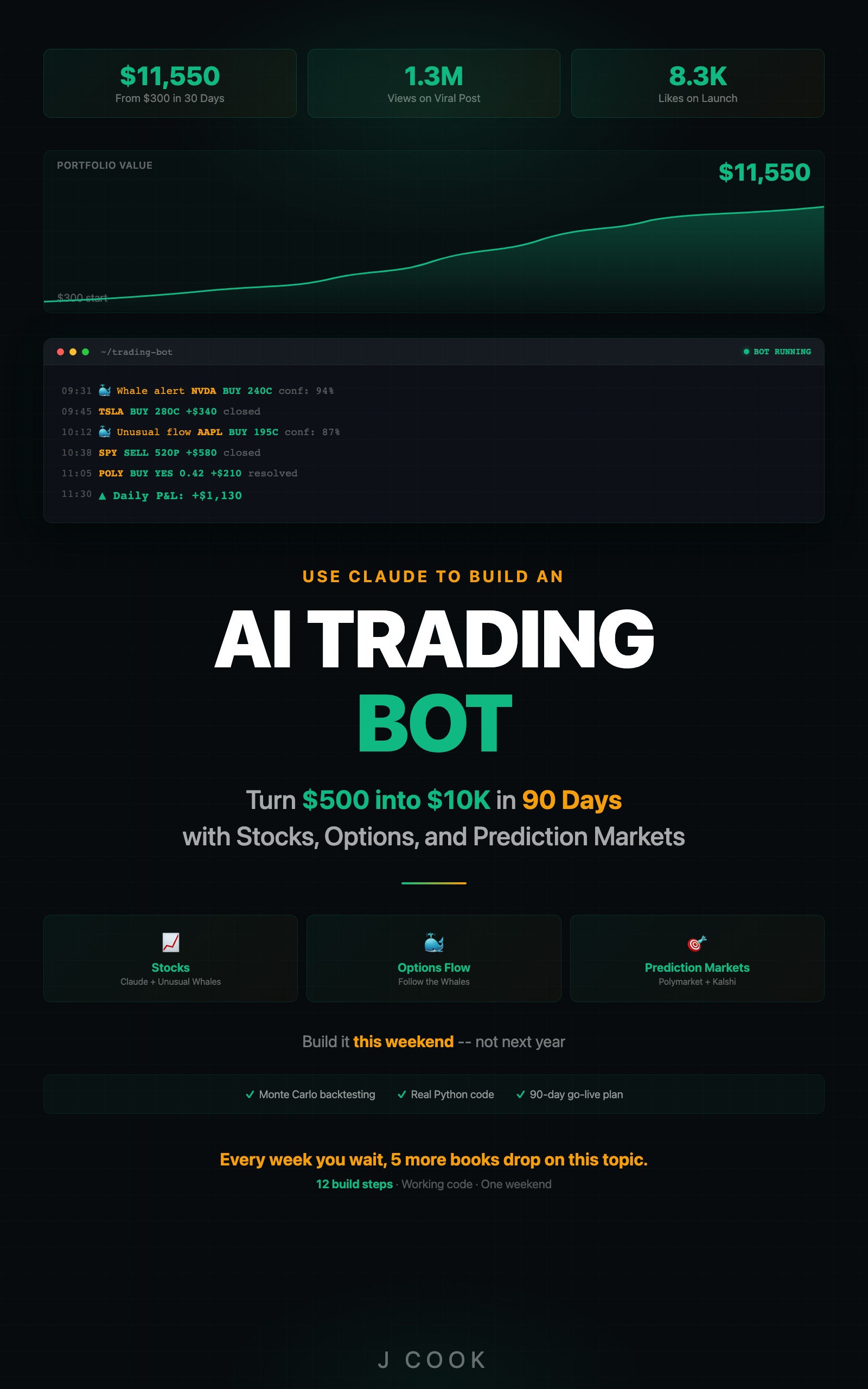


Summary:

- Build 4 specialized Claude agents: Analyst, Risk Manager, Executor, and Monitor.

- Wire them in a sequential pipeline where the Risk Manager can override the Analyst.

- Cut API costs from $40/day to $3/day with shared context and structured communication.

- Copy-paste orchestrator with full cycle logging and a continuous running mode.

Paper trading only. This system runs in paper-trading mode by default: the executor submits orders to Alpaca’s paper account (`paper=True`), so no real money moves. Do not connect to a live broker until you have 90+ days of paper results showing consistent performance.

The first version of my multi-agent system cost me $40 in API credits in six hours. Not because it traded badly. Because every Claude instance ran the full analysis pipeline independently. Four agents, each making 50+ API calls per session, most of them redundant. Like hiring four analysts and having them all read the same report separately.

The second version cost $3 per day. Same model. Same agents. Just smarter plumbing.

Why do multiple agents beat a single agent?

A single Claude instance doing all the work is like having one person be the analyst, risk manager, trader, and compliance officer. The analysis gets biased toward trading because that is the exciting part. The risk assessment gets weak because the same brain that found the opportunity is now evaluating it.

Multiple agents fix this through forced specialization and adversarial checking.

When the Analyst says “NVDA looks bullish, 82% confidence, buy 50 shares,” the Risk Manager can disagree: “The portfolio is already 35% tech. Adding NVDA pushes sector concentration to 42%. I’m capping this at 20 shares.” The Analyst does not get to override.

Your AI agents negotiate in 3 seconds instead of 30 minutes. Here is a real example:

```
[ANALYST] NVDA: BUY | Confidence: 84% | Shares: 25
[ANALYST] AMD: BUY | Confidence: 76% | Shares: 40
[ANALYST] MSFT: BUY | Confidence: 71% | Shares: 15
[RISK] APPROVED: NVDA (25 shares)
[RISK] REDUCED: AMD to 15 shares (portfolio already 28% tech)
[RISK] REJECTED: MSFT (would push tech to 47%)
```

Three individually sound recommendations. Together, they would have created dangerous sector concentration. No single agent catches this.
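The concentration figures the Risk Manager quotes can also be computed deterministically, which gives you a number to check its reasoning against. A minimal sketch, assuming a hypothetical `SECTORS` mapping and illustrative position values (neither comes from the system described here):

```python
# Hypothetical ticker-to-sector map; a real system would load this from a data source.
SECTORS = {"NVDA": "tech", "AMD": "tech", "MSFT": "tech", "JPM": "financials"}

def sector_pct_after(positions, portfolio_value, sector, buys):
    """Percent of the portfolio in `sector` after the proposed buys.

    positions: {ticker: market_value}; buys: {ticker: dollar_amount}.
    Buys are paid from cash, so total portfolio value stays the same.
    """
    held = sum(v for t, v in positions.items() if SECTORS.get(t) == sector)
    added = sum(v for t, v in buys.items() if SECTORS.get(t) == sector)
    return (held + added) / portfolio_value * 100

positions = {"NVDA": 20_000, "JPM": 10_000}  # illustrative values only
portfolio_value = 100_000                     # positions plus cash

before = sector_pct_after(positions, portfolio_value, "tech", {})
after = sector_pct_after(positions, portfolio_value, "tech",
                         {"AMD": 5_000, "MSFT": 8_000})
print(f"tech before: {before:.1f}%  after: {after:.1f}%")
```

In this made-up portfolio, tech exposure rises from 20% to 33% once both buys are counted together, which is the kind of aggregate effect a per-ticker analysis does not see.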

How do you build the 4-agent system?

Each agent is a function that calls Claude with a role-specific prompt and receives structured JSON output.

```python
import os
import json
from datetime import datetime

from dotenv import load_dotenv
from anthropic import Anthropic
from alpaca.trading.client import TradingClient
from alpaca.trading.requests import MarketOrderRequest
from alpaca.trading.enums import OrderSide, TimeInForce

load_dotenv()

claude = Anthropic()  # reads ANTHROPIC_API_KEY from the environment
alpaca = TradingClient(
    os.getenv("ALPACA_API_KEY"),
    os.getenv("ALPACA_SECRET_KEY"),
    paper=True,  # paper-trading account only
)

def get_portfolio():
    """Shared context: fetch portfolio once per cycle."""
    acct = alpaca.get_account()
    positions = alpaca.get_all_positions()
    portfolio_value = float(acct.portfolio_value)
    return {
        "cash": float(acct.cash),
        "value": portfolio_value,
        "positions": [{
            "symbol": p.symbol,
            # float, not int: a fractional quantity such as "1.5" would break int()
            "qty": float(p.qty),
            "market_value": float(p.market_value),
            "pnl_pct": float(p.unrealized_plpc) * 100,
            "pct_of_portfolio": float(p.market_value) / portfolio_value * 100,
        } for p in positions],
    }
```

The get_portfolio() function runs once per cycle and is shared across all agents. This is the fix that cut costs from $40 to $3. Each agent receives the same portfolio snapshot instead of fetching its own.

How does each agent work?

```python
def parse_json_reply(text):
    """Parse a JSON reply, tolerating a Markdown code fence around it."""
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        if "```" in text:
            text = text.split("```")[1]
            if text.startswith("json"):
                text = text[4:]
        return json.loads(text.strip())

def agent_analyst(portfolio):
    """Find trading opportunities. Returns recommendations."""
    # Note: this call configures no tools or MCP server, so the model can only
    # use Unusual Whales data if you connect it separately or paste it into the prompt.
    resp = claude.messages.create(
        model="claude-sonnet-4-20250514", max_tokens=2000,
        messages=[{"role": "user", "content": f"""You are the ANALYST.
Find the best 3 trading opportunities right now.
Portfolio: {json.dumps(portfolio, indent=2)}
Using Unusual Whales MCP, scan for:
- Unusual options flow (sweeps/blocks, premium > $300K)
- 3+ signal convergence required for recommendations
Return JSON: {{"recommendations": [
{{"ticker": "SYM", "direction": "BUY",
"confidence": 0-100, "suggested_shares": N,
"reasoning": "2-3 sentences",
"signals": ["signal1", "signal2"]}}
]}}"""}])
    return parse_json_reply(resp.content[0].text)

def agent_risk(recommendations, portfolio):
    """Evaluate and approve/reduce/reject trades."""
    resp = claude.messages.create(
        model="claude-sonnet-4-20250514", max_tokens=1500,
        messages=[{"role": "user", "content": f"""You are the RISK MANAGER.
You are naturally skeptical. Protect capital.
Portfolio: {json.dumps(portfolio, indent=2)}
Analyst recommends: {json.dumps(recommendations, indent=2)}
Rules:
- Max 2% of portfolio per new position
- No sector above 40% of portfolio
- Block trades on stocks reporting within 3 days
- If today's P&L is already -3%, block all buys
For each, return: {{"decisions": [
{{"ticker": "SYM", "action": "APPROVE"/"REDUCE"/"REJECT",
"approved_shares": N, "reasoning": "why"}}
]}}
When in doubt, REDUCE or REJECT."""}])
    return parse_json_reply(resp.content[0].text)
```

The Risk Manager prompt includes “You are naturally skeptical” and “When in doubt, REDUCE or REJECT.” Without this, the Analyst’s bullish reasoning contaminates the Risk Manager’s evaluation. The enthusiasm leaks through the shared context.
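Because the sizing rules live only in the prompt, nothing guarantees the model follows them. One option is to re-apply the hard limit in code after the Risk Manager responds. The sketch below assumes a hypothetical `clamp_decisions` helper and a caller-supplied `prices` dict; neither is part of the original system.

```python
import math

MAX_POSITION_PCT = 2.0  # mirrors "Max 2% of portfolio per new position" in the prompt

def clamp_decisions(decisions, portfolio_value, prices):
    """Cap approved_shares so no new position exceeds MAX_POSITION_PCT.

    `prices` maps ticker -> latest price (hypothetical input).
    Decisions with an unknown price are rejected rather than guessed at.
    """
    budget = portfolio_value * MAX_POSITION_PCT / 100
    clamped = []
    for d in decisions:
        d = dict(d)  # copy so the caller's list is not mutated
        price = prices.get(d.get("ticker"))
        if d.get("action", "REJECT") == "REJECT" or not price:
            d["action"], d["approved_shares"] = "REJECT", 0
        else:
            max_shares = math.floor(budget / price)
            if d.get("approved_shares", 0) > max_shares:
                d["approved_shares"] = max_shares
                d["action"] = "REDUCE" if max_shares > 0 else "REJECT"
        clamped.append(d)
    return clamped

decisions = [
    {"ticker": "NVDA", "action": "APPROVE", "approved_shares": 25},
    {"ticker": "AMD", "action": "REDUCE", "approved_shares": 15},
]
# Illustrative prices; with a $100,000 portfolio the per-position budget is $2,000.
result = clamp_decisions(decisions, 100_000, {"NVDA": 120.0, "AMD": 100.0})
for d in result:
    print(d["ticker"], d["action"], d["approved_shares"])
```

The design choice here is that the model proposes and the code enforces: the prompt can stay conversational while the numeric limit is checked the same way every cycle.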

Verification gates between agents. Before the executor acts on an analyst’s signal: (1) check that data timestamps are within 15 minutes of the current time; (2) verify the ticker symbol exists and is tradeable; (3) confirm the Risk Manager approved the position size; (4) check that the market is open. Skip any signal that fails a gate.
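The four gates can be expressed as one pure function that the executor calls per signal. This is a sketch under assumptions: the `passes_gates` name, the shape of `signal`, and the `approved` and `tradeable` inputs are all hypothetical, and in practice the tradeable set and market-open flag would come from your broker.

```python
from datetime import datetime, timedelta, timezone

MAX_DATA_AGE = timedelta(minutes=15)

def passes_gates(signal, approved, tradeable, market_open, now=None):
    """Return (ok, reason) for one signal.

    signal: {"ticker": str, "data_timestamp": timezone-aware datetime, "shares": int}
    approved: {ticker: approved_shares} taken from the Risk Manager's decisions
    tradeable: set of tickers known to exist and be tradeable
    """
    now = now or datetime.now(timezone.utc)
    # Gate 1: data must be fresh
    if now - signal["data_timestamp"] > MAX_DATA_AGE:
        return False, "stale data"
    # Gate 2: ticker must exist and be tradeable
    if signal["ticker"] not in tradeable:
        return False, "unknown or untradeable ticker"
    # Gate 3: size must be within what the Risk Manager approved
    limit = approved.get(signal["ticker"], 0)
    if limit <= 0 or signal["shares"] > limit:
        return False, "size not approved by risk manager"
    # Gate 4: market must be open
    if not market_open:
        return False, "market closed"
    return True, "ok"

now = datetime(2025, 1, 6, 15, 0, tzinfo=timezone.utc)  # fixed time for the example
signal = {"ticker": "NVDA", "data_timestamp": now - timedelta(minutes=5), "shares": 20}
print(passes_gates(signal, {"NVDA": 25}, {"NVDA"}, True, now=now))
```

Returning a reason alongside the boolean makes skipped signals easy to find in the cycle log.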

What broke when I built this?

The $40/day cost problem was the obvious one. Each agent making independent API calls for the same data.

The subtle bug was prompt contamination. The Risk Manager reads the Analyst’s enthusiastic case and becomes less skeptical. The fix: explicit adversarial instructions in the Risk Manager prompt plus “Ignore the Analyst’s confidence score. Evaluate purely on portfolio risk metrics.”
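A complementary option, separate from the prompt fix described above, is to remove the persuasive fields before they ever reach the Risk Manager. The sketch below assumes a hypothetical `sanitize_for_risk` helper; which fields to hide is a judgment call, and here it is assumed that `confidence` and `reasoning` are the ones that carry the Analyst's enthusiasm.

```python
import json

# Fields withheld from the Risk Manager (an assumption, adjust to taste).
# Names follow the Analyst's JSON schema.
HIDDEN_FIELDS = {"confidence", "reasoning"}

def sanitize_for_risk(recommendations):
    """Return copies of the recommendations without the hidden fields."""
    return [
        {k: v for k, v in rec.items() if k not in HIDDEN_FIELDS}
        for rec in recommendations
    ]

recs = [{
    "ticker": "NVDA", "direction": "BUY", "confidence": 84,
    "suggested_shares": 25, "reasoning": "Strong flow and momentum.",
    "signals": ["call sweeps", "dark pool prints"],
}]
clean = sanitize_for_risk(recs)
print(json.dumps(clean, indent=2))
```

The Risk Manager would then receive `clean` instead of `recs`, so it sees the ticker, direction, size, and raw signals but not the Analyst's score or narrative.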

The third bug: stale portfolio data. If the Risk Manager evaluates sector concentration based on yesterday’s positions because the portfolio fetch was cached, it approves trades that violate concentration limits. Fix: fresh get_portfolio() call at the start of every cycle, shared with all agents.

How do you run continuous cycles?

```python
import time

def run_cycle():
    """One complete multi-agent decision cycle."""
    portfolio = get_portfolio()
    ts = datetime.now().strftime("%H:%M:%S")

    # Phase 1: Analyst finds opportunities
    print(f"[{ts}] [ANALYST] Scanning...")
    analysis = agent_analyst(portfolio)
    recs = analysis.get("recommendations", [])
    if not recs:
        print(f"[{ts}] No opportunities. Cycle done.")
        return
    for r in recs:
        print(f"[{ts}] [ANALYST] {r['ticker']}: "
              f"{r['direction']} {r['confidence']}%")

    # Phase 2: Risk Manager evaluates
    print(f"[{ts}] [RISK] Evaluating {len(recs)} recs...")
    decisions = agent_risk(recs, portfolio).get("decisions", [])
    for d in decisions:
        action = d.get("action", "REJECT")
        if action == "APPROVE":
            print(f"[{ts}] [RISK] APPROVED: {d['ticker']} "
                  f"({d['approved_shares']} shares)")
        elif action == "REDUCE":
            print(f"[{ts}] [RISK] REDUCED: {d['ticker']} "
                  f"to {d['approved_shares']} ({d['reasoning']})")
        else:
            print(f"[{ts}] [RISK] REJECTED: {d['ticker']} "
                  f"({d['reasoning']})")

    # Phase 3: Execute approved trades
    for d in decisions:
        # A missing action is treated as REJECT, matching Phase 2
        if d.get("action", "REJECT") == "REJECT" or d.get("approved_shares", 0) == 0:
            continue
        try:
            order = MarketOrderRequest(
                symbol=d["ticker"], qty=d["approved_shares"],
                side=OrderSide.BUY, time_in_force=TimeInForce.DAY)
            alpaca.submit_order(order)
            # submit_order only confirms the order was accepted, not that it filled
            print(f"[{ts}] [EXEC] SUBMITTED: {d['ticker']}")
        except Exception as e:
            print(f"[{ts}] [EXEC] FAILED: {e}")

# Run every 30 minutes during market hours
while True:
    try:
        if alpaca.get_clock().is_open:
            run_cycle()
        else:
            print("Market closed. Skipping cycle.")
        print("Next cycle in 30 minutes...\n")
        time.sleep(1800)
    except KeyboardInterrupt:
        print("Stopped.")
        break
```

Four API calls per cycle. At 13 cycles per day (6.5 market hours, 30-minute intervals), that is 52 API calls.

Cost model assumptions: 13 cycles/day at 30-minute intervals, 4 API calls per cycle (~52 per day), Sonnet 4 pricing. Token usage: ~65K input + ~39K output per day = ~$0.79/day. Your costs will differ based on model choice, prompt length, and number of active tickers.

Here is the actual math using current Claude API pricing and Alpaca paper trading (base URL: https://paper-api.alpaca.markets/v2):

| Resource | Cost | Daily usage | Daily cost |
|---|---|---|---|
| Sonnet 4 input | $3 / 1M tokens | ~65K tokens (52 calls) | $0.20 |
| Sonnet 4 output | $15 / 1M tokens | ~39K tokens (52 calls) | $0.59 |
| Alpaca paper trading API | Free | Unlimited | $0.00 |
| Total | | | ~$0.79/day |
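The table's arithmetic can be reproduced in a few lines, which also makes it easy to plug in your own token counts. A small sketch; the `daily_cost` helper is hypothetical, and the prices and token figures are the ones quoted in the table:

```python
# USD per 1M tokens (input, output), as quoted in the table
SONNET_PRICES = (3.00, 15.00)

def daily_cost(input_tokens, output_tokens, prices=SONNET_PRICES):
    """Daily API cost in USD for the given token totals."""
    in_price, out_price = prices
    return input_tokens / 1e6 * in_price + output_tokens / 1e6 * out_price

input_cost = daily_cost(65_000, 0)    # input side only
output_cost = daily_cost(0, 39_000)   # output side only
total = daily_cost(65_000, 39_000)
print(f"input ${input_cost:.3f} + output ${output_cost:.3f} = ${total:.2f}/day")
```

The unrounded total comes to about $0.78; the table's $0.79 is the sum of the two rows after each is rounded up to the cent.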

Drop to Haiku 4.5 ($1/$5 per 1M tokens) for the Risk Manager and Monitor agents and you cut that by roughly a third, assuming those two agents account for about half of the tokens. Alpaca’s paper trading API is free with no commission. It uses the same endpoints as live trading, with a different base URL and API key.

How do you debug when a multi-agent trade goes wrong?

Log every cycle. Read the logs in order: Analyst reasoning, Risk Manager evaluation, execution result.

| Step | Check | Common Bug |
|---|---|---|
| Analyst | Were the signals real? Cross-reference against actual Unusual Whales data | Hallucinated data (rare, ~1% of analyses) |
| Risk Manager | Did it properly evaluate sector concentration? | Stale portfolio data from cached fetch |
| Risk Manager | Was it too lenient? | Prompt contamination from Analyst’s enthusiasm |
| Executor | Did the order fill at expected price? | Slippage on fast-moving stocks |

Most multi-agent bugs are stale data (fix: fresh portfolio fetch per cycle), prompt contamination (fix: adversarial instructions), or over-approval by Risk Manager (fix: stricter constraints, not looser).
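Reading the logs in order is easier when each cycle is written as one structured record. A minimal sketch of that idea using JSON Lines; the `log_cycle` and `read_cycles` helpers and the `cycles.jsonl` file name are assumptions, not part of the code shown earlier:

```python
import json
from datetime import datetime, timezone

def log_cycle(path, portfolio, recommendations, decisions, executions):
    """Append one cycle as a single JSON line so cycles can be replayed in order."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "portfolio": portfolio,
        "analyst": recommendations,
        "risk": decisions,
        "executions": executions,
    }
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record

def read_cycles(path):
    """Load every logged cycle, oldest first."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

A call such as `log_cycle("cycles.jsonl", portfolio, recs, decisions, results)` at the end of each cycle would capture the portfolio snapshot the agents actually saw, which is what you need to tell a stale-data bug apart from prompt contamination.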

What should you actually do?

- If you want to test the architecture: run 3 manual cycles on paper and look for a disagreement where Risk overrides Analyst. That proves the adversarial dynamic works.

- If you want production reliability: add the Monitor agent that checks portfolio health before every cycle and triggers emergency exits if circuit breaker conditions are met.

- If costs matter: stay on the free tier, run 10 cycles per day max, and cache sector lookups so you stop asking Claude “What sector is NVDA in?” fifty times a day.
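The sector-lookup caching mentioned in the last bullet can be as simple as memoizing the lookup function. In this sketch `fetch_sector` is a hypothetical stand-in for whatever expensive call you make today (an API request or a Claude prompt), and the call counter exists only to show the effect:

```python
from functools import lru_cache

CALLS = {"count": 0}  # counts upstream lookups, for illustration only

def fetch_sector(ticker):
    """Hypothetical stand-in for an expensive sector lookup."""
    CALLS["count"] += 1
    return {"NVDA": "Technology", "JPM": "Financials"}.get(ticker, "Unknown")

@lru_cache(maxsize=1024)
def sector_of(ticker):
    """Cached wrapper: each ticker hits the upstream lookup at most once per process."""
    return fetch_sector(ticker)

for _ in range(50):
    sector_of("NVDA")
print(CALLS["count"])  # upstream was called once, not fifty times
```

Sectors change rarely, so an in-process cache like this is usually safe for a trading day; persist it to disk if you restart the bot often.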

Bottom line

- Multi-agent beats single-agent not because it is smarter, but because forced specialization prevents the Analyst from being its own risk manager. The internal disagreement is the product.

- The cost difference between naive ($40/day) and optimized ($3/day) architectures is entirely about eliminating redundant API calls. Shared context, structured communication, sequential pipeline.

- The Risk Manager gets the last word on every trade. That is not a limitation. That is the design.

Frequently Asked Questions

How much does it cost to run a multi-agent trading system?

About $3 per day with the optimized architecture (4 API calls per cycle, 13 cycles per day). The naive version costs $40 per day because each agent fetches its own data. Shared context is the fix.

Why use 4 agents instead of 7 like the viral tweet?

Seven is overkill for retail-scale strategies. Every agent adds cost and latency. Four agents cover the minimum viable roles: find opportunities, check risk, execute trades, monitor portfolio. Add more only when you have evidence they improve decisions.

Can the Risk Manager override the Analyst in a multi-agent system?

Yes, and it should. The Risk Manager gets the last word on every trade. If the Analyst recommends 50 shares of NVDA but the portfolio is already 35% tech, the Risk Manager reduces to 20 shares or rejects entirely.
