>This covers building a 3-agent pipeline. Build AI Agents goes deeper on memory, deployment, voice agents, and selling multi-agent systems to clients.

Build AI Agents

Ship Your First Agent This Weekend and Start Landing $3,000/Month Clients

Summary:

- A single agent doing research, writing, and review produces first-draft quality. Three specialized agents produce second-draft quality, every time.

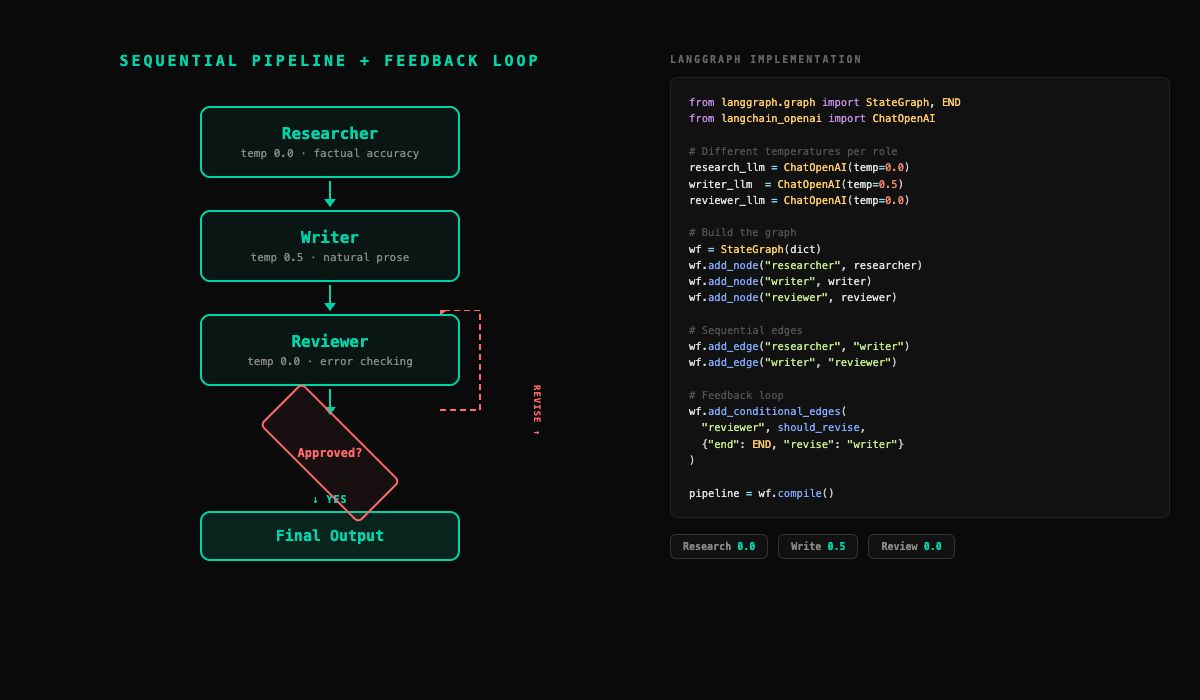

- Three orchestration patterns exist (sequential, parallel, hierarchical). Sequential is what you build first.

- Full LangGraph and CrewAI implementations below, with different temperatures per agent role.

- The feedback loop (reviewer sends draft back to writer) is where the real quality jump happens.

The first version of my content pipeline was one agent doing everything. Research, write, review, format. One prompt, one loop. The output was flat. Not wrong, just generic. Every report read like a slightly different version of the same template.

I split it into three agents with specialized roles: a researcher that only gathers information, a writer that only produces prose, a reviewer that only checks accuracy. Same LLM, same API costs. Single-agent output reads like a first draft. Multi-agent output reads like a second draft. And second-draft quality is what clients pay for.

Why does multi-agent produce better output?

Four reasons, all grounded in how LLMs actually work.

Focused context. A single agent carrying research, writing, and review instructions in one system prompt has a long, cluttered context. The model has more instruction to interpret and more chance of getting confused about what it’s currently supposed to be doing. A specialized researcher has a short, focused prompt: “Find thorough information on this topic. Return organized data with sources.” Short prompts produce more consistent behavior.

Role clarity. Tell one agent to “research, then write, then review your own work” and the review step is weak. The agent just wrote the content. Reviewing your own work is hard for humans and equally hard for LLMs. A separate reviewer reads the draft cold, the way an editor would, and catches things the writer missed.

Iterative improvement. The reviewer sends feedback to the writer. The writer revises. The reviewer checks again. This back-and-forth produces output polished through iteration, not generated in a single pass. Single-agent workflows generate once and stop.

Different temperatures. You can run the researcher at temperature 0.0 for factual accuracy, the writer at 0.5 for natural prose, and the reviewer at 0.0 because consistency matters more than creativity when checking facts. A single agent runs at one temperature for all three jobs.

The trade-off is complexity. For simple tasks (classification, data extraction), single-agent is cheaper and faster. For tasks that benefit from specialization and iteration, multi-agent is worth every line of extra code.

What are the three orchestration patterns?

Sequential (pipeline). Agent A finishes, passes the result to Agent B, who passes it to Agent C. Like an assembly line.

Researcher -> Writer -> Reviewer -> Final OutputUse it when the task has clear, ordered stages with different specialties. Content pipelines, data processing, document analysis. Easiest to build and debug.

Parallel. Multiple agents work simultaneously, results merged. Use when subtasks are independent (multi-source research, multi-language). The merger step is the hard part.

Hierarchical. A manager decomposes the task, assigns to workers, collects results. Most powerful, most complex. The manager is a single point of failure.

For your first multi-agent project, sequential is the right call. Everything below uses sequential.

How do you build a 3-agent pipeline?

LangGraph version

Three agents, three temperatures, wired into a state graph:

from langgraph.graph import StateGraph, END

from langchain_openai import ChatOpenAI

# Three LLMs with different temperatures

research_llm = ChatOpenAI(model="gpt-4o", temperature=0.0)

writer_llm = ChatOpenAI(model="gpt-4o", temperature=0.5)

reviewer_llm = ChatOpenAI(model="gpt-4o", temperature=0.0)

def researcher(state):

"""Gather information on the topic."""

topic = state["topic"]

research = research_llm.invoke(

f"Research this topic thoroughly. Return "

f"organized findings with sources: {topic}"

)

return {"research": research.content}

def writer(state):

"""Write a polished report from the research."""

research = state["research"]

feedback = state.get("review", "")

prompt = (

f"Write a well-structured, engaging report "

f"based on this research:\n\n{research}"

)

if feedback:

prompt += f"\n\nRevision feedback:\n{feedback}"

draft = writer_llm.invoke(prompt)

return {"draft": draft.content}

def reviewer(state):

"""Review the draft for accuracy and quality."""

draft = state["draft"]

research = state["research"]

review = reviewer_llm.invoke(

f"Review this draft against the original "

f"research. Flag any inaccuracies, weak "

f"sections, or missing information. If the "

f"draft is good, say APPROVED.\n\n"

f"Draft:\n{draft}\n\nResearch:\n{research}"

)

return {"review": review.content}

def should_revise(state):

"""Check if revision is needed."""

review = state.get("review", "")

revisions = state.get("revision_count", 0)

if revisions >= 3 or "APPROVED" in review:

return "end"

return "revise"

# Build the graph (includes feedback loop)

workflow = StateGraph(dict)

workflow.add_node("researcher", researcher)

workflow.add_node("writer", writer)

workflow.add_node("reviewer", reviewer)

workflow.add_edge("researcher", "writer")

workflow.add_edge("writer", "reviewer")

# Reviewer loops back to writer if not approved

workflow.add_conditional_edges(

"reviewer", should_revise,

{"end": END, "revise": "writer"}

)

workflow.set_entry_point("researcher")

pipeline = workflow.compile()Run it:

result = pipeline.invoke({"topic": "AI agent business models"})

print(result["review"])CrewAI version

CrewAI’s API reads more like English. You describe who each agent is, what their job is, and the framework handles the wiring. One thing worth noting: CrewAI is now a standalone framework, built from scratch and independent of LangChain. Its current architecture uses a decorator-based @CrewBase pattern with YAML config files for agents and tasks, though the inline syntax below works for quick builds.

from crewai import Agent, Task, Crew

researcher = Agent(

role="Research Analyst",

goal="Find thorough, accurate information",

backstory="You are a meticulous researcher who "

"leaves no stone unturned.",

llm=research_llm

)

writer = Agent(

role="Content Writer",

goal="Write clear, engaging, actionable reports",

backstory="You turn raw research into polished "

"prose that people actually want to read.",

llm=writer_llm

)

reviewer = Agent(

role="Quality Reviewer",

goal="Ensure accuracy and completeness",

backstory="You catch every error and weak argument "

"others miss.",

llm=reviewer_llm

)

research_task = Task(

description="Research {topic} thoroughly.",

agent=researcher

)

writing_task = Task(

description="Write a polished report based on "

"the research.",

agent=writer

)

review_task = Task(

description="Review the report for accuracy. "

"Flag issues or approve.",

agent=reviewer

)

crew = Crew(

agents=[researcher, writer, reviewer],

tasks=[research_task, writing_task, review_task],

verbose=True

)

result = crew.kickoff(inputs={"topic": "AI agent trends"})The CrewAI backstory field actually matters. A reviewer with “You catch every error others miss” produces more critical reviews than one with “You help improve content.” It’s a prompt engineering lever the framework exposes explicitly, and it’s one of CrewAI’s genuine strengths.

Both approaches produce a three-agent pipeline. LangGraph gives you more control over the flow. CrewAI gives you cleaner, more readable code. Output quality is comparable.

How does the feedback loop work?

Look at the should_revise function in the LangGraph code above. The reviewer says “APPROVED” if the draft is good, or provides specific feedback if it’s not. If not approved, add_conditional_edges routes back to the writer with the feedback. The writer function already handles this: it checks for state.get("review", "") and includes revision feedback in the prompt when present.

One revision round catches about 80% of the issues the reviewer flags. Two rounds catches 95%. Three or more rounds hit diminishing returns. Set your max at 2-3 revisions.

Always set a max. Without it, a disagreeable reviewer and an obedient writer will loop forever, burning tokens at every iteration.

What broke (and how to fix it)

Multi-agent pipelines have a failure mode that single agents don’t: cascading failures. If the researcher produces thin output, the writer has poor material to work with, and the reviewer has nothing meaningful to critique. The pipeline runs to completion with no errors, but the output is garbage.

Three specific problems I hit:

Thin researcher output. The researcher returned three paragraphs on a topic that needed three pages. The writer tried to expand thin research into a full report and hallucinated details to fill the gaps. The reviewer didn’t catch the hallucinations because it was comparing the draft against the (thin) research, which technically matched.

Fix: Validate between agents. After the researcher, check output length before passing it downstream.

def validate_research(state):

"""Reject thin research before it cascades."""

research = state.get("research", "")

if len(research.split()) < 200:

return {"research": research_llm.invoke(

f"Your research was too thin. Search at "

f"least 5 sources and include specific "

f"data points: {state['topic']}"

).content}

return stateReviewer rubber-stamping. The reviewer approved everything with generic “looks good” feedback. As useless as no reviewer at all.

Fix: Add this to the reviewer’s prompt: “You must identify at least one area for improvement in every draft, even if the draft is generally strong. If you cannot find a genuine issue, approve and explain why.” A reviewer forced to look for problems finds them.

Inter-agent contract violations. The writer returned a narrative essay when the downstream consumer expected structured sections with headers. No crash, just mismatched expectations.

Fix: Define output schemas for each agent. Even informal ones (“output must include H2 headers, bullet points, and a summary section”) prevent format drift between agents.

These validation steps add about 10 lines of code each. They transform the pipeline from “hope it works” to “catch problems before they compound.”

What should you actually do?

Here’s the decision tree for your next project:

Task is a single step (classify an email, extract data from a PDF, answer a question) -> Single agent. Multi-agent overhead isn’t worth it.

Task has 2-3 steps but they’re all the same specialty (search three sources, combine results) -> Single agent with better tools. You don’t need three agents. You need one agent with a better search tool.

Task has 3+ steps with different specialties (research, then write, then review) -> Sequential multi-agent pipeline. This is where the quality jump justifies the complexity.

Task needs “edited” quality (client-facing reports, proposals, customer emails) -> Multi-agent with feedback loop. The revision cycle is what produces polished output.

Task is complex and the decomposition isn’t obvious -> Hierarchical. But only after you’ve shipped at least one sequential pipeline. Walk before you run.

bottom_line

- Three agents beat one agent when the task has distinct stages. The quality improvement isn’t subtle. People consistently identify multi-agent output as “edited” quality without being told which is which.

- The feedback loop is where the real quality jump happens. A linear pipeline (research, write, review, done) is better than a single agent. A pipeline with revision loops is better than a linear pipeline. One revision round catches 80% of reviewer-flagged issues.

- Start with sequential, add complexity when you need it. Every multi-agent system I’ve built for clients started as a three-node pipeline. Some stayed that way. The ones that grew into parallel or hierarchical architectures grew because the use case demanded it, not because the architecture looked cooler on a whiteboard.

Frequently Asked Questions

How much more does a multi-agent pipeline cost than a single agent?+

About 2-3x more. A single research agent costs ~$0.02 per run. A 3-agent pipeline costs ~$0.05-0.06. At a $2,000/month client retainer, that's $75-90/month in API costs.

When should I use multi-agent instead of a single agent?+

When the task has 3+ steps with different specialties, or when output needs 'edited' quality. For simple tasks like classification or data extraction, single-agent is cheaper and faster.

Which framework is best for multi-agent systems?+

CrewAI has the cleanest multi-agent code. LangGraph gives more control over the flow. Both produce comparable output quality. Pick based on whether you want readability or control.

More from this Book

Build Your First AI Agent in 60 Minutes

Build a working AI research agent in 60 minutes with LangChain and LangGraph. Complete copy-paste Python code plus a no-code n8n path. $0.02 per run.

from: Build AI Agents

Deploy Your AI Agent to a Live URL in 30 Minutes

Take your Python AI agent from localhost to a public URL using FastAPI and Railway. Free tier hosting plus rate limiting and API key security included.

from: Build AI Agents

LangChain vs CrewAI vs AutoGen — One Clear Winner

Honest comparison of LangChain, CrewAI, AutoGen, and n8n from someone who built with all four. Includes a 5-question decision framework to stop second-guessing.

from: Build AI Agents