How to Turn One Idea into 30 Posts with AI Agents

>This covers the content pipeline. OpenClaw: The Money-Making Guide includes the full Content Engine project plus 6 other revenue-generating agent builds.


Summary:

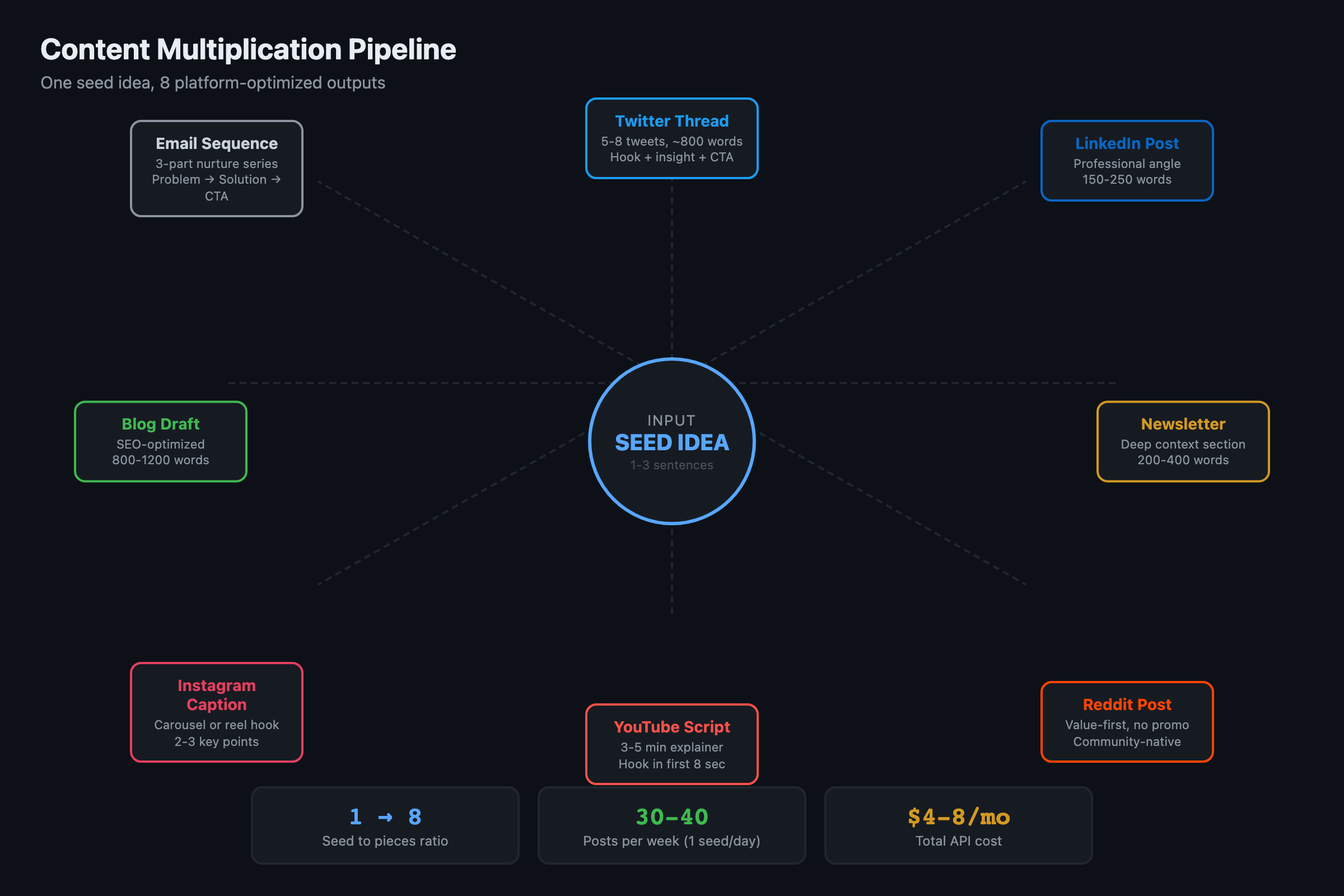

- Build a pipeline that takes one idea and generates 6-8 platform-optimized posts.

- Working code for the seed expander, content queue, and scheduling algorithm.

- The engagement feedback loop that makes content improve after every post.

- Complete system runs on 30 minutes/week of your time.

Solo creators posting consistently across three platforms can generate $5,000-$15,000/month in audience-driven revenue (sponsorships, affiliate deals, course sales, consulting inbound). That range assumes 3+ active platforms, 100K+ combined followers, and active monetization. Most creators take 6-12 months of consistent posting to reach the low end.

The bottleneck is never ideas. It is the grind of turning one idea into seven platform-specific pieces and doing it five days a week without burning out. This tutorial builds the machine that handles the multiplication.

You still supply the insight. The AI handles the 80% that is not insight: formatting, platform optimization, scheduling, and performance tracking.

How does the content multiplication framework work?

One good idea yields at minimum:

| Platform | Format | Length |
|---|---|---|
| Twitter/X | Thread (5-8 tweets) | ~800 words total |
| Twitter/X | Standalone hot take | 1-2 sentences |
| LinkedIn | Professional angle | 150-250 words |
| Newsletter | Deep context section | 200-400 words |
| Short-form | Hooks for later reuse | 2-3 one-liners |

That is 6-8 pieces from a single seed. At one idea per weekday, you produce 30-40 posts per week. Most creators struggle to produce 5.

How do you build the seed expander?

The seed is a rough idea in 1-3 sentences. Not polished. Not edited. Just the insight.

```text
SEED: Most people use AI agents wrong. They try to automate
creative work and manually do repetitive work. Flip it. Automate
the repetitive stuff (email, scheduling, data entry) and use AI
as a thinking partner for creative work.
```

From that seed, the expander generates platform-specific content using different prompts per platform:

```python
from datetime import datetime

PLATFORM_PROMPTS = {
    "twitter_thread": """Transform this seed into a Twitter/X thread (5-8 tweets).

Rules:
- First tweet is a hook that creates curiosity or controversy
- Each tweet stands alone but builds on the previous
- Include specific numbers or examples where possible
- Last tweet: clear takeaway + soft CTA
- No hashtags. Max 1-2 emojis total.
- Tone: confident, specific, slightly contrarian

SEED: {seed}

Previous top performers for voice reference: {top_threads}""",

    "linkedin": """Transform this seed into a LinkedIn post.

Rules:
- Open with a one-line hook (pattern interrupt)
- Short paragraphs (1-2 sentences each)
- Professional but not corporate
- End with a question to drive comments
- Length: 150-250 words
- 3 hashtags max at the end

SEED: {seed}""",

    "newsletter": """Transform this seed into a newsletter section.

Rules:
- Deeper analysis than social posts
- Include context that Twitter doesn't have room for
- 200-400 words
- Conversational tone, like explaining to a smart friend
- One actionable takeaway

SEED: {seed}""",
}


async def expand_seed(seed, platforms, top_performers=None):
    """Expand a single seed into multi-platform content."""
    results = {}
    for platform in platforms:
        # str.format ignores unused keyword arguments, so prompts without
        # a {top_threads} placeholder are unaffected.
        prompt = PLATFORM_PROMPTS[platform].format(
            seed=seed,
            top_threads=top_performers or "None yet",
        )
        # generate() is assumed to be your async LLM client wrapper;
        # it is not defined in this excerpt.
        content = await generate(
            prompt=prompt,
            model="standard",   # Tier 2 for writing quality
            temperature=0.8,    # Higher creativity for content
        )
        results[platform] = {
            "content": content,
            "status": "draft",
            "created": datetime.now().isoformat(),
        }
    return results
```

The temperature setting matters. Email triage uses 0 (deterministic). Content generation uses 0.7-0.8. You want variation and personality, not robotic repetition.
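The prompts state length and format rules, but nothing in the expander checks that a draft actually obeys them. A minimal sketch of a post-generation check follows. The word limits and tweet count come from the prompts; `validate_draft`, `WORD_LIMITS`, and the assumption that the model separates tweets with blank lines are illustrative, not part of the original pipeline.

```python
import re

# Length rules copied from the platform prompts.
WORD_LIMITS = {
    "linkedin": (150, 250),
    "newsletter": (200, 400),
}
TWEET_COUNT = (5, 8)


def word_count(text: str) -> int:
    """Count whitespace-separated tokens."""
    return len(re.findall(r"\S+", text))


def validate_draft(platform: str, content: str) -> list[str]:
    """Return a list of rule violations; an empty list means the draft passes."""
    problems = []
    if platform == "twitter_thread":
        # Assumption: tweets in the generated thread are separated by blank lines.
        tweets = [t.strip() for t in content.split("\n\n") if t.strip()]
        low, high = TWEET_COUNT
        if not low <= len(tweets) <= high:
            problems.append(f"thread has {len(tweets)} tweets, expected {low}-{high}")
        for i, tweet in enumerate(tweets, start=1):
            # The thread prompt says "No hashtags".
            if re.search(r"#[A-Za-z]", tweet):
                problems.append(f"tweet {i} contains a hashtag")
    elif platform in WORD_LIMITS:
        low, high = WORD_LIMITS[platform]
        n = word_count(content)
        if not low <= n <= high:
            problems.append(f"{platform} draft has {n} words, expected {low}-{high}")
    return problems
```

A draft that returns a non-empty list could be regenerated automatically before it ever reaches your review queue.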

How do you schedule posts at optimal times?

Not all posting windows are equal. Buffer’s 2026 frequency guide, based on data from 100,000+ users, recommends 3-4 posts/day on X, 2-5/week on LinkedIn, and 3-5/week on Instagram. Their finding: consistent posters get 5x more engagement than sporadic ones. The no-post penalty was real across every platform.

The queue manager picks slots based on platform-specific engagement data:

```python
from datetime import datetime, timedelta
from zoneinfo import ZoneInfo

# Days use Python's weekday() numbering: Monday=0 ... Sunday=6.
OPTIMAL_WINDOWS = {
    "twitter_thread": [
        {"days": [1, 2, 3], "hour": 9},               # Tue-Thu 9am
        {"days": [0, 1, 2, 3, 4], "hour": 12},        # Weekdays noon
    ],
    "twitter_post": [
        {"days": [0, 1, 2, 3, 4], "hours": [8, 17]},  # Weekdays morning/evening
        {"days": [5, 6], "hour": 10},                 # Weekends 10am
    ],
    "linkedin": [
        {"days": [1, 2, 3], "hours": [7, 8, 12]},     # Tue-Thu morning/noon
    ],
    "newsletter": [
        {"days": [1, 3], "hour": 7},                  # Tue/Thu 7am
    ],
}


def get_next_slot(platform, timezone="America/New_York"):
    """Find the next optimal posting slot at least 2 hours from now."""
    # Use a timezone-aware "now" so it can be compared with the candidates.
    now = datetime.now(ZoneInfo(timezone))
    candidates = []
    for window in OPTIMAL_WINDOWS[platform]:
        # A window defines either a single "hour" or a list of "hours".
        hours = window.get("hours", [window.get("hour")])
        for day in window["days"]:
            for hour in hours:
                # next_occurrence() is assumed to return a timezone-aware
                # datetime; it is not defined in this excerpt.
                candidate = next_occurrence(day, hour, timezone)
                if candidate - now > timedelta(hours=2):
                    candidates.append(candidate)
    if candidates:
        # Return the earliest qualifying slot, not just the first one found.
        return min(candidates)
    # Fallback: next weekday at 9am
    return next_weekday_morning(timezone)
```

The review loop: Generated content goes to your phone (Telegram, Discord) for a quick check. You tap approve, edit, or regenerate. The entire review for a full day’s content takes 2-3 minutes.
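The scheduler calls two helpers, `next_occurrence` and `next_weekday_morning`, that are not shown. One possible sketch, assuming Python 3.9+ with `zoneinfo`, is below. The `now` parameter and the option to pass a `tzinfo` object instead of an IANA name are additions for testability, not something the original code specifies.

```python
from datetime import datetime, timedelta
from zoneinfo import ZoneInfo


def next_occurrence(day, hour, timezone="America/New_York", now=None):
    """Return the next datetime that falls on `day` (Monday=0) at `hour`:00.

    `timezone` may be an IANA name or a tzinfo object. `now` is injectable
    for testing and defaults to the current time in that timezone.
    """
    tz = ZoneInfo(timezone) if isinstance(timezone, str) else timezone
    now = now.astimezone(tz) if now is not None else datetime.now(tz)
    days_ahead = (day - now.weekday()) % 7
    candidate = (now + timedelta(days=days_ahead)).replace(
        hour=hour, minute=0, second=0, microsecond=0
    )
    if candidate <= now:
        # Today's slot has already passed, so take the same slot next week.
        candidate += timedelta(days=7)
    return candidate


def next_weekday_morning(timezone="America/New_York", now=None):
    """Fallback slot: the earliest upcoming Monday-Friday 9am."""
    return min(next_occurrence(day, 9, timezone, now) for day in range(5))
```

Both helpers return timezone-aware datetimes, so the caller has to compare them against an aware `now` as well; mixing aware and naive datetimes raises a `TypeError`.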

Platform access requirements:

- Twitter/X API: the free tier has allowed about 1,500 posts/month, but X has changed its tiers and limits several times.

- LinkedIn API: requires a company page and an approved app for posting.

- Newsletter: most platforms (ConvertKit, Beehiiv) have free tiers up to 1,000 subscribers.

- Analytics: access varies, and some platforms require paid plans.

Check each platform’s current API limits before building.

What makes this system actually get better over time?

This is the part most content tools miss. The engagement monitor tracks performance and feeds winners back into the pipeline.

```python
async def get_top_performers(days=30, limit=10):
    """Get your best-performing content from the last N days."""
    # load_content_from_days() is assumed to read your stored posts;
    # it is not defined in this excerpt.
    recent = load_content_from_days(days)
    # Keep only posts that already have an engagement rate recorded.
    scored = [
        c for c in recent
        if c.get("metrics") and c["metrics"].get("engagement_rate")
    ]
    scored.sort(key=lambda c: c["metrics"]["engagement_rate"], reverse=True)
    return scored[:limit]


async def expand_with_feedback(seed, platforms):
    """Expand a seed using top performers as voice reference."""
    top = await get_top_performers()
    # Feed winners into the expander prompt
    reference = "\n".join(
        f'{i+1}. [{c["platform"]}] "{c["content"][:80]}..." '
        f'-- {c["metrics"]["engagement_rate"]:.1%} engagement'
        for i, c in enumerate(top[:5])
    )
    return await expand_seed(seed, platforms, top_performers=reference)
```

After 30 days, the expander's prompt carries the angles that resonate with your audience. After 90 days, it generates content calibrated by thousands of real data points. Every post informs the next one.

How do you track which posts actually work?

Collect metrics 24 hours after posting. This is the data that feeds the feedback loop:

```python
async def collect_post_metrics(post_id, platform):
    """Pull engagement metrics for a published post."""
    # twitter_client and linkedin_client are assumed API wrappers;
    # they are not defined in this excerpt.
    if platform.startswith("twitter"):  # covers "twitter_thread" and "twitter_post"
        metrics = await twitter_client.get_tweet_metrics(post_id)
        engagement_rate = (
            (metrics["likes"] + metrics["retweets"] + metrics["replies"])
            / max(metrics["impressions"], 1)  # avoid division by zero
        )
        return {
            "impressions": metrics["impressions"],
            "engagement_rate": round(engagement_rate, 4),
            "bookmarks": metrics["bookmarks"],  # Best signal for quality
            "top_metric": "bookmarks",
        }

    if platform == "linkedin":
        metrics = await linkedin_client.get_post_analytics(post_id)
        return {
            "impressions": metrics["impressions"],
            "engagement_rate": round(metrics["engagement_rate"], 4),
            "comments": metrics["comments"],  # LinkedIn rewards comments
            "top_metric": "comments",
        }

    # Bookmarks on Twitter and comments on LinkedIn are
    # better quality signals than likes. Likes are cheap.
    # Someone bookmarking your post means they plan to come back.

    # No collector for other platforms (e.g. newsletter) yet.
    return None
```

What broke when I built this?

Problem 1: Everything sounded the same. The first week of generated content was technically correct and completely forgettable. Every thread opened with “Here’s the thing about X.” Every LinkedIn post had the same paragraph rhythm.

The fix: raise temperature to 0.8 and include your top 5 performing posts as voice reference in the prompt. The model mimics the patterns that actually work for your audience instead of defaulting to generic AI patterns.

Problem 2: Content queue got stale. I generated a week of content on Sunday, but by Wednesday a trending topic made half of it irrelevant.

The fix: generate 3 days ahead, not 7. Keep 2 “flex slots” per week for timely content. When something breaks in your space, swap out a scheduled post for a real-time reaction.
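One way to enforce the three-day horizon is to ask, each morning, which of the next three days still lack a scheduled post. A minimal sketch, assuming the queue can be reduced to a set of dates; `HORIZON_DAYS` and `days_needing_content` are hypothetical names, not part of the original pipeline.

```python
from datetime import date, timedelta

HORIZON_DAYS = 3  # generate 3 days ahead, not 7


def days_needing_content(scheduled_days, today):
    """Return the dates within the horizon that have nothing scheduled yet."""
    horizon = [today + timedelta(days=i) for i in range(1, HORIZON_DAYS + 1)]
    return [d for d in horizon if d not in scheduled_days]
```

Days left empty on purpose act as the flex slots: the function keeps reporting them until you fill them with a timely post.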

Problem 3: Newsletter sections read like padded tweets. The newsletter prompt produced shallow content because it used the same seed without additional context.

The fix: add a “context expansion” step for newsletter sections. The prompt asks for deeper analysis, specific examples, and one actionable takeaway that social posts do not have room for. The newsletter section should reward subscribers with something they cannot get from your free feed.

Human review guardrail: Do not auto-publish for the first 30 days. Queue all posts for manual review. After 30 days with zero corrections, auto-publish low-risk content (quotes, reshares). Keep human review on original takes and anything mentioning specific people or companies.
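The guardrail can be written as a single gate in front of the publisher. This is a sketch under stated assumptions: the `QueuedPost` fields, the `"quote"` and `"reshare"` labels, and the `can_auto_publish` name are hypothetical, and you would need to track corrections and entity mentions yourself.

```python
from dataclasses import dataclass
from datetime import date

REVIEW_PERIOD_DAYS = 30                 # manual review for the first 30 days
LOW_RISK_KINDS = {"quote", "reshare"}   # hypothetical labels for low-risk content


@dataclass
class QueuedPost:
    kind: str                 # e.g. "quote", "reshare", "original"
    mentions_entities: bool   # True if it names specific people or companies


def can_auto_publish(post, pipeline_start, corrections_in_period, today=None):
    """Return True only when every condition of the guardrail is met."""
    today = today or date.today()
    if (today - pipeline_start).days < REVIEW_PERIOD_DAYS:
        return False   # still inside the manual-review period
    if corrections_in_period > 0:
        return False   # the period was not correction-free
    if post.mentions_entities:
        return False   # named people or companies always get human review
    return post.kind in LOW_RISK_KINDS
```

Anything the gate rejects simply stays in the manual review queue, so the failure mode is extra review rather than an unreviewed post.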

What should you actually do?

- If you post inconsistently across platforms: start with a single platform (Twitter/X is fastest to test). Generate 5 seeds for the week on Sunday, expand them, schedule them. Do this for 2 weeks before adding a second platform.

- If you already post regularly but manually: add the feedback loop first. Track engagement on your existing posts for 30 days. Then feed that data into the expander to match your proven voice.

- If you are building an audience from zero: focus on thread format. Threads get 3-5x more reach than standalone posts. Generate 3 threads per week from your best ideas and post them Tuesday through Thursday at 9am.

Bottom line:

- One idea in, 6-8 posts out. The multiplication happens automatically. Your weekly time investment drops to 30 minutes: pick seeds on Sunday, 3-minute daily review.

- The engagement feedback loop is what separates this from “AI writes my tweets.” After 90 days, the system generates content validated by your actual audience data. Not generic. Yours.

- Start with one platform and 5 seeds per week. Expand only after the first 30 days of performance data prove the system works for your audience.

Frequently Asked Questions

How many posts can you get from a single idea with AI?

One seed idea produces 6-8 pieces: a Twitter thread, a standalone post, a LinkedIn post, a newsletter section, and 2-3 short-form hooks. At one idea per weekday, that is 30-40 posts per week.

Does AI-generated social media content actually perform well?

Raw AI output performs badly. AI content with a human editing pass and a performance feedback loop performs well. The system gets better over time because it learns which patterns your audience responds to.

How much does it cost to run an AI content pipeline?

About $4-8/month in API costs using a mid-tier model for content generation. The expensive models are overkill for social media drafts.
